Covariate-informed Representation Learning with Samplewise Optimal Identifiable Variational Autoencoders

Feb 16, 2022

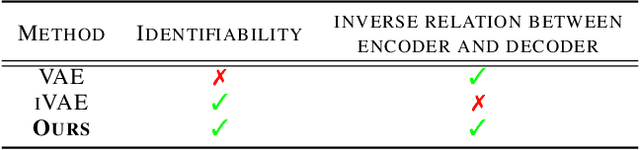

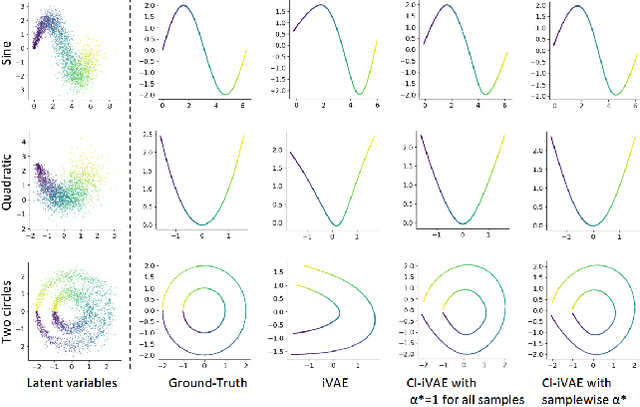

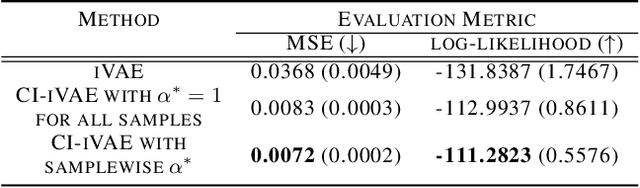

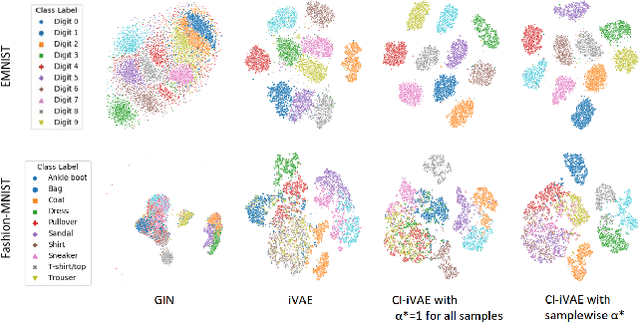

The recently proposed identifiable variational autoencoder (iVAE, Khemakhem et al. (2020)) framework provides a promising approach for learning latent independent components of the data. Although identifiability is appealing, the iVAE objective function does not enforce the inverse relation between encoders and decoders. Without the inverse relation, representations from the iVAE encoder may not reconstruct the observations, i.e., the representations lose information about the observations. To overcome this limitation, we develop a new approach, covariate-informed identifiable VAE (CI-iVAE). Unlike previous iVAE implementations, our method critically leverages the posterior distribution of latent variables conditioned only on observations. In doing so, the objective function enforces the inverse relation, and the learned representations contain more information about the observations. Furthermore, CI-iVAE extends the original iVAE objective function to a larger class and finds the optimal one among them, thus providing a better fit to the data. Theoretically, our method has tighter evidence lower bounds (ELBOs) than the original iVAE. We demonstrate that our approach can more reliably learn features of various synthetic datasets, two benchmark image datasets (EMNIST and Fashion MNIST), and a large-scale brain imaging dataset for adolescent mental health research.
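
To make the "larger class of objectives" and "tighter ELBO" statements concrete, the following is a minimal sketch rather than the paper's exact formulation. The symbols $x$ (observation), $u$ (covariate), $z$ (latent variable), $\theta$, $\phi$, and the mixture weight $\epsilon$ are notation assumed here, and the mixture family is only one illustrative way to combine an encoder conditioned on $(x, u)$ with one conditioned on $x$ alone.

```latex
% Assumed notation: x = observation, u = covariate, z = latent variable.
% For ANY distribution q(z) over the latents, Jensen's inequality gives a valid bound:
\begin{align}
\log p_\theta(x \mid u)
  &\ge \mathbb{E}_{q(z)}\big[\log p_\theta(x, z \mid u) - \log q(z)\big]
  =: \mathrm{ELBO}(x, u;\, q).
\end{align}
% The usual iVAE-style choice is q(z) = q_\phi(z \mid x, u).
% Illustrative family (an assumption, not taken from the abstract):
% mix the covariate-aware posterior with the observation-only posterior.
\begin{align}
q_\epsilon(z) &= \epsilon\, q_\phi(z \mid x, u) + (1 - \epsilon)\, q_\phi(z \mid x),
  \qquad \epsilon \in [0, 1], \\
\max_{\epsilon \in [0, 1]} \mathrm{ELBO}(x, u;\, q_\epsilon)
  &\ge \mathrm{ELBO}\big(x, u;\, q_\phi(z \mid x, u)\big).
\end{align}
```

Because $\epsilon = 1$ recovers the covariate-aware encoder, choosing the best $\epsilon$ separately for each sample can never give a looser bound than fixing $\epsilon = 1$. This is the generic sense in which optimizing over a larger family of objectives yields a bound at least as tight.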
