Coupling Symbolic Reasoning with Language Modeling for Efficient Longitudinal Understanding of Unstructured Electronic Medical Records

Paper and Code

Aug 07, 2023

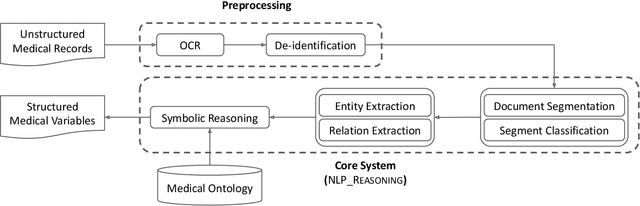

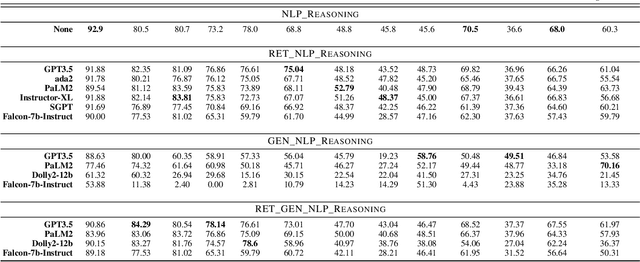

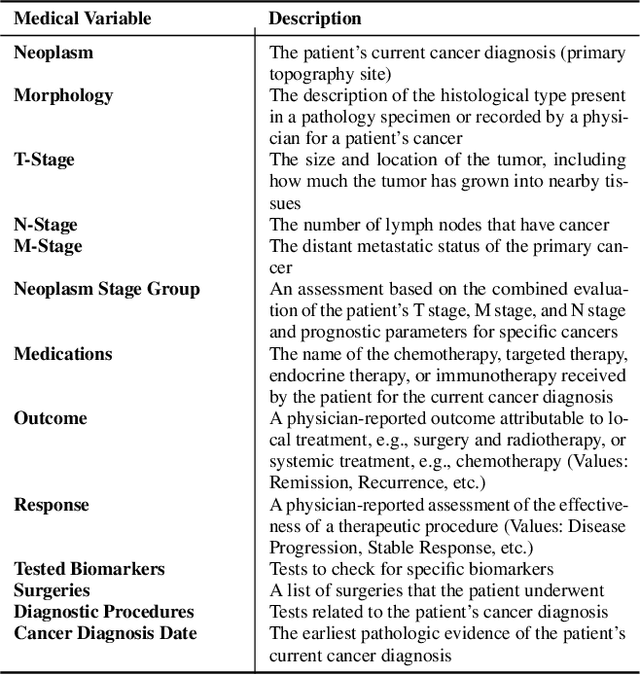

The application of Artificial Intelligence (AI) in healthcare has been revolutionary, especially with the recent advancements in transformer-based Large Language Models (LLMs). However, the task of understanding unstructured electronic medical records remains a challenge given the nature of the records (e.g., disorganization, inconsistency, and redundancy) and the inability of LLMs to derive reasoning paradigms that allow for comprehensive understanding of medical variables. In this work, we examine the power of coupling symbolic reasoning with language modeling toward improved understanding of unstructured clinical texts. We show that such a combination improves the extraction of several medical variables from unstructured records. In addition, we show that the state-of-the-art commercially-free LLMs enjoy retrieval capabilities comparable to those provided by their commercial counterparts. Finally, we elaborate on the need for LLM steering through the application of symbolic reasoning as the exclusive use of LLMs results in the lowest performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge