Cooperative learning for multi-view analysis

Paper and Code

Jan 06, 2022

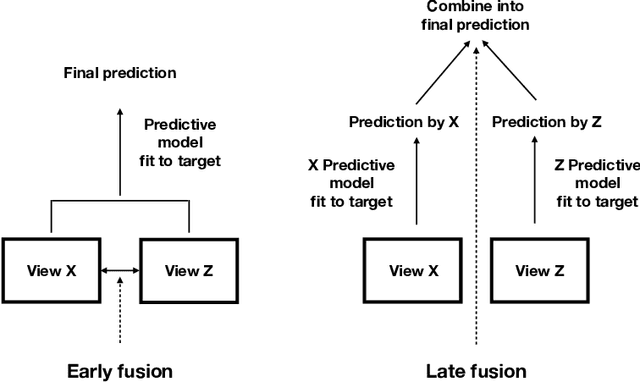

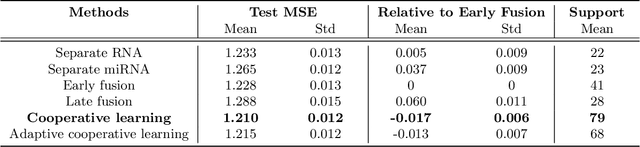

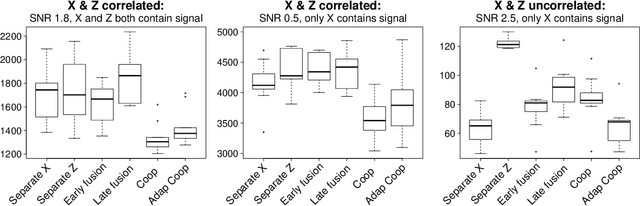

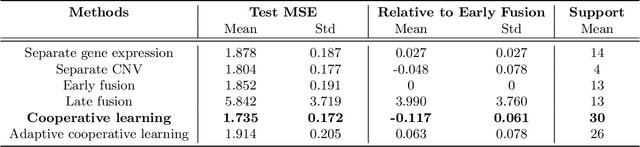

We propose a new method for supervised learning with multiple sets of features ("views"). Cooperative learning combines the usual squared error loss of predictions with an "agreement" penalty that encourages the predictions from different data views to agree. By varying the weight of the agreement penalty, we get a continuum of solutions that includes the well-known early and late fusion approaches. Cooperative learning chooses the degree of agreement (or fusion) adaptively, using a validation set or cross-validation to estimate test set prediction error. One version of our fitting procedure is modular: one can choose a different fitting mechanism (e.g., lasso, random forests, boosting, neural networks) appropriate for each data view. In the setting of cooperative regularized linear regression, the method combines the lasso penalty with the agreement penalty. The method can be especially powerful when the different data views share some underlying relationship in their signals that we aim to strengthen, while each view has its own idiosyncratic noise that we aim to reduce. We illustrate the effectiveness of our proposed method on simulated and real data examples.
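To make the agreement penalty concrete, here is a minimal sketch for two views with linear predictors. The abstract does not state the objective, so the form used here is an assumption: minimize `0.5 * ||y - X bx - Z bz||^2 + 0.5 * rho * ||X bx - Z bz||^2`, where `rho` is the agreement weight and `rho = 0` reduces to ordinary least squares on the concatenated features (early fusion). The sketch swaps the lasso penalty mentioned above for a tiny ridge term so that it needs only NumPy; the names `fit_cooperative` and `select_rho` are hypothetical and not taken from the authors' code.

```python
import numpy as np

def fit_cooperative(X, Z, y, rho, ridge=1e-8):
    """Fit two-view linear predictors under an agreement penalty.

    Assumed objective (not stated in the abstract):
        0.5 * ||y - X bx - Z bz||^2 + 0.5 * rho * ||X bx - Z bz||^2
    It is solved as one least-squares problem on augmented data.
    A small ridge term stands in for the lasso penalty.
    """
    n, px = X.shape
    s = np.sqrt(rho)
    # The top block fits y; the bottom block penalizes disagreement
    # between the two views' predictions.
    A = np.vstack([np.hstack([X, Z]), np.hstack([-s * X, s * Z])])
    b = np.concatenate([y, np.zeros(n)])
    coef = np.linalg.solve(A.T @ A + ridge * np.eye(A.shape[1]), A.T @ b)
    return coef[:px], coef[px:]

def select_rho(X, Z, y, X_val, Z_val, y_val, rhos):
    """Pick the agreement weight with the lowest validation error."""
    best = None
    for rho in rhos:
        bx, bz = fit_cooperative(X, Z, y, rho)
        err = np.mean((y_val - X_val @ bx - Z_val @ bz) ** 2)
        if best is None or err < best[1]:
            best = (rho, err, bx, bz)
    return best  # (rho, validation MSE, bx, bz)
```

Calling `select_rho` with a grid such as `[0.0, 0.1, 1.0, 10.0]` mirrors the adaptive choice of the degree of agreement described above, with a single held-out validation set standing in for cross-validation.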
