ConvNets for Counting: Object Detection of Transient Phenomena in Steelpan Drums

Paper and Code

Feb 01, 2021

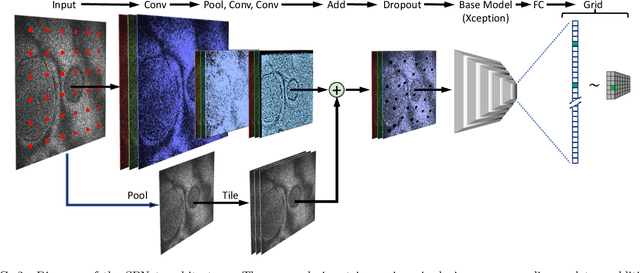

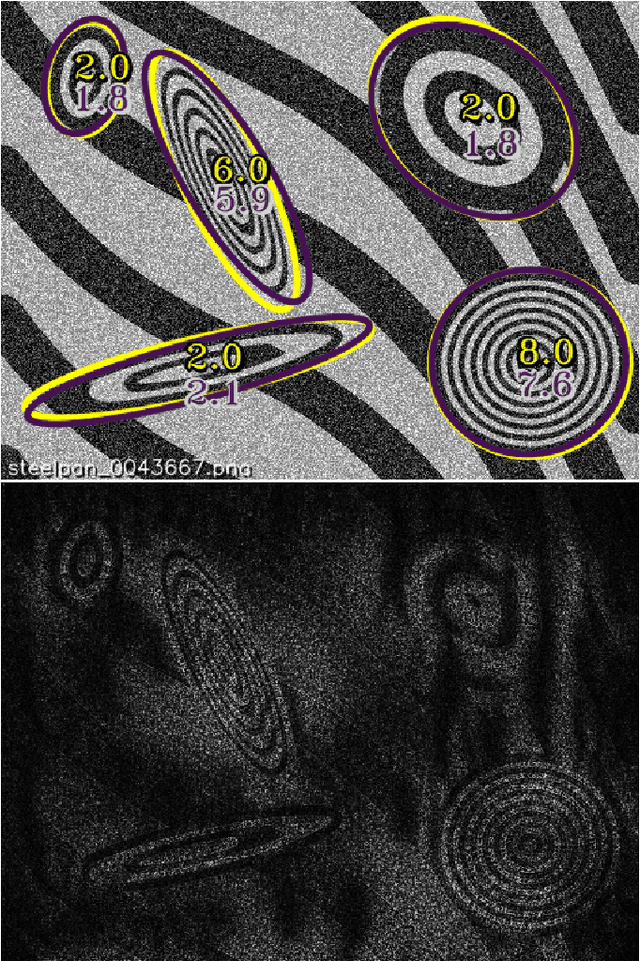

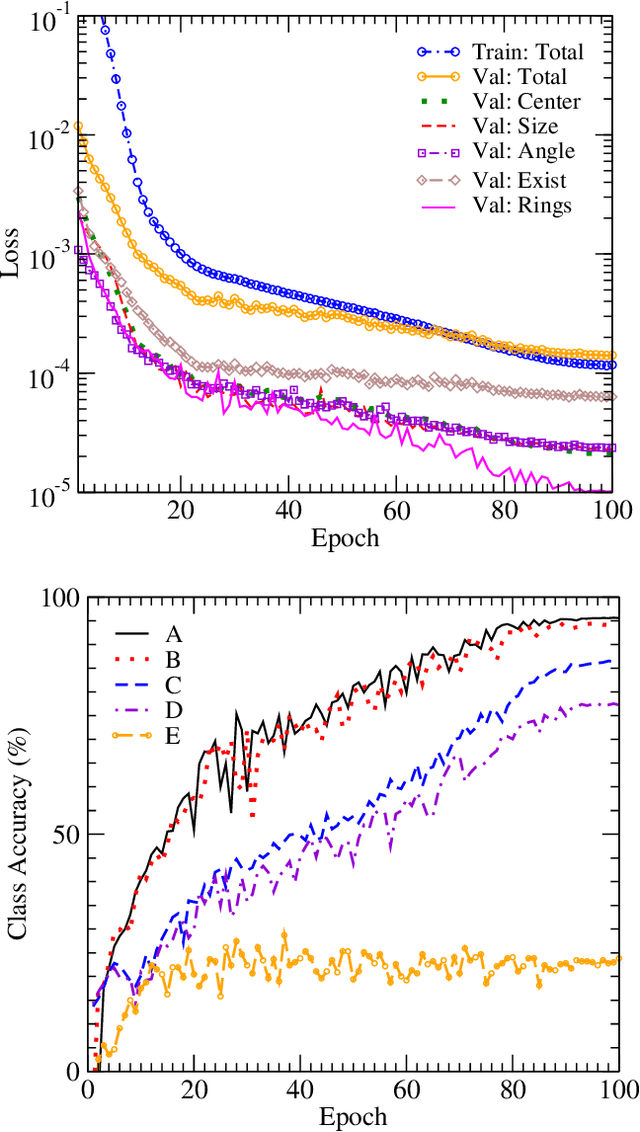

We train an object detector built from convolutional neural networks to count interference fringes in elliptical antinode regions visible in frames of high-speed video recordings of transient oscillations in Caribbean steelpan drums illuminated by electronic speckle pattern interferometry (ESPI). The annotations provided by our model, "SPNet," are intended to contribute to the understanding of time-dependent behavior in such drums by tracking the development of sympathetic vibration modes. The system is trained on a dataset of crowdsourced human-annotated images obtained from the Zooniverse Steelpan Vibrations Project. Because relatively few human-annotated images are available, we also train on a large corpus of synthetic images whose visual properties have been matched to those of the real images by using a Generative Adversarial Network to perform style transfer. Applying the model to predict annotations for thousands of unlabeled video frames, we can track features and measure oscillations consistent with audio recordings of the same drum strikes. One surprising result is that the machine-annotated video frames reveal transitions between the first and second harmonics of drum notes that significantly precede the corresponding transitions in the audio recordings. As this paper primarily concerns the development of the model, deeper physical insights await its further application.
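To make the counting task concrete, the following minimal sketch renders a single idealized, axis-aligned elliptical antinode with a chosen number of dark rings and then counts them along the semi-major axis. It is only an illustration under stated assumptions. It is not SPNet, which is a ConvNet object detector, and it is not the synthetic-data pipeline described above, which also involves GAN-based style transfer. The function names `synthetic_antinode` and `count_dark_rings`, the cosine fringe profile, and the threshold of 0.5 are all hypothetical choices made for this example.

```python
import math


def synthetic_antinode(width, height, cx, cy, a, b, n_fringes):
    """Render an idealized elliptical antinode with n_fringes dark rings.

    Hypothetical toy model: intensity follows a cosine of the normalized
    elliptical radius. Returns a list of `height` rows, each holding
    `width` intensities in [0, 1].
    """
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            # Normalized elliptical radius: 0 at the centre, 1 on the boundary.
            r = math.hypot((x - cx) / a, (y - cy) / b)
            if r <= 1.0:
                row.append(0.5 * (1.0 + math.cos(2.0 * math.pi * n_fringes * r)))
            else:
                row.append(1.0)  # flat bright background outside the antinode
        image.append(row)
    return image


def count_dark_rings(image, cx, cy, a):
    """Count bright-to-dark transitions from the centre to the ellipse edge.

    Assumes a noise-free image; real ESPI frames contain speckle, which is
    one reason a learned detector is used instead of a simple threshold.
    """
    profile = image[cy][cx:cx + a + 1]
    count = 0
    for prev, cur in zip(profile, profile[1:]):
        if prev >= 0.5 and cur < 0.5:
            count += 1
    return count


image = synthetic_antinode(width=256, height=128, cx=128, cy=64,
                           a=100, b=40, n_fringes=5)
rings = count_dark_rings(image, cx=128, cy=64, a=100)
print(rings)
```

On this noise-free toy image, the counted number of dark rings matches the `n_fringes` value used to render it. The threshold-based count would not be expected to survive the speckle noise, overlapping antinodes, and varying contrast of real frames.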
