Convergence of SGD with momentum in the nonconvex case: A novel time window-based analysis

May 27, 2024

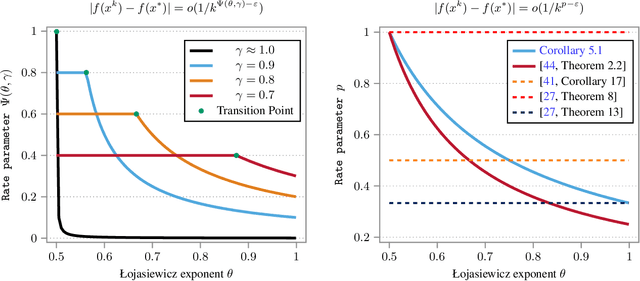

We propose a novel time window-based analysis technique to investigate the convergence behavior of the stochastic gradient descent method with momentum (SGDM) in nonconvex settings. Despite its popularity, the convergence behavior of SGDM remains poorly understood in nonconvex scenarios. This is primarily due to the absence of a sufficient descent property and challenges in controlling stochastic errors in an almost sure sense. To address these challenges, we study the behavior of SGDM over specific time windows, rather than examining the descent of consecutive iterates as in traditional analyses. This time window-based approach simplifies the convergence analysis and enables us to establish the first iterate convergence result for SGDM under the Kurdyka-Łojasiewicz (KL) property. Based on the underlying KL exponent and the utilized step size scheme, we further characterize local convergence rates of SGDM.
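For readers unfamiliar with the method under study, the following is a minimal sketch of one common form of SGDM (heavy-ball momentum with an exponentially averaged gradient) on a toy nonconvex objective. It is an illustration only: the exact SGDM variant, step size scheme, and noise assumptions analyzed in the paper are not stated in the abstract, so the update rule, the names `sgdm` and `toy_grad`, the polynomial step size `alpha0 / (k + 1) ** 0.75`, and the Gaussian noise model are all assumptions. The time window-based analysis itself is not reproduced here.

```python
import numpy as np


def toy_grad(x):
    """Gradient of the nonconvex toy objective f(x) = (x^2 - 1)^2 / 4.

    f has critical points at -1, 0, and 1 (hypothetical example objective).
    """
    return x * (x ** 2 - 1.0)


def sgdm(grad, x0, steps=5000, beta=0.9, alpha0=0.5, noise_std=0.1, seed=0):
    """Run SGD with momentum and return the final iterate.

    Assumed update (one common convention, not necessarily the paper's):
        m_{k+1} = beta * m_k + (1 - beta) * g_k
        x_{k+1} = x_k - alpha_k * m_{k+1}
    where g_k is a noisy gradient and alpha_k = alpha0 / (k + 1) ** 0.75.
    """
    rng = np.random.default_rng(seed)
    x = np.array(x0, dtype=float)
    m = np.zeros_like(x)
    for k in range(steps):
        # Stochastic gradient: true gradient plus zero-mean Gaussian noise.
        g = grad(x) + noise_std * rng.standard_normal(x.shape)
        # Diminishing step size (assumed schedule).
        alpha_k = alpha0 / (k + 1) ** 0.75
        # Momentum buffer, then parameter update.
        m = beta * m + (1.0 - beta) * g
        x = x - alpha_k * m
    return x


if __name__ == "__main__":
    x_final = sgdm(toy_grad, x0=[2.0])
    print("final iterate:", x_final)
```

In this toy run the iterates settle near one of the critical points of the objective rather than merely driving the gradient norm to zero along a subsequence, which is the kind of iterate convergence the abstract refers to.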
