Continuous-Time Analysis of Adaptive Optimization and Normalization

Paper and Code

Nov 08, 2024

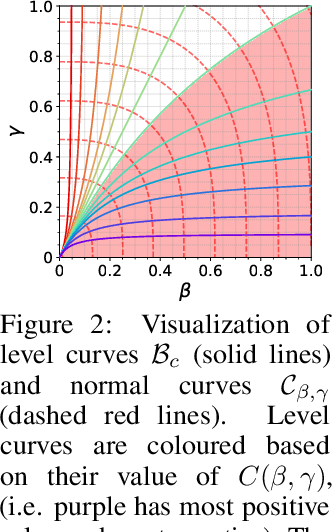

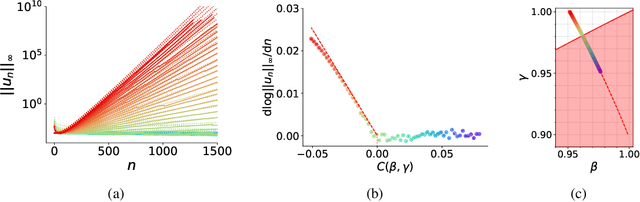

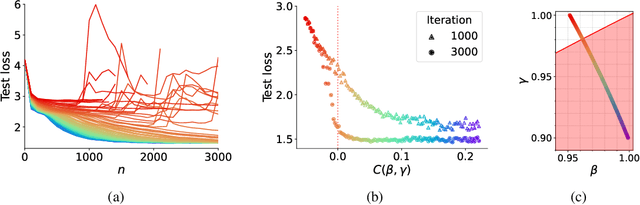

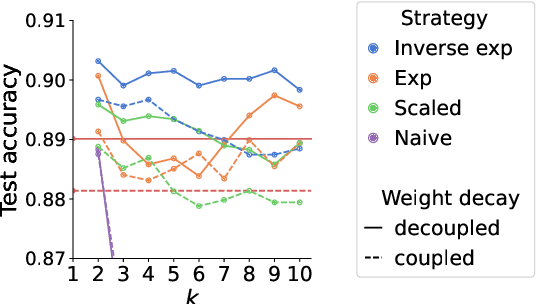

Adaptive optimization algorithms, particularly Adam and its variant AdamW, are fundamental components of modern deep learning. However, their training dynamics lack comprehensive theoretical understanding, with limited insight into why common practices - such as specific hyperparameter choices and normalization layers - contribute to successful generalization. This work presents a continuous-time formulation of Adam and AdamW, facilitating a tractable analysis of training dynamics that can shed light on such practical questions. We theoretically derive a stable region for Adam's hyperparameters $(\beta, \gamma)$ that ensures bounded updates, empirically verifying these predictions by observing unstable exponential growth of parameter updates outside this region. Furthermore, we theoretically justify the success of normalization layers by uncovering an implicit meta-adaptive effect of scale-invariant architectural components. This insight leads to an explicit optimizer, $2$-Adam, which we generalize to $k$-Adam - an optimizer that applies an adaptive normalization procedure $k$ times, encompassing Adam (corresponding to $k=1$) and Adam with a normalization layer (corresponding to $k=2$). Overall, our continuous-time formulation of Adam facilitates a principled analysis, offering deeper understanding of optimal hyperparameter choices and architectural decisions in modern deep learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge