Continual Prototype Evolution: Learning Online from Non-Stationary Data Streams


Sep 02, 2020

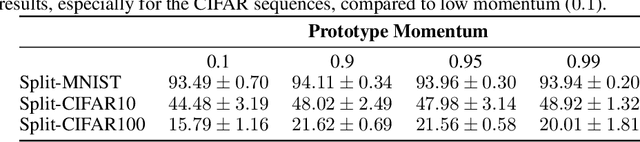

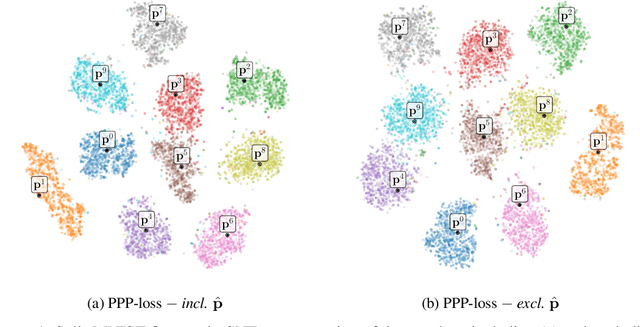

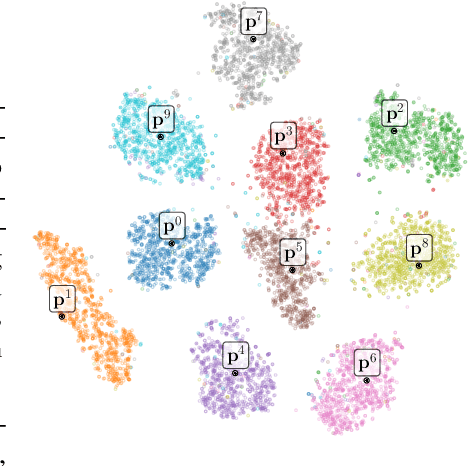

Because learning from non-stationary data streams has proven a challenging endeavour, current continual learners often strongly relax the problem: they assume balanced datasets, unlimited processing of data stream subsets, and the availability of additional task information, sometimes even during inference. In contrast, our continual learner processes data streams in an online fashion, without additional task information, and shows solid robustness to imbalanced data streams resembling a real-world setting. We address these challenging settings by combining prototypes with nearest-neighbour classification in a shared latent space, where Continual Prototype Evolution (CoPE) enables learning and prediction at any point in time. As the embedding network continually changes, prototypes inevitably become obsolete, which we prevent by replaying exemplars from memory. We obtain state-of-the-art performance by a significant margin on five benchmarks, including two highly imbalanced data streams.
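To make the idea of prototypes with nearest-neighbour classification in a latent space more concrete, the following is a minimal sketch, not the CoPE implementation. It assumes that embeddings are already produced by some network, that prototypes are kept as unit-norm vectors, and that they are refreshed with a simple momentum average; the class name `PrototypeClassifier`, the `momentum` parameter, and the update rule itself are illustrative assumptions rather than details given in the abstract. Exemplar replay is omitted.

```python
import numpy as np


class PrototypeClassifier:
    """Nearest-prototype classifier over L2-normalised embeddings (illustrative sketch)."""

    def __init__(self, momentum=0.9):
        # Hypothetical hyperparameter: weight given to the previous prototype.
        self.momentum = momentum
        # Maps class label -> unit-norm prototype vector.
        self.prototypes = {}

    @staticmethod
    def _normalize(v):
        norm = np.linalg.norm(v, axis=-1, keepdims=True)
        return v / np.maximum(norm, 1e-12)

    def update(self, embeddings, labels):
        """Move each class prototype towards the mean embedding of the current batch."""
        embeddings = self._normalize(np.asarray(embeddings, dtype=float))
        labels = np.asarray(labels)
        for c in np.unique(labels).tolist():
            batch_mean = embeddings[labels == c].mean(axis=0)
            if c in self.prototypes:
                # Assumed momentum update; the abstract does not specify the rule.
                proto = self.momentum * self.prototypes[c] + (1.0 - self.momentum) * batch_mean
            else:
                # First time this class appears in the stream.
                proto = batch_mean
            self.prototypes[c] = self._normalize(proto)

    def predict(self, embeddings):
        """Assign each embedding to the class of its most similar prototype (cosine similarity)."""
        if not self.prototypes:
            raise ValueError("no prototypes available yet")
        embeddings = self._normalize(np.asarray(embeddings, dtype=float))
        classes = list(self.prototypes)
        protos = np.stack([self.prototypes[c] for c in classes])
        sims = embeddings @ protos.T
        return [classes[i] for i in sims.argmax(axis=1)]


# Usage: classes arrive at different points in the stream, and prediction
# is possible after any update.
clf = PrototypeClassifier(momentum=0.9)
clf.update([[1.0, 0.1], [0.9, 0.0]], [0, 0])
clf.update([[0.0, 1.0]], [1])
predictions = clf.predict([[1.0, 0.2], [0.1, 0.8]])
```

Because each class keeps its own prototype regardless of how many samples it has contributed, a classifier of this kind does not depend on class frequencies in the way a jointly trained softmax layer does, which is one plausible reading of why a prototype-based approach suits imbalanced streams.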
