Continual Learning with Differential Privacy

Paper and Code

Oct 11, 2021

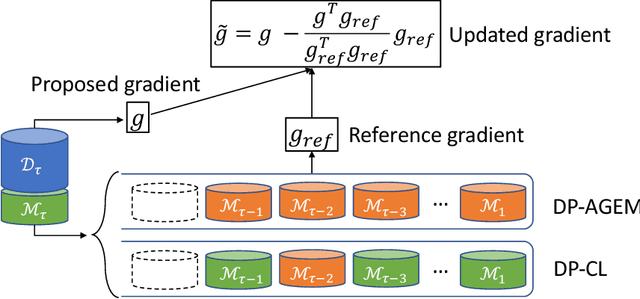

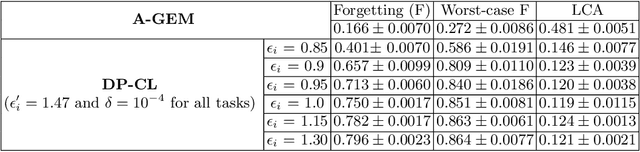

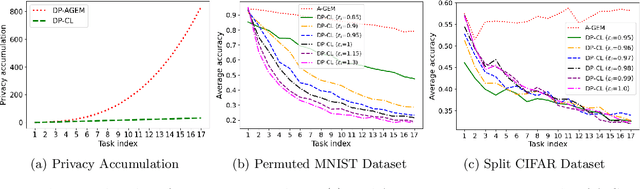

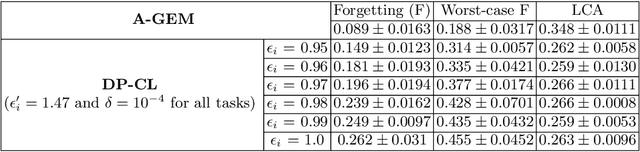

In this paper, we focus on preserving differential privacy (DP) in continual learning (CL), in which we train ML models to learn a sequence of new tasks while memorizing previous tasks. We first introduce a notion of continual adjacent databases to bound the sensitivity of any data record participating in the training process of CL. Based upon that, we develop a new DP-preserving algorithm for CL with a data sampling strategy to quantify the privacy risk of training data in the well-known Averaged Gradient Episodic Memory (A-GEM) approach by applying a moments accountant. Our algorithm provides formal guarantees of privacy for data records across tasks in CL. Preliminary theoretical analysis and evaluations show that our mechanism tightens the privacy loss while maintaining a promising model utility.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge