Contextual Skipgram: Training Word Representation Using Context Information

Paper and Code

Feb 17, 2021

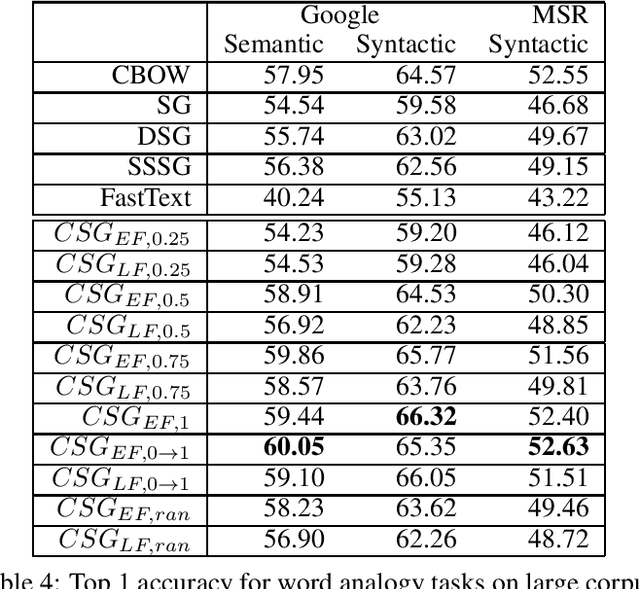

The skip-gram (SG) model learns word representation by predicting the words surrounding a center word from unstructured text data. However, not all words in the context window contribute to the meaning of the center word. For example, less relevant words could be in the context window, hindering the SG model from learning a better quality representation. In this paper, we propose an enhanced version of the SG that leverages context information to produce word representation. The proposed model, Contextual Skip-gram, is designed to predict contextual words with both the center words and the context information. This simple idea helps to reduce the impact of irrelevant words on the training process, thus enhancing the final performance

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge