Constrained Ensemble Langevin Monte Carlo


Feb 08, 2021

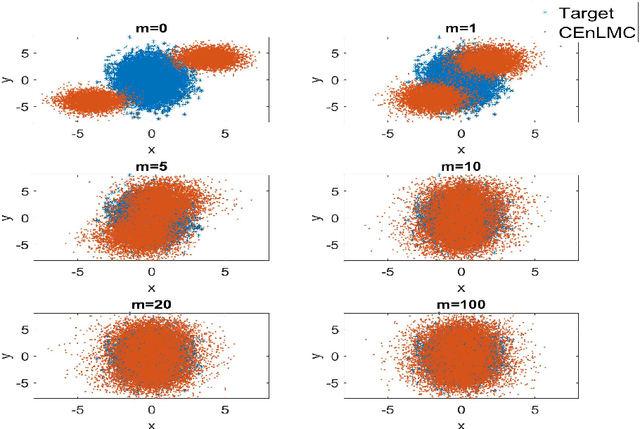

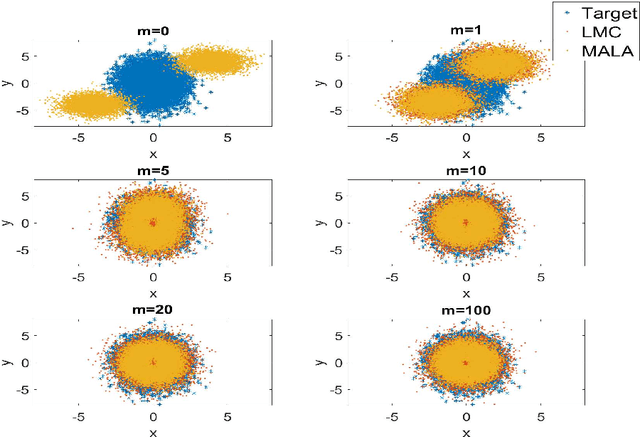

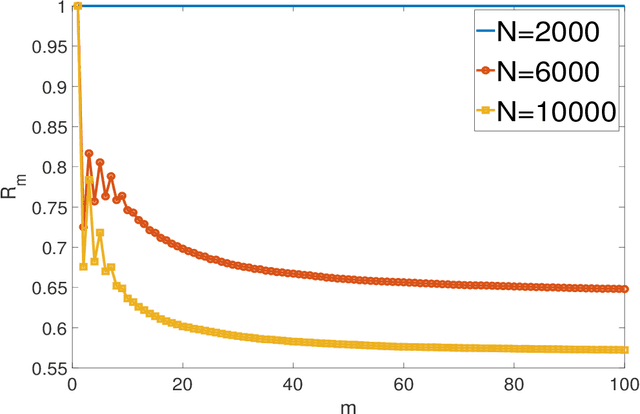

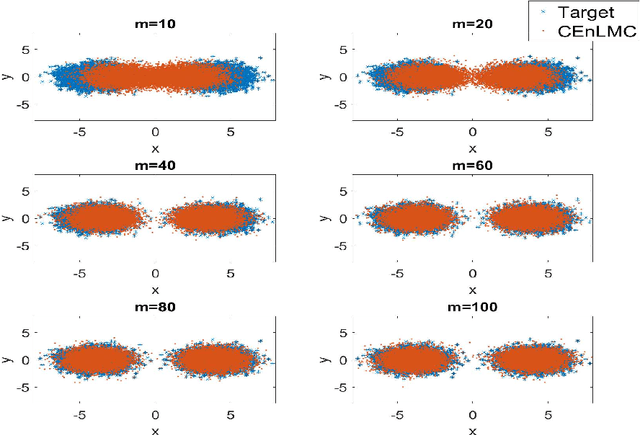

The classical Langevin Monte Carlo (LMC) method looks for i.i.d. samples from a target distribution by descending along the gradient of the target distribution. It is popular partially due to its fast convergence rate. However, the numerical cost is sometimes high because the gradient can be hard to obtain. One approach to eliminating the gradient computation is to employ the concept of an "ensemble", where a large number of particles are evolved together so that neighboring particles provide gradient information to each other. In this article, we discuss two algorithms that integrate the ensemble feature into LMC, and their associated properties. Our findings are twofold: 1. By directly replacing the gradient with its ensemble approximation, we develop Ensemble Langevin Monte Carlo. We show that this method is unstable because a potentially small denominator induces high variance, and we provide a counterexample that exhibits this instability explicitly. 2. We then change the strategy and apply the ensemble approximation to the gradient only in a constrained manner, so as to eliminate the unstable points. The resulting algorithm is termed Constrained Ensemble Langevin Monte Carlo. We show that, with proper tuning, the surrogate is used often enough to bring a reasonable numerical saving, while the induced error is still low enough to maintain the fast convergence rate, up to a controllable discretization and ensemble error. Such a combination of the ensemble method and LMC sheds light on inventing gradient-free algorithms that produce i.i.d. samples almost exponentially fast.
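To make the idea of a constrained ensemble surrogate concrete, here is a minimal one-dimensional sketch in Python. It is an illustration under stated assumptions, not the algorithm from the paper: the abstract does not give the exact ensemble approximation or the constraint, so the secant-style surrogate, the random pairing of particles, the thresholds `lo` and `hi`, and the standard Gaussian target are all hypothetical choices made here. The sketch only shows the general pattern: a difference quotient between two particles replaces the gradient when their distance is neither too small (small denominator, high variance) nor too large (poor approximation), and the true gradient is used otherwise.

```python
import numpy as np


def f(x):
    # Potential (negative log-density up to a constant) of a standard
    # Gaussian target. Illustrative assumption, not taken from the paper.
    return 0.5 * x**2


def grad_f(x):
    # Exact gradient of f, used as the fallback when the constraint fails.
    return x


def constrained_surrogate(x, rng, lo=0.05, hi=0.5):
    """Hypothetical constrained surrogate for a 1-D ensemble x.

    Each particle is paired with a randomly chosen partner. The secant
    slope (f(partner) - f(x)) / (partner - x) is used only when the
    distance lies in [lo, hi]; otherwise the exact gradient is kept.
    """
    partner = x[rng.permutation(x.size)]
    d = partner - x
    usable = (np.abs(d) >= lo) & (np.abs(d) <= hi)
    # Replace unusable denominators by 1.0 so the division is always safe;
    # those entries are discarded by np.where below.
    safe_d = np.where(usable, d, 1.0)
    secant = (f(partner) - f(x)) / safe_d
    # For brevity grad_f is evaluated for every particle here; a
    # cost-saving implementation would evaluate it only where
    # `usable` is False.
    g = np.where(usable, secant, grad_f(x))
    return g, float(usable.mean())


def run(n_particles=2000, n_steps=300, h=0.1, seed=0):
    # Standard LMC update x <- x - h * g + sqrt(2h) * noise, with the
    # gradient g supplied by the constrained surrogate above.
    rng = np.random.default_rng(seed)
    x = rng.normal(3.0, 1.0, size=n_particles)  # start away from the target
    fractions = []
    for _ in range(n_steps):
        g, used = constrained_surrogate(x, rng)
        x = x - h * g + np.sqrt(2.0 * h) * rng.standard_normal(n_particles)
        fractions.append(used)
    return x, float(np.mean(fractions))


if __name__ == "__main__":
    samples, frac = run()
    print("sample mean:", samples.mean())
    print("sample variance:", samples.var())
    print("average fraction of surrogate gradients:", frac)
```

In this sketch, tightening the window `[lo, hi]` lowers the surrogate error but also lowers the fraction of steps that avoid a gradient evaluation, which is the tuning trade-off the abstract describes. Setting `lo = 0` removes the lower constraint and allows arbitrarily small denominators.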
