Conservative Optimistic Policy Optimization via Multiple Importance Sampling

Mar 04, 2021

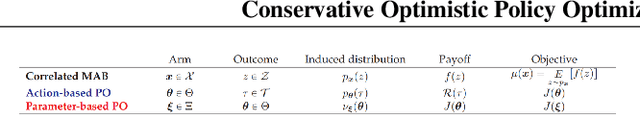

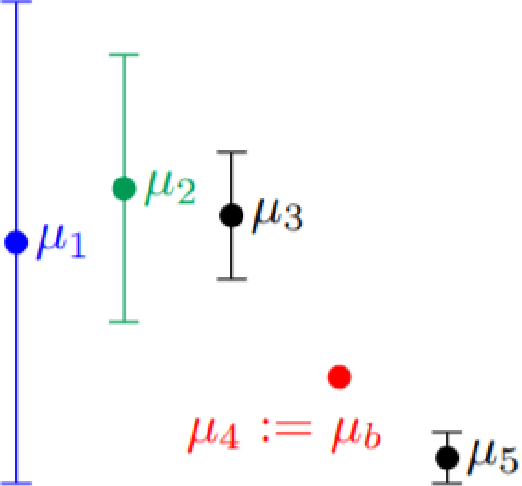

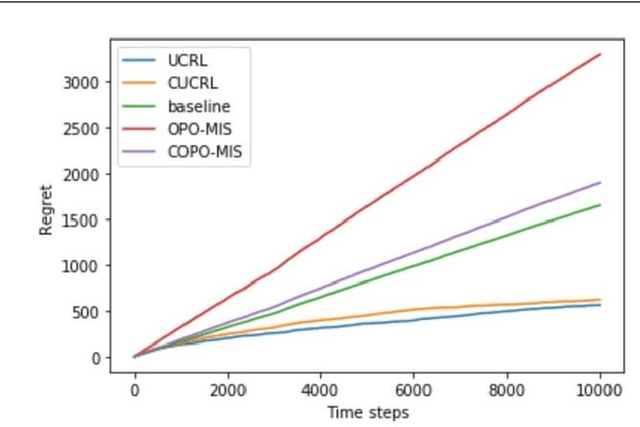

Reinforcement Learning (RL) has solved hard problems, such as playing Atari games and mastering the game of Go, with a unified approach. Yet modern deep RL approaches are still not widely used in real-world applications. One reason could be the lack of guarantees on the performance of the intermediate policies executed during learning, relative to an existing (already working) baseline policy. In this paper, we propose an online model-free algorithm that solves the conservative exploration problem in policy optimization. We show that the regret of the proposed approach is bounded by $\tilde{\mathcal{O}}(\sqrt{T})$ for both discrete and continuous parameter spaces.
