Confederated Learning: Federated Learning with Decentralized Edge Servers

Paper and Code

May 30, 2022

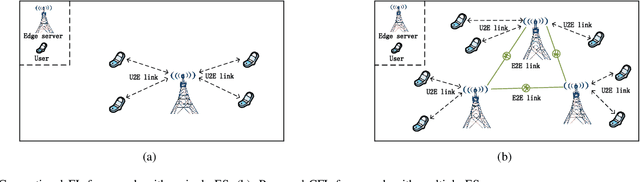

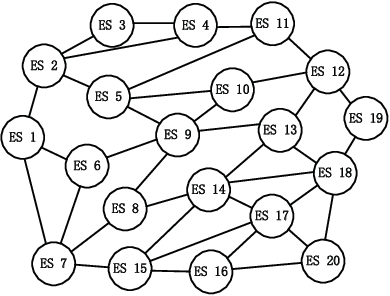

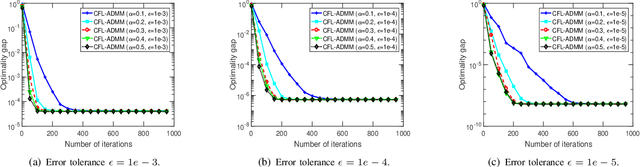

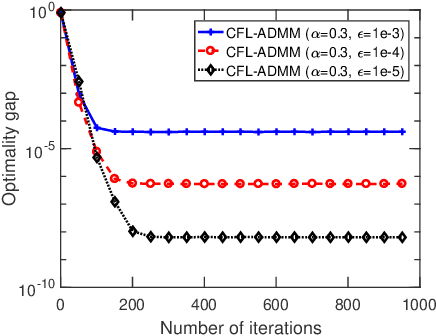

Federated learning (FL) is an emerging machine learning paradigm that trains a model without aggregating data at a central server. Most studies on FL consider a centralized framework, in which a single server has central authority to coordinate a number of devices that perform model training iteratively. Because of stringent communication and bandwidth constraints, such a centralized framework scales poorly as the number of devices grows. To address this issue, we propose a ConFederated Learning (CFL) framework. CFL consists of multiple servers, each connected to its own set of devices as in the conventional FL framework, and the servers collaborate in a decentralized manner to make full use of the data dispersed throughout the network. We develop an alternating direction method of multipliers (ADMM) algorithm for CFL. The algorithm employs a random scheduling policy that selects a random subset of devices to access their respective servers at each iteration, which reduces the amount of information uploaded from devices to servers. Theoretical analysis is presented to justify the proposed method. Numerical results show that the proposed method converges to a decent solution significantly faster than gradient-based FL algorithms, giving it a substantial advantage in communication efficiency.
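The random scheduling policy described in the abstract can be illustrated with a minimal sketch. It covers only the device-selection step, not the ADMM updates or the server-to-server collaboration. The function name `schedule_devices`, the `fraction` parameter, and the server and device labels are hypothetical and chosen for illustration; the abstract does not specify how the subset size is determined.

```python
import random


def schedule_devices(server_devices, fraction, rng):
    """Pick a random subset of each server's devices for one iteration.

    server_devices: dict mapping a server name to its list of device names.
    fraction: assumed share of devices allowed to upload per iteration.
    rng: a random.Random instance, passed in for reproducibility.
    """
    selected = {}
    for server, devices in server_devices.items():
        # Assumption: at least one device per server is scheduled.
        k = max(1, round(fraction * len(devices)))
        selected[server] = sorted(rng.sample(devices, k))
    return selected


# Hypothetical topology: two servers, each with its own set of devices.
server_devices = {
    "server_a": ["a0", "a1", "a2", "a3"],
    "server_b": ["b0", "b1", "b2", "b3", "b4", "b5"],
}

rng = random.Random(0)
for iteration in range(3):
    # Only the scheduled devices would upload to their server this round.
    print(iteration, schedule_devices(server_devices, 0.5, rng))
```

With `fraction=0.5`, each server hears from half of its devices per iteration, which is the sense in which scheduling reduces device-to-server uploads.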
