Concept Bottleneck Model with Additional Unsupervised Concepts


Feb 03, 2022

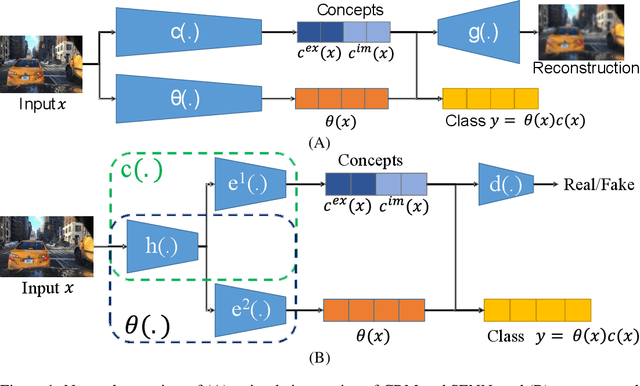

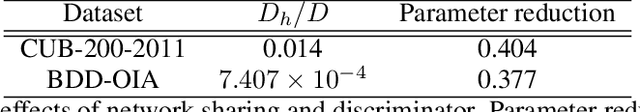

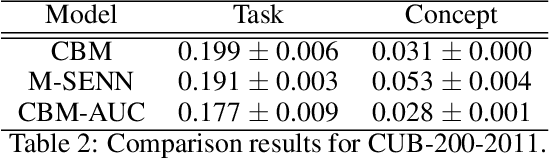

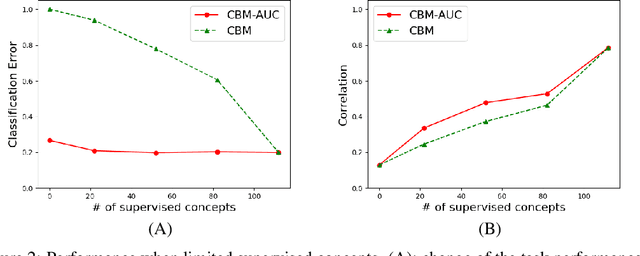

With increasing demand for accountability, interpretability is becoming an essential capability for real-world AI applications. However, most methods rely on post-hoc explanation rather than training a model that is interpretable by design. In this article, we propose a novel interpretable model based on the concept bottleneck model (CBM). A CBM uses concept labels to train an intermediate layer as an additional visible layer. However, because the number of concept labels restricts the dimension of this layer, it is difficult to obtain high accuracy with a small number of labels. To address this issue, we integrate supervised concepts with unsupervised ones trained with self-explaining neural networks (SENNs). By seamlessly training these two types of concepts while reducing the amount of computation, we can obtain both supervised and unsupervised concepts simultaneously, even for large images. We refer to the proposed model as the concept bottleneck model with additional unsupervised concepts (CBM-AUC). We experimentally confirmed that the proposed model outperformed CBM and SENN. We also visualized the saliency map of each concept and confirmed that it was consistent with the concept's semantic meaning.
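The idea of combining supervised and unsupervised concepts in one bottleneck can be illustrated with a minimal forward-pass sketch. This is not the authors' implementation: the class name `ConceptModelSketch`, the single linear layers standing in for a real image encoder, the SENN-style aggregation of concepts by input-dependent relevance weights, and the `concept_weight` balance term are all assumptions made for illustration. The only point it tries to show is that the concept loss touches just the supervised slice of the concept vector, while the remaining concepts are shaped by the task loss alone.

```python
import math
import random


def linear(layer, x):
    """Affine map: one output per (weight row, bias) pair."""
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def init_layer(rng, n_out, n_in):
    """Small random weights and zero biases (hypothetical initialisation)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


class ConceptModelSketch:
    """Toy model: supervised and unsupervised concepts share one bottleneck."""

    def __init__(self, n_features, n_supervised, n_unsupervised, n_classes, seed=0):
        rng = random.Random(seed)
        self.n_supervised = n_supervised
        self.n_concepts = n_supervised + n_unsupervised
        self.n_classes = n_classes
        # One head emits every concept; only the first n_supervised get labels.
        self.concept_layer = init_layer(rng, self.n_concepts, n_features)
        # Assumed SENN-style head: one relevance weight per (class, concept).
        self.relevance_layer = init_layer(
            rng, n_classes * self.n_concepts, n_features)

    def forward(self, x):
        concepts = [1.0 / (1.0 + math.exp(-z))
                    for z in linear(self.concept_layer, x)]
        flat = linear(self.relevance_layer, x)
        k = self.n_concepts
        relevance = [flat[c * k:(c + 1) * k] for c in range(self.n_classes)]
        # Each class logit is a relevance-weighted sum over all concepts.
        logits = [sum(t * h for t, h in zip(row, concepts)) for row in relevance]
        return concepts, relevance, logits

    def loss(self, x, class_label, concept_labels, concept_weight=1.0):
        concepts, _, logits = self.forward(x)
        # Task loss: softmax cross-entropy, computed stably via the max logit.
        peak = max(logits)
        log_norm = peak + math.log(sum(math.exp(z - peak) for z in logits))
        task = log_norm - logits[class_label]
        # Concept loss: binary cross-entropy on the supervised slice only.
        supervised = concepts[:self.n_supervised]
        bce = -sum(t * math.log(p) + (1.0 - t) * math.log(1.0 - p)
                   for p, t in zip(supervised, concept_labels)) / self.n_supervised
        return task + concept_weight * bce


model = ConceptModelSketch(n_features=4, n_supervised=2, n_unsupervised=3, n_classes=3)
x = [0.2, -0.1, 0.7, 0.4]
concepts, relevance, logits = model.forward(x)
total = model.loss(x, class_label=1, concept_labels=[1.0, 0.0])
task_only = model.loss(x, class_label=1, concept_labels=[1.0, 0.0], concept_weight=0.0)
```

Because each logit is an explicit weighted sum over concepts, the contribution of any single concept to a prediction can be read off as `relevance[c][i] * concepts[i]`. A real model would replace the linear layers with a convolutional encoder and would likely add further regularisers.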
