Computationally Efficient Approaches for Image Style Transfer

Jul 16, 2018

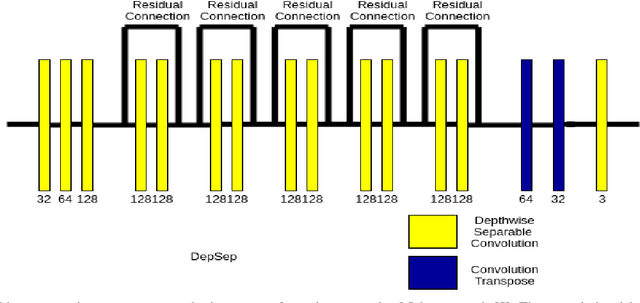

In this work, we investigate several style transfer approaches and (i) examine how the stylized reconstruction changes with the choice of loss function and (ii) propose a computationally efficient alternative. We use depth-wise separable convolution in place of standard convolution and nearest-neighbor interpolation in place of transposed convolution. We further replace transposed convolution with multiple interpolations. The results are perceptually similar in quality while being considerably cheaper to compute. The reduction in the computational complexity of our architecture is validated by decreases in testing time of 26.1%, 39.1%, and 57.1%, respectively.
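To make the two substitutions concrete, the following is a minimal sketch in plain Python, not the architecture from the paper. It compares the weight count of a standard convolution with that of a depth-wise separable one, and shows nearest-neighbor upsampling on a tiny grid. The function names and the layer size (128 input channels, 128 output channels, 3 x 3 kernel) are assumptions chosen for illustration only.

```python
def conv_params(c_in, c_out, k):
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(c_in, c_out, k):
    """Weights in a depth-wise k x k convolution (one filter per input
    channel) followed by a 1 x 1 point-wise convolution (bias ignored)."""
    return k * k * c_in + c_in * c_out


def nearest_upsample(image, scale):
    """Upsample a 2-D list by an integer factor with nearest-neighbor
    interpolation: each pixel is repeated `scale` times along both axes.
    Unlike transposed convolution, this step has no learned weights."""
    out = []
    for row in image:
        wide = [value for value in row for _ in range(scale)]
        out.extend(list(wide) for _ in range(scale))
    return out


# Hypothetical layer size, not taken from the paper.
standard = conv_params(128, 128, 3)
separable = depthwise_separable_params(128, 128, 3)
upsampled = nearest_upsample([[1, 2], [3, 4]], 2)

print(standard, separable)
for row in upsampled:
    print(row)
```

For this assumed layer, the separable form needs 17,536 weights against 147,456 for the standard form, roughly an 8x reduction. How much of that saving shows up as lower testing time depends on the full network and the hardware.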
