Computational complexity of Inexact Proximal Point Algorithm for Convex Optimization under Holderian Growth

Paper and Code

Aug 12, 2021

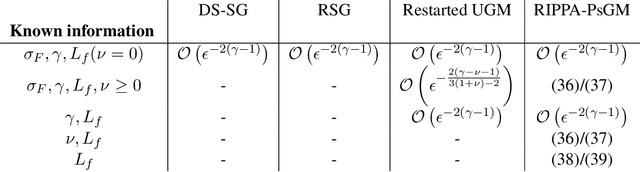

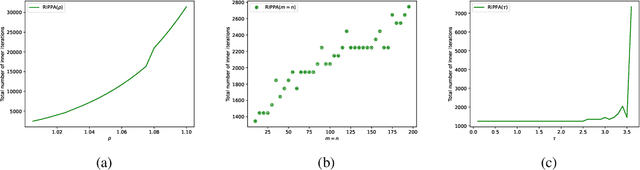

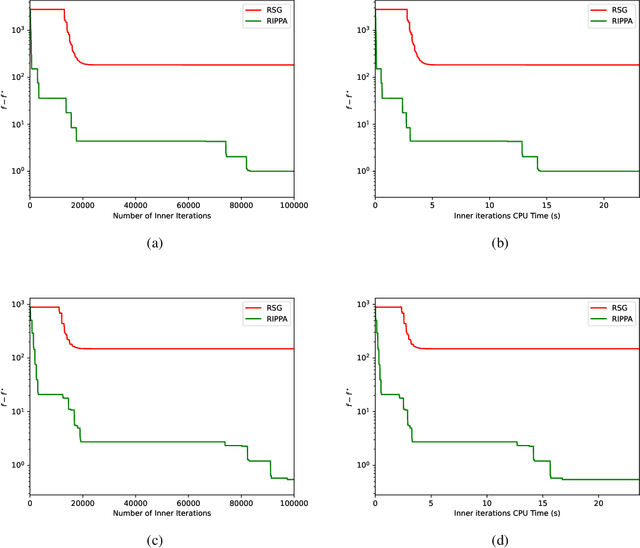

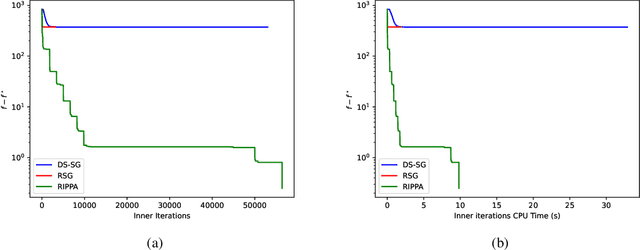

\noindent Several decades ago the Proximal Point Algorithm (PPA) stated to gain a long-lasting attraction for both abstract operator theory and numerical optimization communities. Even in modern applications, researchers still use proximal minimization theory to design scalable algorithms that overcome nonsmoothness. Remarkable works as \cite{Fer:91,Ber:82constrained,Ber:89parallel,Tom:11} established tight relations between the convergence behaviour of PPA and the regularity of the objective function. In this manuscript we derive nonasymptotic iteration complexity of exact and inexact PPA to minimize convex functions under $\gamma-$Holderian growth: $\BigO{\log(1/\epsilon)}$ (for $\gamma \in [1,2]$) and $\BigO{1/\epsilon^{\gamma - 2}}$ (for $\gamma > 2$). In particular, we recover well-known results on PPA: finite convergence for sharp minima and linear convergence for quadratic growth, even under presence of inexactness. However, without taking into account the concrete computational effort paid for computing each PPA iteration, any iteration complexity remains abstract and purely informative. Therefore, using an inner (proximal) gradient/subgradient method subroutine that computes inexact PPA iteration, we secondly show novel computational complexity bounds on a restarted inexact PPA, available when no information on the growth of the objective function is known. In the numerical experiments we confirm the practical performance and implementability of our framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge