Composite Kernel Local Angular Discriminant Analysis for Multi-Sensor Geospatial Image Analysis


Jul 18, 2016

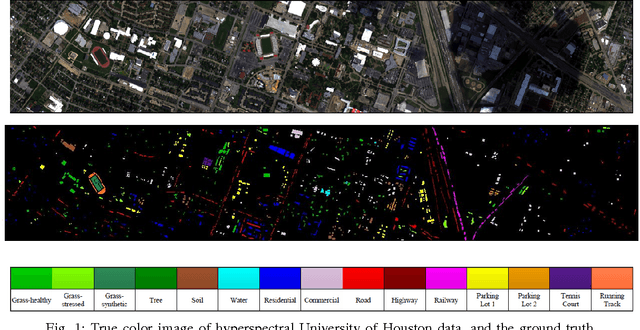

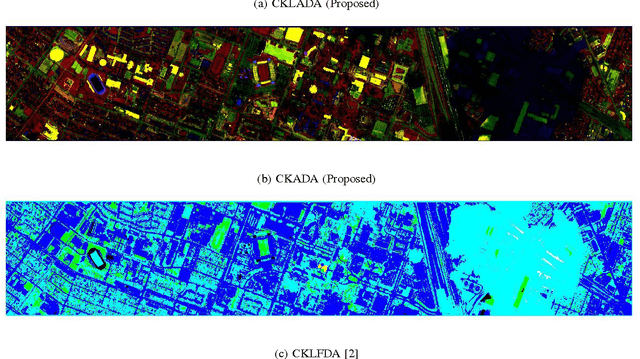

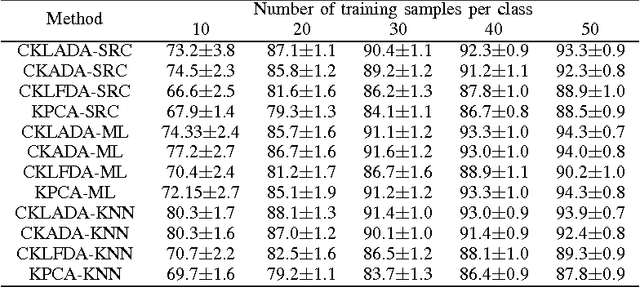

With the emergence of passive and active optical sensors for geospatial imaging, information fusion across sensors is becoming increasingly important. A key aspect of single- (or multi-) sensor geospatial image analysis is feature extraction: the process of finding "optimal" lower-dimensional subspaces that adequately characterize class-specific information for subsequent analysis tasks such as classification, change detection, and anomaly detection. In recent work, we proposed and developed an angle-based discriminant analysis approach that projects data onto subspaces with maximal "angular" separability, both in the input (raw) feature space and in a Reproducing Kernel Hilbert Space (RKHS). We also developed an angular locality-preserving variant of this algorithm. In this letter, we extend this work to information fusion: we propose and validate a composite kernel local angular discriminant analysis projection that can operate on an ensemble of feature sources (e.g., features from different sensors) and, through composite kernels, project the data onto a unified space in which they are maximally separated in an angular sense. We validate this method on the multi-sensor University of Houston hyperspectral and LiDAR dataset and demonstrate that the proposed method significantly outperforms other composite kernel approaches to sensor (information) fusion.
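The abstract does not specify how the composite kernel is built. One common construction in the composite-kernel literature is a non-negatively weighted sum of per-source base kernels. In the resulting RKHS, an "angular" comparison between two samples is the cosine of the angle between their mapped images, k(x, y) / sqrt(k(x, x) k(y, y)). The sketch below illustrates only these two ingredients, under those assumptions. It is not the authors' implementation and omits the local angular discriminant projection itself. The base-kernel choices (RBF for hyperspectral bands, degree-2 polynomial for LiDAR-derived features), the weights, the toy pixel values, and all function names are hypothetical.

```python
import math
from typing import Callable, Sequence

Vector = Sequence[float]
Kernel = Callable[[Vector, Vector], float]


def rbf_kernel(gamma: float) -> Kernel:
    """Gaussian RBF kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    def k(x: Vector, y: Vector) -> float:
        sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
        return math.exp(-gamma * sq_dist)
    return k


def poly_kernel(degree: int, coef0: float = 1.0) -> Kernel:
    """Polynomial kernel k(x, y) = (x . y + coef0) ** degree."""
    def k(x: Vector, y: Vector) -> float:
        return (sum(a * b for a, b in zip(x, y)) + coef0) ** degree
    return k


def composite_kernel(kernels: Sequence[Kernel], weights: Sequence[float]):
    """Hypothetical weighted-sum composite kernel over M feature sources.

    Each sample is a tuple (x_1, ..., x_M), one feature vector per source;
    source m is compared only with source m, through its own base kernel.
    Non-negative weights keep the sum a valid (positive semidefinite) kernel.
    """
    if len(kernels) != len(weights) or any(w < 0 for w in weights):
        raise ValueError("need one non-negative weight per base kernel")

    def k(xs, ys) -> float:
        return sum(w * km(xm, ym)
                   for km, w, xm, ym in zip(kernels, weights, xs, ys))
    return k


def rkhs_cosine(k, x, y) -> float:
    """Cosine of the angle between phi(x) and phi(y) in the kernel's RKHS."""
    return k(x, y) / math.sqrt(k(x, x) * k(y, y))


def gram_matrix(k, samples):
    """Kernel (Gram) matrix with K[i][j] = k(samples[i], samples[j])."""
    return [[k(a, b) for b in samples] for a in samples]


# Hypothetical toy pixels: (hyperspectral reflectances, normalized LiDAR elevation).
pixels = [
    ([0.20, 0.35, 0.50], [0.80]),
    ([0.22, 0.33, 0.52], [0.75]),  # spectrally and structurally close to pixel 0
    ([0.60, 0.40, 0.10], [0.10]),  # different material and height
]
k = composite_kernel([rbf_kernel(gamma=5.0), poly_kernel(degree=2)],
                     weights=[0.7, 0.3])
K = gram_matrix(k, pixels)
cos01 = rkhs_cosine(k, pixels[0], pixels[1])
cos02 = rkhs_cosine(k, pixels[0], pixels[2])
```

With only RBF base kernels and weights summing to 1, every k(x, x) equals 1, so the cosine would reduce to k(x, y) itself. The polynomial base kernel is included here so that the angular normalization actually changes the values.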
