Complexity Analysis and Efficient Measurement Selection Primitives for High-Rate Graph SLAM


Mar 02, 2018

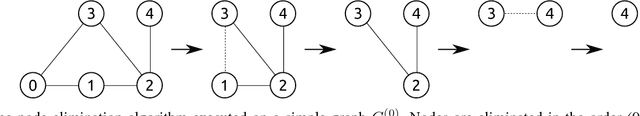

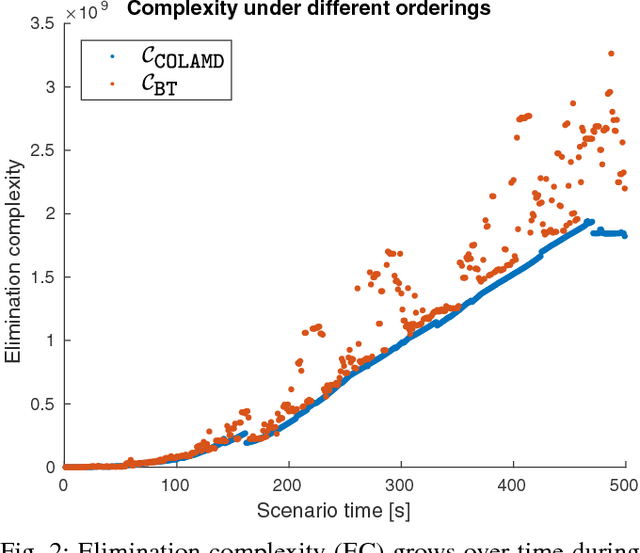

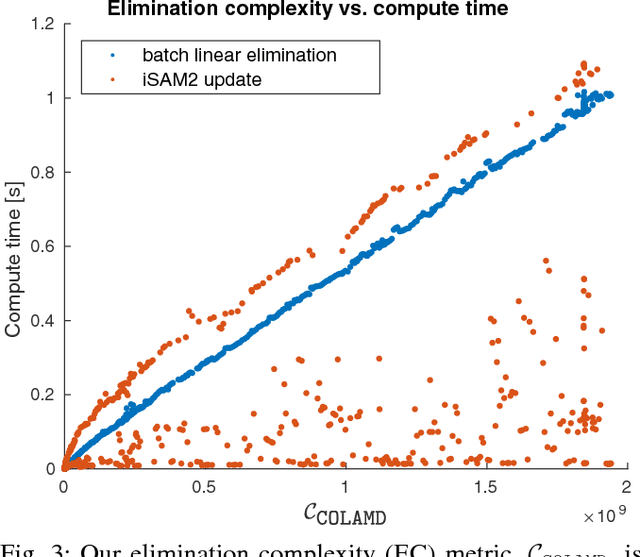

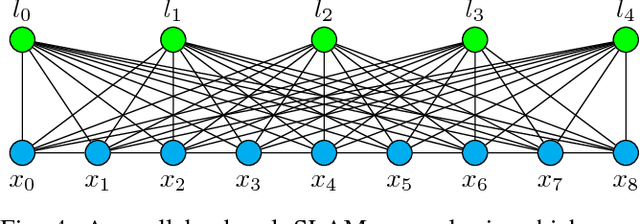

Sparsity has been widely recognized as crucial for efficient optimization in graph-based SLAM. Because the sparsity and structure of the SLAM graph reflect the set of incorporated measurements, many methods for sparsification have been proposed in hopes of reducing computation. These methods often focus narrowly on reducing edge count without regard for structure at a global level. Such structurally naive techniques can fail to produce significant computational savings, even after aggressive pruning. In contrast, simple heuristics such as measurement decimation and keyframing are known empirically to produce significant computation reductions. To demonstrate why, we propose a quantitative metric called elimination complexity (EC) that bridges the existing analytic gap between graph structure and computation. EC quantifies the complexity of the primary computational bottleneck: the factorization step of a Gauss-Newton iteration. Using this metric, we show rigorously that decimation and keyframing impose favorable global structures and therefore achieve computation reductions on the order of $r^2/9$ and $r^3$, respectively, where $r$ is the pruning rate. We additionally present numerical results showing that EC provides a good approximation of computation in both batch and incremental (iSAM2) optimization, and demonstrate that pruning methods promoting globally efficient structure outperform those that do not.
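To make the link between graph structure and factorization cost concrete, the following toy sketch may help. It is not the paper's EC definition, which the abstract does not spell out. As a stand-in, it sums the squared number of remaining neighbors of each variable at the moment it is eliminated, using a greedy minimum-degree ordering. The graph layout (a pose chain in which poses observe a shared set of landmarks), the readings of decimation (only every `r`-th pose keeps its landmark measurements) and keyframing (only every `r`-th pose is kept), and all function names are assumptions made for illustration. The toy is not expected to reproduce the $r^2/9$ and $r^3$ rates.

```python
def greedy_elimination_cost(neighbors):
    """Illustrative proxy (an assumption, not the paper's EC): eliminate
    variables in greedy minimum-degree order and sum the squared number
    of remaining neighbors of each variable when it is eliminated."""
    adj = {v: set(ns) for v, ns in neighbors.items()}
    cost = 0
    while adj:
        # Pick the variable with the fewest remaining neighbors (ties by key).
        v = min(adj, key=lambda u: (len(adj[u]), u))
        nbrs = adj.pop(v)
        cost += len(nbrs) ** 2
        for u in nbrs:
            adj[u].discard(v)
            adj[u] |= nbrs - {u}  # fill-in: remaining neighbors form a clique
    return cost


def build_graph(num_poses, num_landmarks, observing_poses):
    """Hypothetical SLAM graph: odometry chain plus pose-landmark edges."""
    adj = {("x", i): set() for i in range(num_poses)}
    adj.update({("l", j): set() for j in range(num_landmarks)})

    def add_edge(a, b):
        adj[a].add(b)
        adj[b].add(a)

    for i in range(num_poses - 1):
        add_edge(("x", i), ("x", i + 1))
    for i in observing_poses:
        for j in range(num_landmarks):
            add_edge(("x", i), ("l", j))
    return adj


n, m, r = 60, 5, 3  # poses, landmarks, pruning rate (arbitrary toy values)

full = build_graph(n, m, range(n))            # every pose observes every landmark
decimated = build_graph(n, m, range(0, n, r))  # all poses kept, 1/r of them observe
keyframed = build_graph(n // r, m, range(n // r))  # only every r-th pose kept

full_cost = greedy_elimination_cost(full)
decimated_cost = greedy_elimination_cost(decimated)
keyframed_cost = greedy_elimination_cost(keyframed)

print("full:", full_cost)
print("decimated:", decimated_cost)
print("keyframed:", keyframed_cost)
```

In this toy, both pruning schemes lower the proxy cost because poses that carry no landmark measurements can be eliminated cheaply before the densely connected ones. The outcome depends on the elimination ordering as well as on the number of edges removed, which is the kind of structural effect the abstract attributes to EC.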
