Competition over data: how does data purchase affect users?


Jan 26, 2022

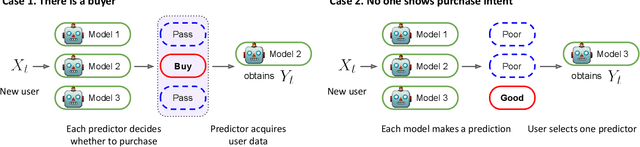

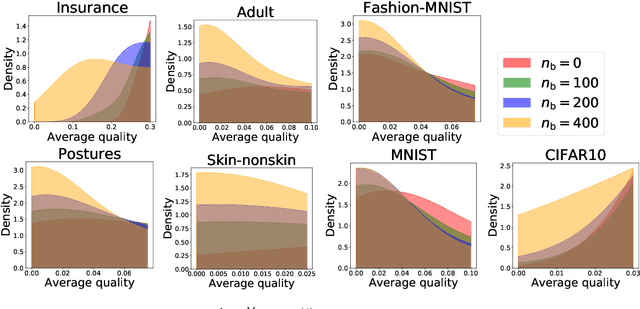

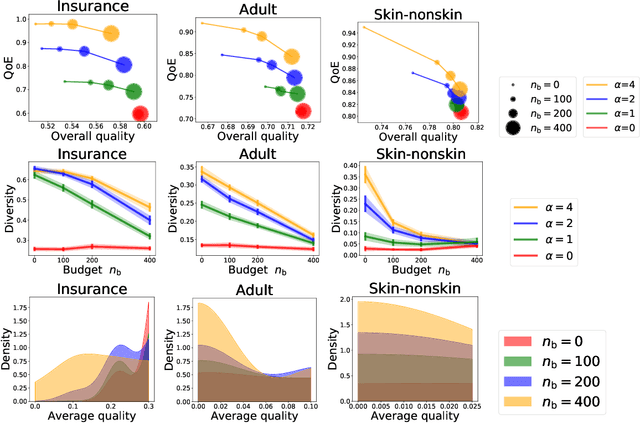

As machine learning (ML) is deployed by many competing service providers, the underlying ML predictors also compete against each other, and it is increasingly important to understand the impacts and biases that arise from such competition. In this paper, we study what happens when competing predictors can acquire additional labeled data to improve their prediction quality. We introduce a new environment that allows ML predictors to use active learning algorithms to purchase labeled data within their budgets while competing against each other to attract users. Our environment models a critical aspect of data acquisition in competing systems that has not been well studied before. We find that the overall performance of an ML predictor improves when predictors can purchase additional labeled data. Surprisingly, however, the quality that users experience (i.e., the accuracy of the predictor selected by each user) can decrease even as the individual predictors get better. We show that this phenomenon arises naturally from a trade-off: competition pushes each predictor to specialize in a subset of the population, while data purchase makes predictors more uniform. We support our findings with both experiments and theoretical analysis.
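
The gap between a predictor's overall accuracy and the quality that users experience can be illustrated with a small numerical sketch. This is not the paper's environment: the two user groups, the accuracy values, and the rule that every user picks the most accurate predictor for their own group are all hypothetical simplifications chosen only to make the trade-off visible.

```python
# Toy illustration (hypothetical numbers, not taken from the paper).
# Two predictors serve a population split evenly into groups "A" and "B".

def overall_accuracy(acc_by_group, group_weights):
    """Population-weighted accuracy of a single predictor."""
    return sum(acc_by_group[g] * w for g, w in group_weights.items())


def user_experienced_quality(predictors, group_weights):
    """Average accuracy of the predictor each user selects.

    Assumption: every user picks the predictor that is most accurate
    on their own group (a simplified, deterministic choice rule).
    """
    return sum(
        max(p[g] for p in predictors) * w for g, w in group_weights.items()
    )


group_weights = {"A": 0.5, "B": 0.5}

# Competition without data purchase: each predictor specializes in one group.
specialized = [{"A": 0.95, "B": 0.55}, {"A": 0.55, "B": 0.95}]

# After data purchase: both predictors become more uniform across groups.
uniform = [{"A": 0.85, "B": 0.85}, {"A": 0.85, "B": 0.85}]

overall_before = overall_accuracy(specialized[0], group_weights)
overall_after = overall_accuracy(uniform[0], group_weights)
quality_before = user_experienced_quality(specialized, group_weights)
quality_after = user_experienced_quality(uniform, group_weights)

print(f"overall accuracy per predictor: {overall_before:.2f} -> {overall_after:.2f}")
print(f"user-experienced quality:       {quality_before:.2f} -> {quality_after:.2f}")
```

With these made-up numbers, each predictor's overall accuracy rises from 0.75 to 0.85, while the quality users experience falls from 0.95 to 0.85, because no predictor remains specialized in any one group.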
