Comparison of Methods Generalizing Max- and Average-Pooling


Mar 02, 2021

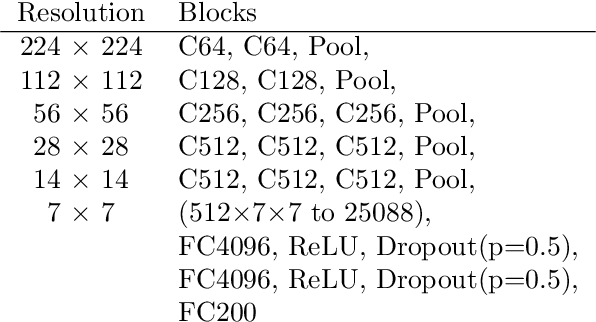

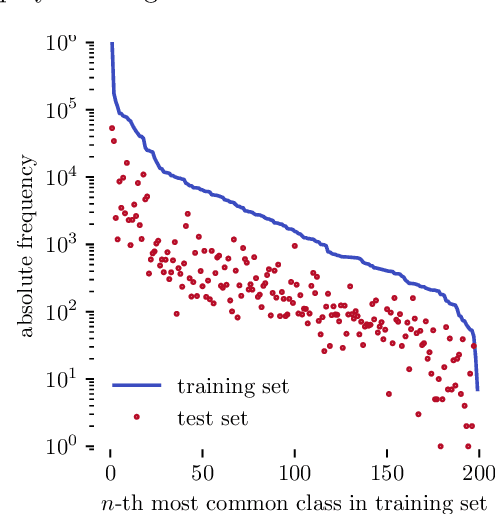

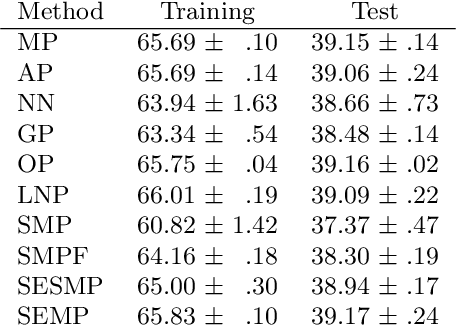

Max- and average-pooling are the most popular pooling methods for downsampling in convolutional neural networks. In this paper, we compare different pooling methods that generalize both max- and average-pooling. Furthermore, we propose another method based on a smooth approximation of the maximum function and put it into context with related methods. For the comparison, we use a VGG16 image classification network and train it on a large dataset of natural high-resolution images (Google Open Images v5). The results show that none of the more sophisticated methods performs significantly better in this classification task than standard max- or average-pooling.
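To make the idea of a pooling operator that generalizes both max- and average-pooling concrete, here is a minimal NumPy sketch of one well-known smooth approximation of the maximum, log-mean-exp: `(1/beta) * log(mean(exp(beta * x)))` over each window. This is an illustrative assumption and not necessarily the method proposed in the paper. The function name `log_mean_exp_pool` and the parameters `beta` and `size` are hypothetical. As `beta` grows the result approaches max-pooling, and as `beta` tends to 0 it approaches average-pooling.

```python
import numpy as np

def log_mean_exp_pool(x, beta, size=2):
    """Pool non-overlapping size x size windows of a 2-D array with log-mean-exp.

    Illustrative sketch only (not the paper's implementation).
    beta -> +inf approaches max-pooling; beta -> 0 approaches average-pooling.
    """
    if beta < 0:
        raise ValueError("this sketch only handles beta >= 0")
    h, w = x.shape
    if h % size or w % size:
        raise ValueError("array shape must be divisible by the window size")
    # Rearrange into (h/size, w/size, size*size): the last axis holds one window.
    windows = (x.reshape(h // size, size, w // size, size)
                .transpose(0, 2, 1, 3)
                .reshape(h // size, w // size, size * size))
    if beta == 0:
        # Limit case: plain average-pooling.
        return windows.mean(axis=-1)
    # Subtract the window maximum before exponentiating for numerical stability.
    m = windows.max(axis=-1, keepdims=True)
    z = np.exp(beta * (windows - m))
    return m[..., 0] + np.log(z.mean(axis=-1)) / beta

x = np.arange(16.0).reshape(4, 4)
print(log_mean_exp_pool(x, beta=0.0))    # equals average-pooling
print(log_mean_exp_pool(x, beta=200.0))  # close to max-pooling
```

In a network, `beta` could be fixed as a hyperparameter or learned per layer; which of these the compared methods use is not stated in the abstract, so the sketch leaves it as a plain argument.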

* 16 pages, 6 figures
