Comparison of Data Representations and Machine Learning Architectures for User Identification on Arbitrary Motion Sequences

Paper and Code

Oct 02, 2022

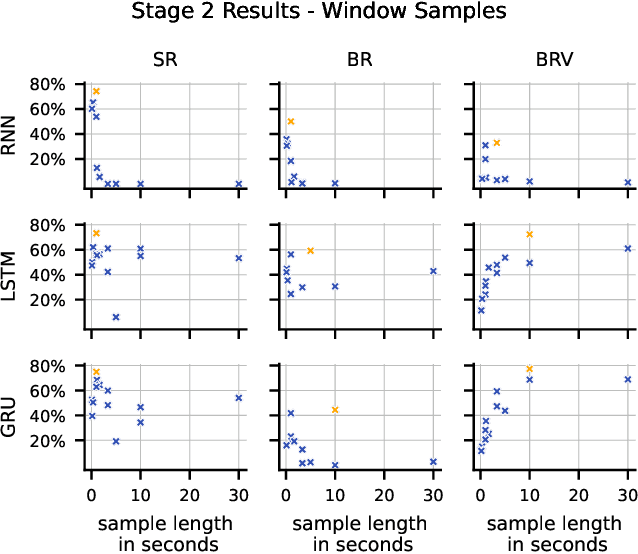

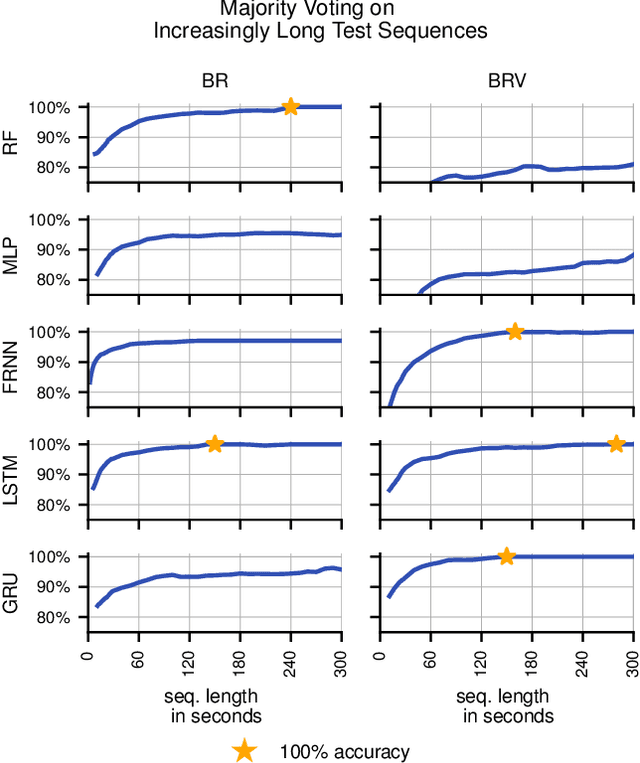

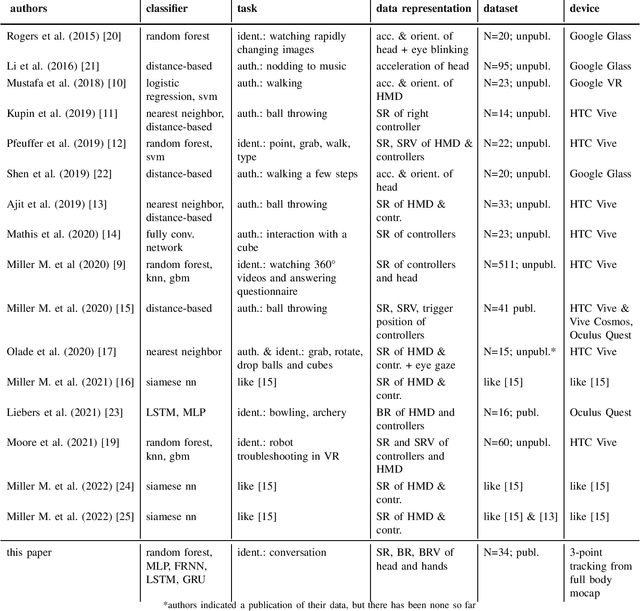

Reliable and robust user identification and authentication are important and often necessary requirements for many digital services. They become paramount in social virtual reality (VR) to ensure trust, specifically in digital encounters with lifelike, realistic-looking avatars as faithful replications of real persons. Recent research has shown great interest in new solutions that verify the identity of users of extended reality (XR) systems. This paper compares different machine learning approaches to identify users based on arbitrary sequences of head and hand movements, a data stream provided by the majority of today's XR systems. We compare three potential representations of the motion data from heads and hands (scene-relative, body-relative, and body-relative velocities) and the performance of five machine learning architectures (random forest, multilayer perceptron, fully recurrent neural network, long short-term memory, and gated recurrent unit). We use the publicly available dataset "Talking with Hands" and publish all our code for models, training, and evaluation to allow reproducibility and to provide baselines for future work. After hyperparameter optimization, the combination of a long short-term memory architecture and body-relative data outperformed competing combinations: the model correctly identifies any of the 34 subjects with an accuracy of 100% within 150 seconds. Altogether, our approach provides an effective foundation for behaviometric-based identification and authentication to guide researchers and practitioners.
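The three data representations named in the abstract can be illustrated with a minimal sketch. It is not the authors' published code. It assumes that "body-relative" means expressing a hand position in a frame anchored at the head, with the head's rotation about the vertical axis undone, and that "velocities" means frame-to-frame differences. The function names `to_body_relative` and `to_velocities`, the yaw-only rotation, and the y-up axis convention are all hypothetical choices made for illustration.

```python
import math

def to_body_relative(head_pos, head_yaw, hand_pos):
    """Express a scene-relative hand position in a head-anchored frame.

    Assumed convention (not taken from the paper): y is the vertical axis
    and head_yaw is the head's rotation about y, in radians.
    """
    # Translate so that the head becomes the origin.
    dx = hand_pos[0] - head_pos[0]
    dy = hand_pos[1] - head_pos[1]
    dz = hand_pos[2] - head_pos[2]
    # Undo the head's yaw by rotating about the y axis by -head_yaw.
    c, s = math.cos(-head_yaw), math.sin(-head_yaw)
    return (c * dx + s * dz, dy, -s * dx + c * dz)

def to_velocities(frames):
    """Turn a sequence of positions into per-frame differences."""
    return [
        tuple(b - a for a, b in zip(prev, cur))
        for prev, cur in zip(frames, frames[1:])
    ]

# The same pose at two different places in the scene.
pose_a = to_body_relative((0.0, 1.7, 0.0), 0.0, (0.3, 1.2, 0.4))
pose_b = to_body_relative((5.0, 1.7, 2.0), 0.0, (5.3, 1.2, 2.4))

# Body-relative velocities from three consecutive body-relative frames.
steps = to_velocities([(0.0, 0.0, 0.0), (1.0, 2.0, 3.0), (1.0, 2.0, 5.0)])
```

Under these assumptions, `pose_a` and `pose_b` agree up to floating-point error even though the user stands at different places in the scene. That invariance is the intuition for why a body-relative encoding may suit identification better than raw scene coordinates.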
