Comparing Human and Machine Deepfake Detection with Affective and Holistic Processing

May 13, 2021

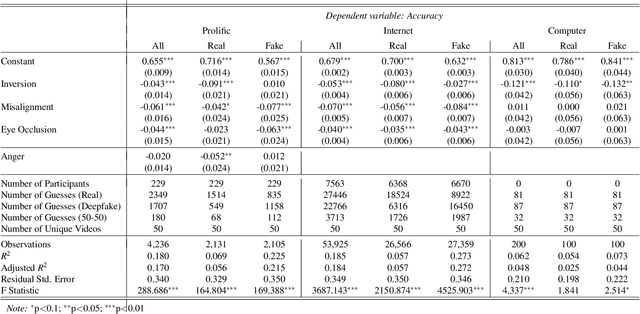

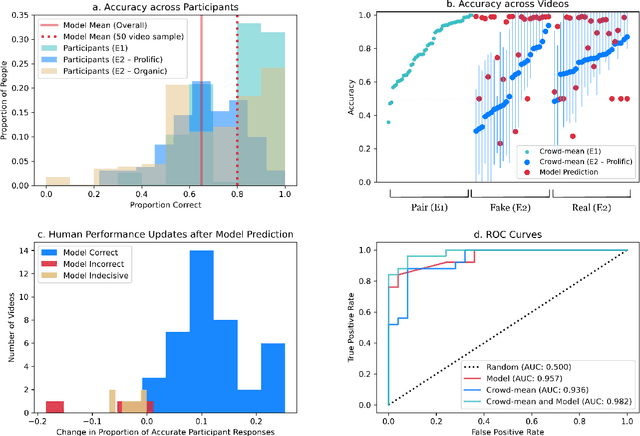

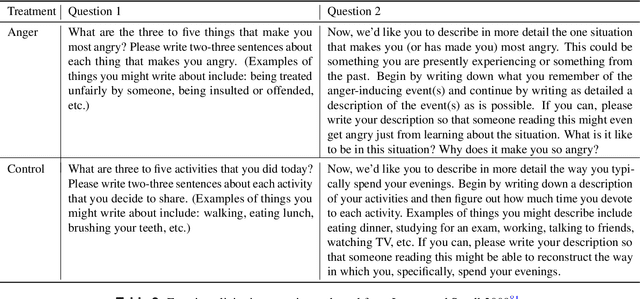

The recent emergence of deepfake videos raises an important societal question: how can we know whether a video we watch is real or fake? In three online studies with 15,016 participants, we present authentic videos and deepfakes and ask participants to identify which is which. We compare the performance of ordinary participants against the leading computer vision deepfake detection model and find that the two are similarly accurate but make different kinds of mistakes. Participants with access to the model's prediction are more accurate than either participants or the model alone, but inaccurate model predictions often decrease participants' accuracy. We embed randomized experiments and find that incidental anger decreases participants' performance, and that obstructing holistic visual processing of faces also hinders participants' performance while leaving the model's mostly unaffected. These results suggest that considering emotional influences and harnessing the specialized, holistic visual processing of ordinary people could be promising defenses against machine-manipulated media.
