Comparative Study of Machine Learning Models and BERT on SQuAD

Paper and Code

May 22, 2020

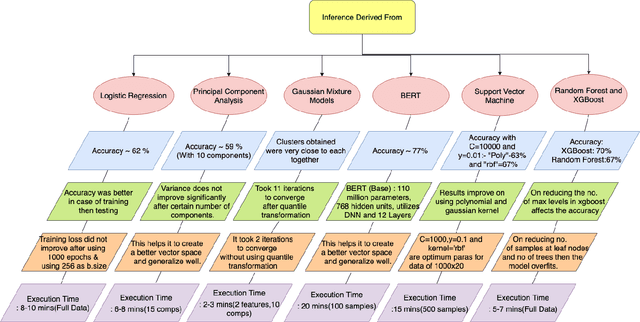

This study aims to provide a comparative analysis of performance of certain models popular in machine learning and the BERT model on the Stanford Question Answering Dataset (SQuAD). The analysis shows that the BERT model, which was once state-of-the-art on SQuAD, gives higher accuracy in comparison to other models. However, BERT requires a greater execution time even when only 100 samples are used. This shows that with increasing accuracy more amount of time is invested in training the data. Whereas in case of preliminary machine learning models, execution time for full data is lower but accuracy is compromised.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge