Comparative Probing of Lexical Semantics Theories for Cognitive Plausibility and Technological Usefulness

Paper and Code

Nov 16, 2020

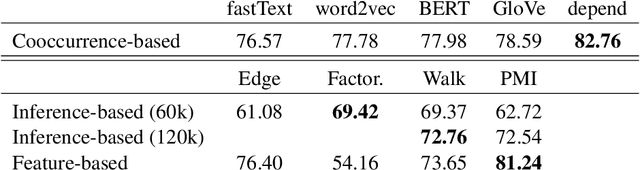

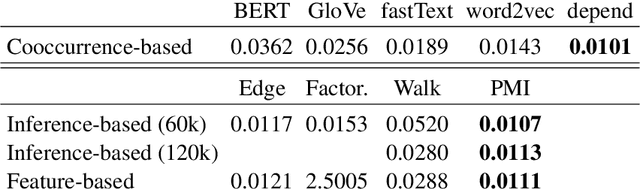

Lexical semantics theories differ in advocating that the meaning of words is represented as an inference graph, a feature mapping or a vector space, thus raising the question: is it the case that one of these approaches is superior to the others in representing lexical semantics appropriately? Or in its non antagonistic counterpart: could there be a unified account of lexical semantics where these approaches seamlessly emerge as (partial) renderings of (different) aspects of a core semantic knowledge base? In this paper, we contribute to these research questions with a number of experiments that systematically probe different lexical semantics theories for their levels of cognitive plausibility and of technological usefulness. The empirical findings obtained from these experiments advance our insight on lexical semantics as the feature-based approach emerges as superior to the other ones, and arguably also move us closer to finding answers to the research questions above.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge