Compact Multi-Class Boosted Trees

Paper and Code

Oct 31, 2017

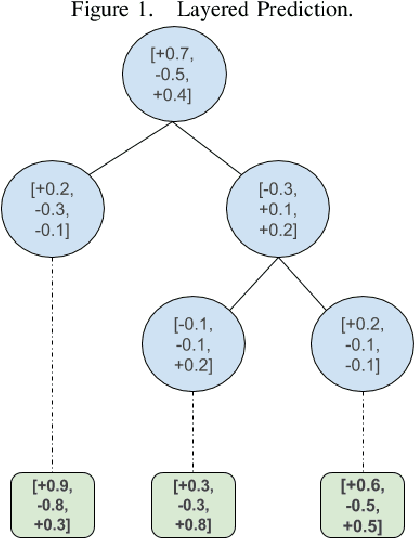

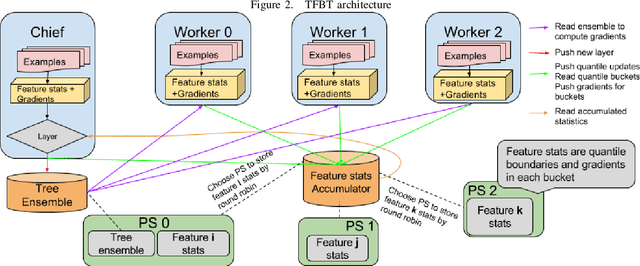

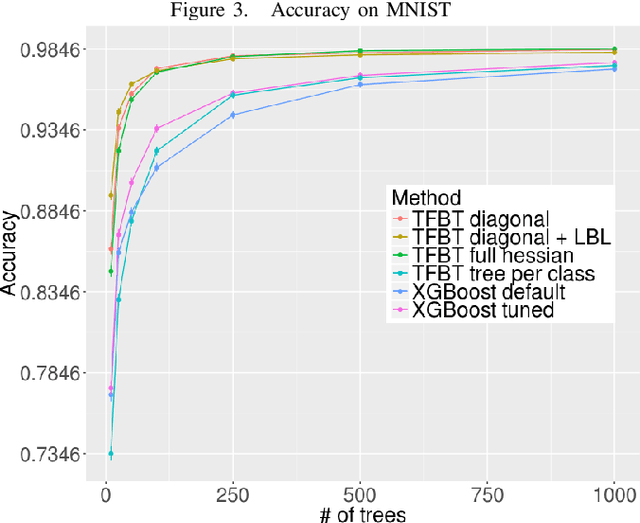

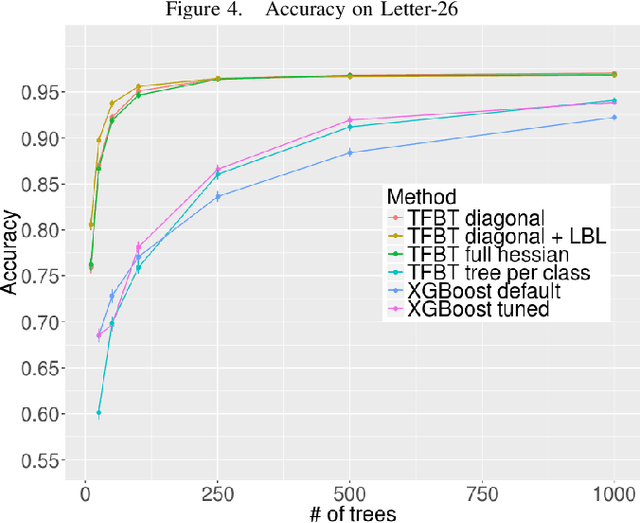

Gradient boosted decision trees are a popular machine learning technique, in part because of their ability to give good accuracy with small models. We describe two extensions to the standard tree boosting algorithm designed to increase this advantage. The first improvement extends the boosting formalism from scalar-valued trees to vector-valued trees. This allows individual trees to be used as multiclass classifiers, rather than requiring one tree per class, and drastically reduces the model size required for multiclass problems. We also show that some other popular vector-valued gradient boosted trees modifications fit into this formulation and can be easily obtained in our implementation. The second extension, layer-by-layer boosting, takes smaller steps in function space, which is empirically shown to lead to a faster convergence and to a more compact ensemble. We have added both improvements to the open-source TensorFlow Boosted trees (TFBT) package, and we demonstrate their efficacy on a variety of multiclass datasets. We expect these extensions will be of particular interest to boosted tree applications that require small models, such as embedded devices, applications requiring fast inference, or applications desiring more interpretable models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge