Compact Compositional Models

Paper and Code

Oct 29, 2016

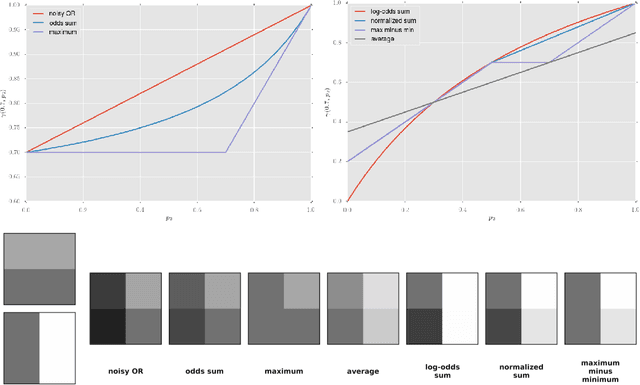

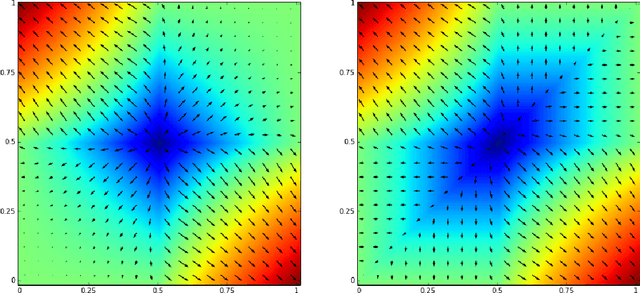

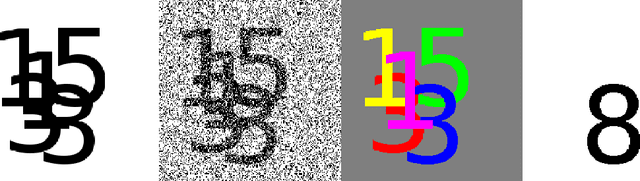

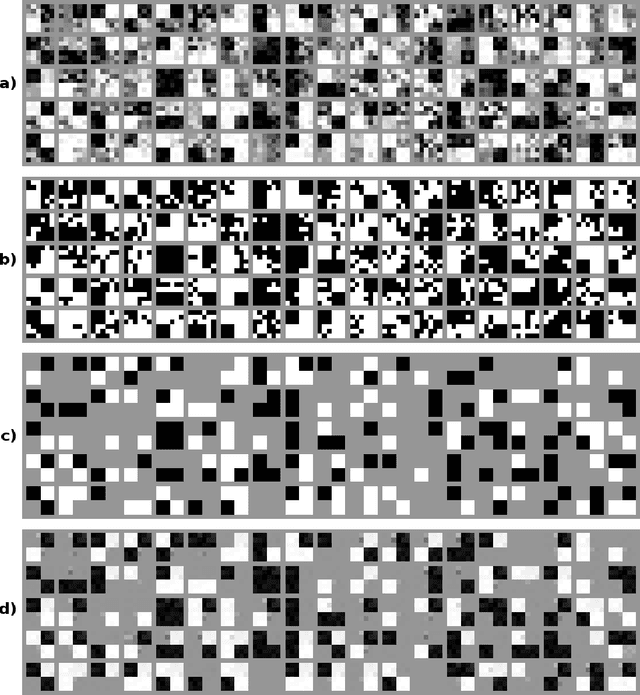

Learning compact and interpretable representations is a very natural task, which has not been solved satisfactorily even for simple binary datasets. In this paper, we review various ways of composing experts for binary data and argue that competitive forms of interaction are best suited to learn low-dimensional representations. We propose a new composition rule that discourages experts from focusing on similar structures and that penalizes opposing votes strongly so that abstaining from voting becomes more attractive. We also introduce a novel sequential initialization procedure, which is based on a process of oversimplification and correction. Experiments show that with our approach very intuitive models can be learned.
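For readers unfamiliar with what "composing experts for binary data" means, here is a minimal sketch of two standard composition rules for a single binary variable: a product of experts (which sums log-odds) and a noisy-OR. It does not implement the composition rule proposed in the paper, which the abstract does not specify. The function names and the convention that an expert abstains by voting 0.5 are assumptions made for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def logit(p):
    # Assumes 0 < p < 1; a vote of exactly 0 or 1 would need clipping first.
    return math.log(p / (1.0 - p))


def product_of_experts(probs):
    """Normalized product of Bernoulli experts.

    Multiplying the experts' distributions and renormalizing is
    equivalent to summing their log-odds, so a vote of 0.5
    (log-odds 0) acts as an abstention.
    """
    return sigmoid(sum(logit(p) for p in probs))


def noisy_or(probs):
    """The variable is on unless every expert independently fails to turn it on."""
    p_off = 1.0
    for p in probs:
        p_off *= 1.0 - p
    return 1.0 - p_off


if __name__ == "__main__":
    opposing = [0.9, 0.1]    # two experts that disagree
    abstaining = [0.9, 0.5]  # one confident expert, one abstaining
    print(product_of_experts(opposing))
    print(product_of_experts(abstaining))
    print(noisy_or(opposing))
```

Under the product rule, two equally confident opposing votes cancel to 0.5, whereas an abstaining expert leaves the other expert's vote unchanged. The abstract's emphasis on how opposing votes and abstentions are treated concerns this kind of interaction.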
