Combining Stochastic Adaptive Cubic Regularization with Negative Curvature for Nonconvex Optimization

Jun 27, 2019

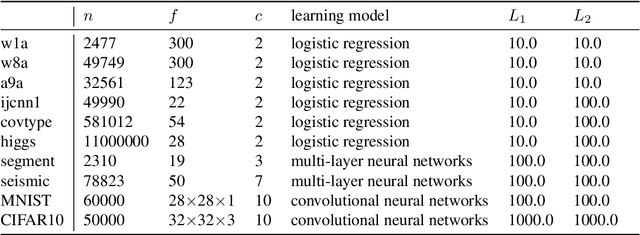

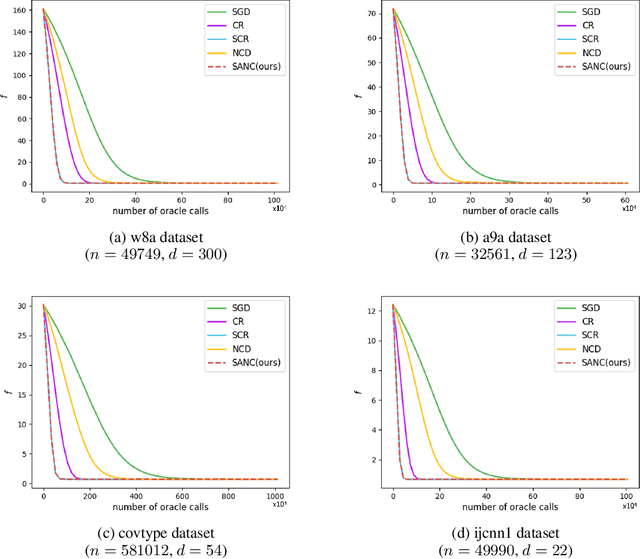

We study the minimization of nonconvex finite-sum functions, which arise frequently in machine learning. For this problem, the adaptive cubic regularized Newton method offers strong global convergence guarantees and can escape strict saddle points. The method uses a trust-region-like scheme to decide whether an iteration is successful, and it updates the iterate only on successful iterations. In this paper, we propose an algorithm that combines negative curvature with the adaptive cubic regularized Newton method so that the iterate is also updated at unsuccessful iterations. We call the new method Stochastic Adaptive cubic regularization with Negative Curvature (SANC). Unlike the previous method, SANC builds its stochastic gradient and Hessian estimators from independent sets of data points whose size stays the same across all iterations, which makes it more practical for large-scale machine learning problems. To the best of our knowledge, this is the first approach that combines the negative curvature method with the adaptive cubic regularized Newton method. Finally, we report experimental results, including neural network problems, that support the efficiency of our method.
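To make the idea concrete, here is a minimal NumPy sketch of one way such a loop could look. It is an illustration under stated assumptions, not the authors' algorithm. The toy finite-sum loss, the function names (`loss`, `grad`, `hess`, `cauchy_step`, `sanc_sketch`), and all parameter values are hypothetical. The cubic subproblem is replaced by the closed-form Cauchy step along the negative gradient, the success ratio is computed on the full loss for simplicity, and the negative curvature step is safeguarded by backtracking; none of these choices is taken from the paper.

```python
import numpy as np

# Toy nonconvex finite-sum loss (an assumption for illustration):
# l_i(x) = 0.25 * (a_i . x)^4 - 0.5 * (a_i . x)^2, which has a strict saddle/maximum at x = 0.
def loss(x, A):
    t = A @ x
    return np.mean(0.25 * t**4 - 0.5 * t**2)

def grad(x, A):
    t = A @ x
    return A.T @ (t**3 - t) / len(A)

def hess(x, A):
    t = A @ x
    return (A * (3 * t**2 - 1)[:, None]).T @ A / len(A)

def cauchy_step(g, H, sigma):
    """Minimizer of the cubic model along -g (a simplification of the full subproblem)."""
    gnorm = np.linalg.norm(g)
    if gnorm < 1e-12:
        return np.zeros_like(g)
    gHg = g @ H @ g
    alpha = (-gHg + np.sqrt(gHg**2 + 4 * sigma * gnorm**5)) / (2 * sigma * gnorm**3)
    return -alpha * g

def sanc_sketch(A, x0, iters=50, batch=32, sigma=1.0, eta=0.1, gamma=2.0, seed=0):
    rng = np.random.default_rng(seed)
    x = np.array(x0, dtype=float)
    n = len(A)
    for _ in range(iters):
        # Independent, fixed-size batches for the gradient and Hessian estimators.
        A_g = A[rng.choice(n, batch, replace=False)]
        A_h = A[rng.choice(n, batch, replace=False)]
        g, H = grad(x, A_g), hess(x, A_h)

        s = cauchy_step(g, H, sigma)
        # Decrease predicted by the cubic model m(s) = g.s + 0.5 s'Hs + (sigma/3)||s||^3.
        pred = -(g @ s + 0.5 * s @ H @ s + sigma / 3 * np.linalg.norm(s) ** 3)
        f_x = loss(x, A)  # full loss used here for simplicity (assumption)
        rho = (f_x - loss(x + s, A)) / pred if pred > 1e-16 else -np.inf

        if rho >= eta:
            # Successful iteration: accept the step and relax the regularization.
            x = x + s
            sigma = max(sigma / gamma, 1e-6)
        else:
            # Unsuccessful iteration: tighten the regularization, then try a
            # negative curvature step instead of leaving x unchanged.
            sigma *= gamma
            lam, V = np.linalg.eigh(H)
            if lam[0] < -1e-8:
                v = V[:, 0] if g @ V[:, 0] <= 0 else -V[:, 0]
                step = abs(lam[0]) * v
                for _try in range(20):  # backtracking safeguard (assumption)
                    if loss(x + step, A) < f_x:
                        x = x + step
                        break
                    step = 0.5 * step
    return x
```

Starting from `x0 = np.zeros(d)`, the stochastic gradient is exactly zero, so the cubic step vanishes and the iteration counts as unsuccessful. In this sketch the negative curvature branch is what moves the iterate away from the saddle, which is the behavior the abstract attributes to updating at unsuccessful iterations.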
