Collision Avoidance for Multiple UAVs in Unknown Scenarios with Causal Representation Disentanglement

Paper and Code

Jul 04, 2024

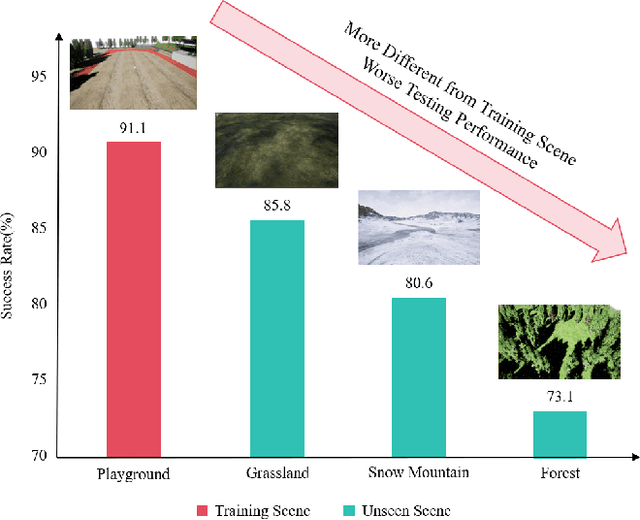

Deep reinforcement learning (DRL) has achieved remarkable progress in online path planning tasks for multi-UAV systems. However, existing DRL-based methods often suffer from performance degradation when tackling unseen scenarios, since the non-causal factors in visual representations adversely affect policy learning. To address this issue, we propose a novel representation learning approach, \ie, causal representation disentanglement, which can identify the causal and non-causal factors in representations. After that, we only pass causal factors for subsequent policy learning and thus explicitly eliminate the influence of non-causal factors, which effectively improves the generalization ability of DRL models. Experimental results show that our proposed method can achieve robust navigation performance and effective collision avoidance especially in unseen scenarios, which significantly outperforms existing SOTA algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge