Collaborative Information Bottleneck


Nov 17, 2017

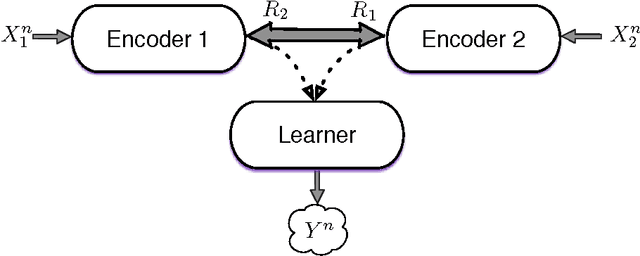

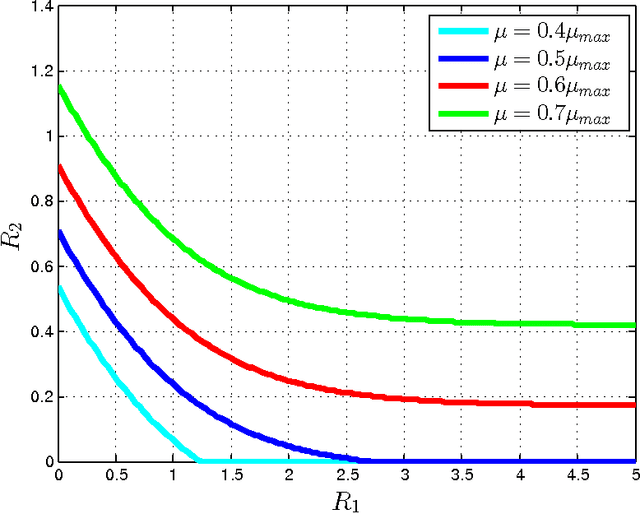

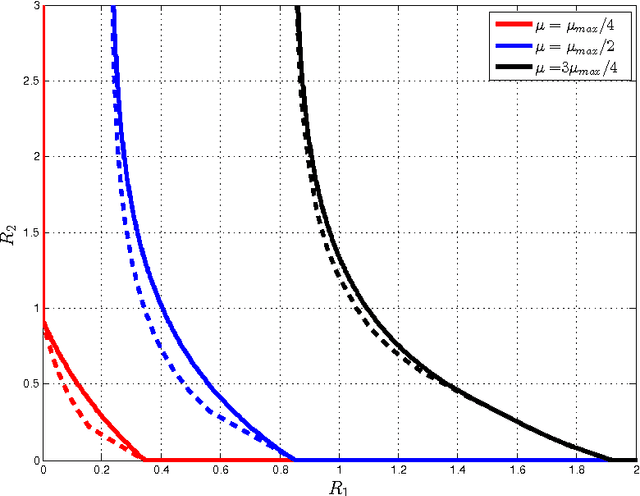

This paper investigates a multi-terminal source coding problem under a logarithmic-loss fidelity criterion, which does not necessarily lead to an additive distortion measure. The problem is motivated by an extension of the Information Bottleneck method to a multi-source scenario in which several encoders must cooperatively build rate-limited descriptions of their sources in order to maximize information with respect to other unobserved (hidden) sources. More precisely, we study the fundamental information-theoretic limits of two problems: (i) the Two-way Collaborative Information Bottleneck (TW-CIB) and (ii) the Collaborative Distributed Information Bottleneck (CDIB). The TW-CIB problem consists of two distant encoders that separately observe marginal (dependent) components $X_1$ and $X_2$ and can cooperate through multiple exchanges of limited information, with the aim of extracting information about hidden variables $(Y_1,Y_2)$, which can be arbitrarily dependent on $(X_1,X_2)$. In CDIB, by contrast, two cooperating encoders separately observe $X_1$ and $X_2$, and a third node listens to the exchanges between the two encoders in order to obtain information about a hidden variable $Y$. The relevance (figure of merit) is measured by a normalized (per-sample) multi-letter mutual information metric (log-loss fidelity), and a tradeoff arises when the complexity of the descriptions is constrained, where complexity is measured by the rates needed for the exchanges between the encoders and decoders involved. Inner and outer bounds to the complexity-relevance region of these problems are derived, from which optimality is characterized for several cases of interest. The resulting theoretical complexity-relevance regions are finally evaluated for binary symmetric and Gaussian statistical models.
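To make the complexity-relevance tradeoff concrete, the following minimal sketch evaluates the classical single-encoder Information Bottleneck for a binary symmetric model. It is not the TW-CIB or CDIB region from the paper. The setup is an assumption chosen for illustration: $X \sim \mathrm{Bern}(1/2)$, $Y = X \oplus \mathrm{Bern}(p)$, and a description $U = X \oplus \mathrm{Bern}(q)$, which gives complexity $I(U;X) = 1 - h(q)$ and relevance $I(U;Y) = 1 - h(p * q)$, with $p * q = p(1-q) + q(1-p)$. The function names are hypothetical.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy h(p) in bits, with h(0) = h(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def binary_convolution(a: float, b: float) -> float:
    """Crossover probability of two cascaded binary symmetric channels."""
    return a * (1.0 - b) + b * (1.0 - a)


def ib_point(p: float, q: float) -> tuple[float, float]:
    """Return (complexity, relevance) in bits per sample.

    Assumed model (illustrative, single encoder only):
    X ~ Bern(1/2), Y = X xor Bern(p), U = X xor Bern(q).
    """
    complexity = 1.0 - binary_entropy(q)                        # I(U; X)
    relevance = 1.0 - binary_entropy(binary_convolution(p, q))  # I(U; Y)
    return complexity, relevance


if __name__ == "__main__":
    p = 0.1  # assumed crossover probability between X and Y
    for q in (0.0, 0.05, 0.11, 0.25, 0.5):
        complexity, relevance = ib_point(p, q)
        print(f"q={q:.2f}  complexity={complexity:.4f}  relevance={relevance:.4f}")
```

Sweeping $q$ from $0$ to $1/2$ traces the curve from full description (complexity of 1 bit, relevance $1 - h(p)$) down to no description (both zero). The collaborative regions studied in the paper generalize this kind of curve to interacting encoders.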
