Collaborative Graph Contrastive Learning: Data Augmentation Composition May Not be Necessary for Graph Representation Learning

Paper and Code

Nov 05, 2021

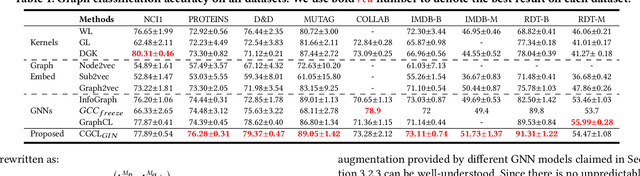

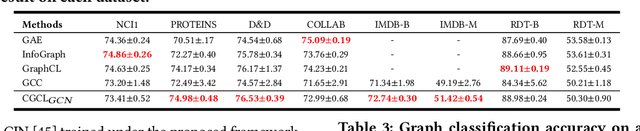

Unsupervised graph representation learning is a non-trivial topic for graph data. The success of contrastive learning and self-supervised learning in the unsupervised representation learning of structured data inspires similar attempts on the graph. The current unsupervised graph representation learning and pre-training using the contrastive loss are mainly based on the contrast between handcrafted augmented graph data. However, the graph data augmentation is still not well-explored due to the unpredictable invariance. In this paper, we propose a novel collaborative graph neural networks contrastive learning framework (CGCL), which uses multiple graph encoders to observe the graph. Features observed from different views act as the graph augmentation for contrastive learning between graph encoders, avoiding any perturbation to guarantee the invariance. CGCL is capable of handling both graph-level and node-level representation learning. Extensive experiments demonstrate the advantages of CGCL in unsupervised graph representation learning and the non-necessity of handcrafted data augmentation composition for graph representation learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge