Characterizing the dynamics of learning in repeated reference games


Dec 16, 2019

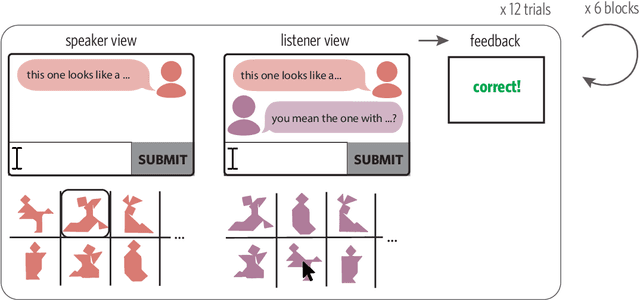

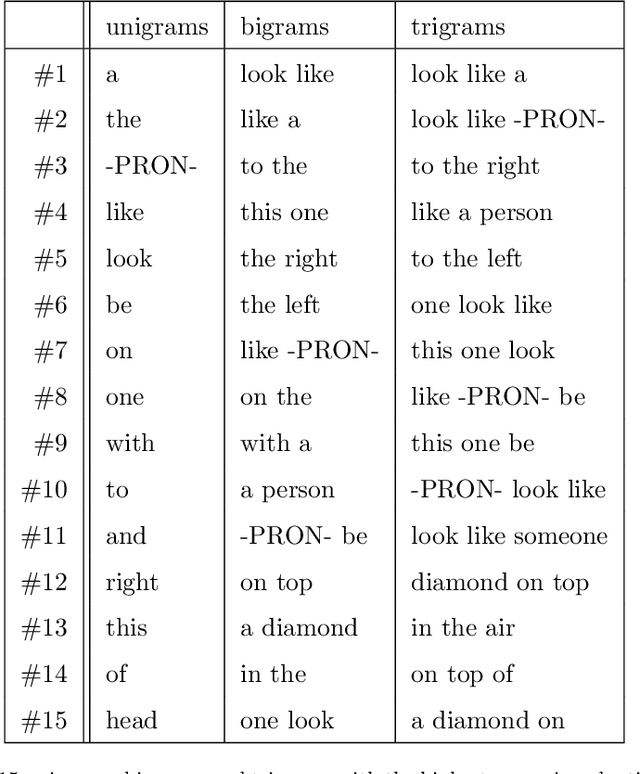

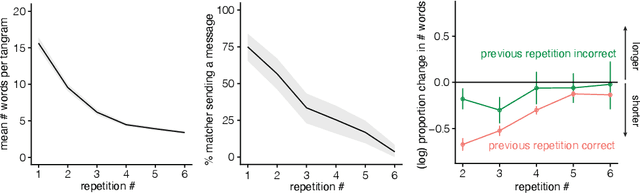

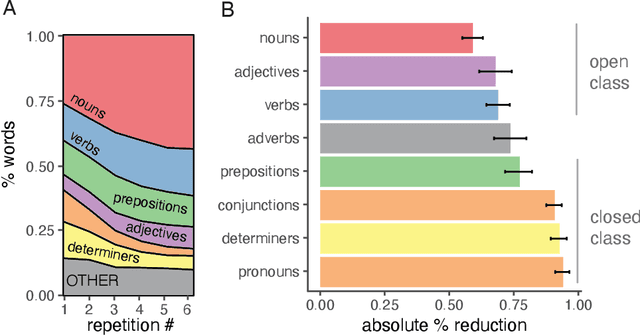

The language we use over the course of conversation changes as we establish common ground and learn what our partner finds meaningful. Here we draw upon recent advances in natural language processing to provide a finer-grained characterization of the dynamics of this learning process. We release an open corpus (>15,000 utterances) of extended dyadic interactions in a classic repeated reference game task where pairs of participants had to coordinate on how to refer to initially difficult-to-describe tangram stimuli. We find that different pairs discover a wide variety of idiosyncratic but efficient and stable solutions to the problem of reference. Furthermore, these conventions are shaped by the communicative context: words that are more discriminative in the initial context (i.e., that are used for one target more than for others) are more likely to persist through the final repetition. Finally, we find systematic structure in how a speaker's referring expressions become more efficient over time: syntactic units drop out in clusters following positive feedback from the listener, eventually leaving short labels containing open-class parts of speech. These findings provide a higher-resolution look at the quantitative dynamics of ad hoc convention formation and support further development of computational models of learning in communication.
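To make the notion of a "discriminative" word concrete, here is a minimal Python sketch. It is not the measure used in the paper, which the abstract does not specify. It assumes one simple proxy: the share of a word's uses that go to its most frequent target. The function name `discriminativeness`, the target labels, and the toy utterances are all hypothetical and chosen only for illustration.

```python
from collections import Counter, defaultdict


def discriminativeness(utterances):
    """Score each word by how concentrated its use is on a single target.

    `utterances` is an iterable of (target_id, text) pairs, e.g. from the
    first repetition of the game. The score is an assumed proxy: the share
    of a word's uses that go to its most frequent target, so 1.0 means the
    word was used for one target only.
    """
    counts = defaultdict(Counter)  # word -> Counter mapping target -> uses
    for target, text in utterances:
        for word in text.lower().split():
            counts[word][target] += 1
    return {w: max(c.values()) / sum(c.values()) for w, c in counts.items()}


# Toy, made-up utterances (not taken from the released corpus).
first_round = [
    ("tangram_A", "the ice skater with one leg up"),
    ("tangram_B", "the person kneeling down"),
    ("tangram_C", "the rabbit facing left"),
]
scores = discriminativeness(first_round)
```

Under this proxy, a word such as "skater" that appears for only one target scores 1.0, while "the", spread evenly over three targets, scores 1/3. The abstract's claim would then predict that high-scoring words are the ones more likely to survive into the final repetition.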
