Characterization of Constrained Continuous Multiobjective Optimization Problems: A Performance Space Perspective

Feb 04, 2023

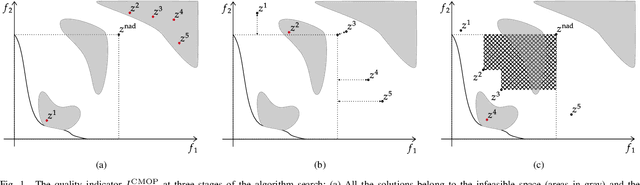

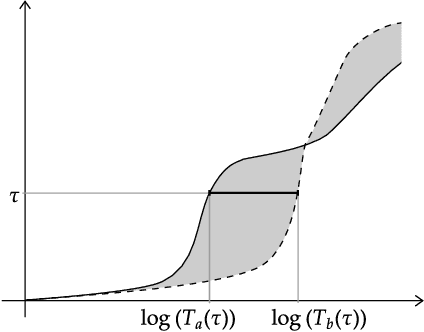

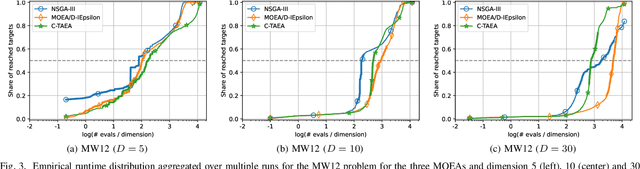

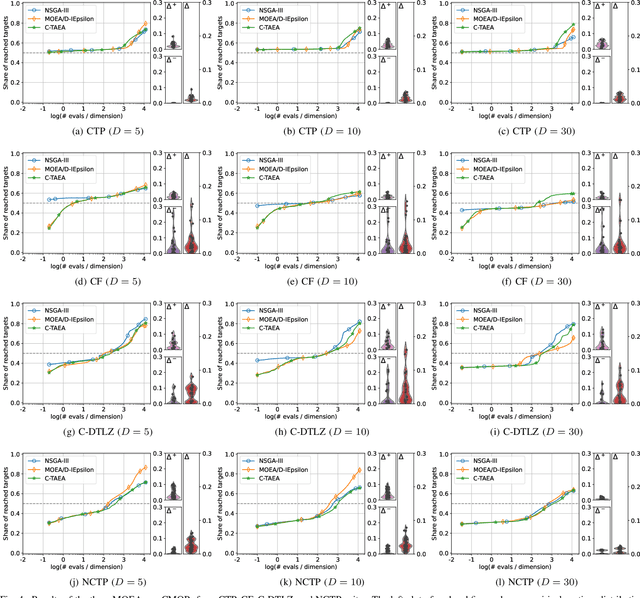

Constrained multiobjective optimization has gained much interest in the past few years. However, constrained multiobjective optimization problems (CMOPs) are still poorly understood. Consequently, choosing adequate CMOPs for benchmarking is difficult and lacks a formal foundation. This paper addresses the issue by exploring CMOPs from a performance space perspective. First, it presents a novel performance assessment approach designed explicitly for constrained multiobjective optimization. This methodology is a first attempt to measure performance in approximating the Pareto front and in satisfying constraints simultaneously. Second, it proposes an approach to measure the capability of a given optimization problem to differentiate among algorithm performances. Finally, this approach is used to contrast eight frequently used artificial test suites of CMOPs. The experimental results reveal which suites are more effective at distinguishing among three well-known multiobjective optimization algorithms. Benchmark designers can use these results to select the most appropriate CMOPs for their needs.
