Chaos and Complexity from Quantum Neural Network: A study with Diffusion Metric in Machine Learning


Nov 16, 2020

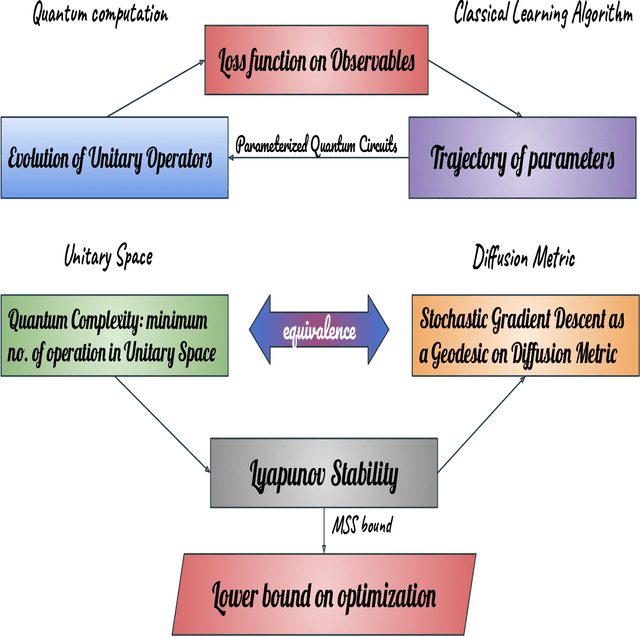

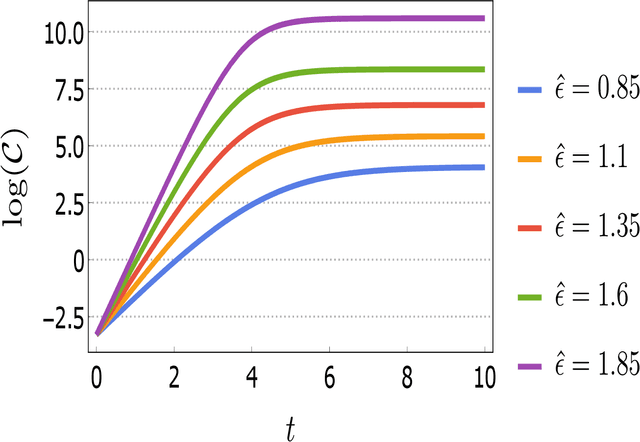

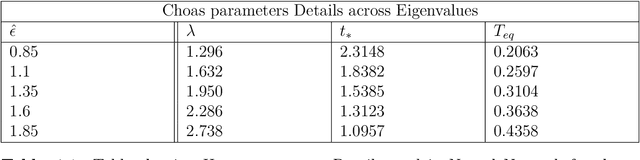

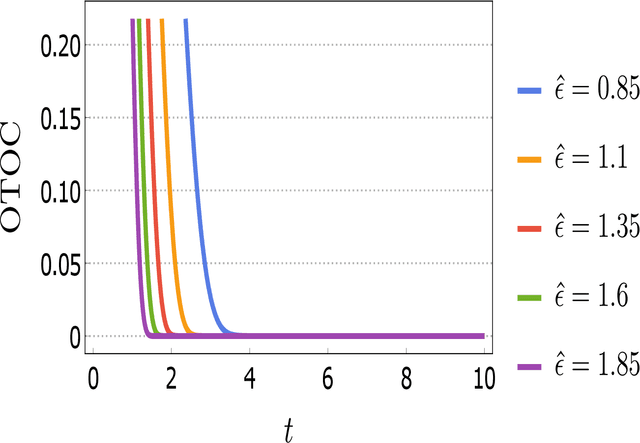

In this work, our prime objective is to study the phenomena of quantum chaos and complexity in the machine learning dynamics of a Quantum Neural Network (QNN). A Parameterized Quantum Circuit (PQC) in the hybrid quantum-classical framework is introduced as a universal function approximator to perform optimization with Stochastic Gradient Descent (SGD). We employ a statistical and differential geometric approach to study the learning theory of the QNN. The evolution of the parameterized unitary operators is correlated with the trajectory of the parameters in the Diffusion metric. We establish parameterized versions of Quantum Complexity and Quantum Chaos in terms of physically relevant quantities, which are essential both for determining stability and for providing a significant lower bound on the generalization capability of the QNN. We explicitly prove that when the system executes limit cycles or oscillations in the phase space, the generalization capability of the QNN is maximized. Moreover, a lower bound on the optimization rate is determined using the well-known Maldacena-Shenker-Stanford (MSS) bound on the Quantum Lyapunov exponent.
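To make the hybrid quantum-classical setup concrete, the following is a minimal sketch of a one-qubit PQC trained with SGD, simulated classically with NumPy. It is not the paper's construction: the circuit (two RY rotations), the squared-error loss, the parameter-shift gradient, and all names (`ry`, `predict`, `grad_theta`, `train`, `TRUE_THETA`) are assumptions chosen for illustration. The recorded `trajectory` is only meant to illustrate what "the trajectory of the parameters" refers to; the Diffusion metric itself is not computed here.

```python
import numpy as np

# Hypothetical toy model, not the paper's circuit: one qubit, one trainable angle.

def ry(angle):
    """Matrix of a single-qubit rotation about the Y axis."""
    c, s = np.cos(angle / 2), np.sin(angle / 2)
    return np.array([[c, -s], [s, c]])

Z = np.diag([1.0, -1.0])  # Pauli-Z observable

def predict(x, theta):
    """Encode input x, apply the trainable rotation, return <Z>."""
    state = ry(theta) @ ry(x) @ np.array([1.0, 0.0])  # start from |0>
    return float(state @ Z @ state)  # real amplitudes, so no conjugate needed

def grad_theta(x, theta):
    """Parameter-shift rule: exact d<Z>/d(theta) for a rotation gate."""
    shift = np.pi / 2
    return 0.5 * (predict(x, theta + shift) - predict(x, theta - shift))

def train(xs, ys, theta=0.0, lr=0.2, steps=500, seed=0):
    """SGD on the squared error, one randomly drawn sample per step."""
    rng = np.random.default_rng(seed)
    trajectory = [theta]
    for _ in range(steps):
        i = rng.integers(len(xs))
        residual = predict(xs[i], theta) - ys[i]
        theta -= lr * 2.0 * residual * grad_theta(xs[i], theta)
        trajectory.append(theta)  # parameter trajectory during learning
    return theta, trajectory

# Synthetic targets generated by the same circuit with a known angle (assumed).
TRUE_THETA = 0.7
xs = np.linspace(0.0, 2 * np.pi, 20, endpoint=False)
ys = np.array([predict(x, TRUE_THETA) for x in xs])

theta_fit, trajectory = train(xs, ys)
print(f"fitted theta = {theta_fit:.4f}")
```

For this circuit the output reduces to cos(x + theta), so the fitted angle can be checked directly against `TRUE_THETA`. A multi-qubit PQC would follow the same loop, with one parameter-shift evaluation pair per trainable angle.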
