Challenges and Obstacles Towards Deploying Deep Learning Models on Mobile Devices

May 06, 2021

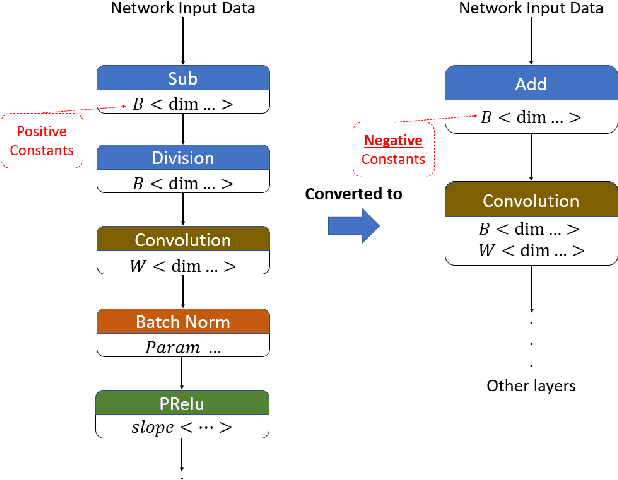

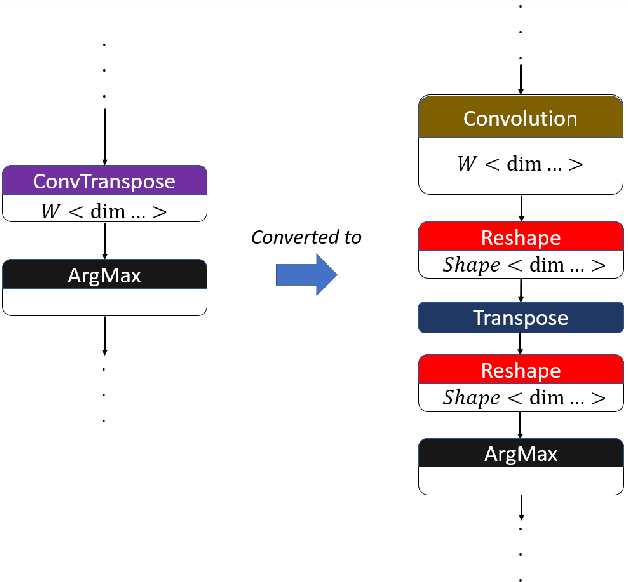

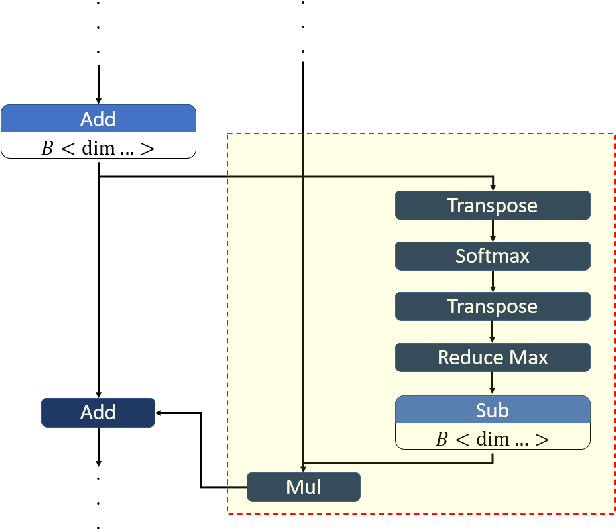

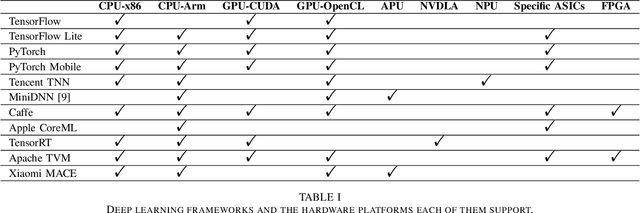

From computer vision and speech recognition to trajectory forecasting in autonomous vehicles, deep learning approaches are at the forefront of many domains. Deep learning models are developed using a plethora of high-level, generic frameworks and libraries. Running those models on mobile devices requires hardware-aware optimizations and, in most cases, converting the models to other formats or using a third-party framework. In practice, most developed models must undergo conversion, adaptation, and, in some cases, full retraining to match the requirements and features of the framework that deploys the model on the target platform. A variety of hardware platforms with heterogeneous computing elements, from wearable devices to high-performance GPU clusters, are used to run deep learning models. In this paper, we present the existing challenges, obstacles, and practical solutions for deploying deep learning models on mobile devices.
