Certifiable Machine Unlearning for Linear Models

Paper and Code

Jul 28, 2021

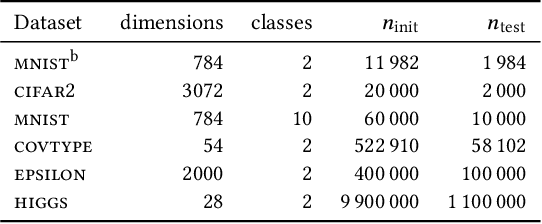

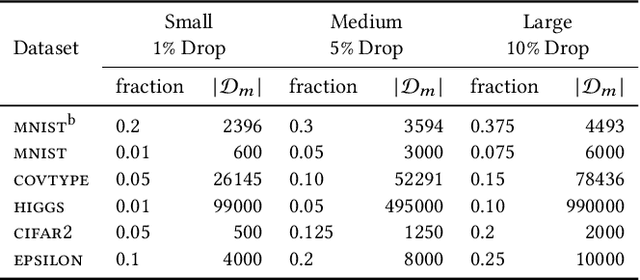

Machine unlearning is the task of updating machine learning (ML) models after a subset of their training data is deleted. Methods for this task should combine effectiveness and efficiency: they should effectively "unlearn" the deleted data without requiring excessive computational effort (e.g., a full retraining) for a small number of deletions. Such a combination is typically achieved by tolerating some amount of approximation in the unlearning. In addition, laws and regulations in the spirit of "the right to be forgotten" have given rise to requirements for certifiability, i.e., the ability to demonstrate that the deleted data has indeed been unlearned by the ML model. In this paper, we present an experimental study of the three state-of-the-art approximate unlearning methods for linear models and demonstrate the trade-offs between efficiency, effectiveness, and certifiability offered by each method. In implementing the study, we extend some of the existing works and describe a common ML pipeline to compare and evaluate the unlearning methods on six real-world datasets and in a variety of settings. We provide insights into the effect of the quantity and distribution of the deleted data on ML models and into the performance of each unlearning method in different settings. We also propose a practical online strategy to determine when the accumulated error from approximate unlearning is large enough to warrant a full retraining of the ML model.
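To make the trade-off between approximate unlearning and full retraining concrete, here is a minimal sketch in Python. It is not one of the three methods evaluated in the paper, and it is not the paper's retraining strategy. It assumes an L2-regularized logistic regression model, uses a single Newton step on the remaining data as a generic approximate update, and treats the accumulated gradient residual as a stand-in error measure. The function names, the synthetic data, and the `budget` threshold are all hypothetical and chosen only for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def grad(w, X, y, lam):
    # Gradient of the L2-regularized logistic loss (labels in {0, 1}).
    return X.T @ (sigmoid(X @ w) - y) + lam * w

def hessian(w, X, lam):
    # Hessian of the same loss; positive definite for lam > 0.
    p = sigmoid(X @ w)
    return (X * (p * (1.0 - p))[:, None]).T @ X + lam * np.eye(X.shape[1])

def train(X, y, lam, iters=50):
    # Full (re)training with plain Newton iterations from zero.
    w = np.zeros(X.shape[1])
    for _ in range(iters):
        w = w - np.linalg.solve(hessian(w, X, lam), grad(w, X, y, lam))
    return w

def newton_unlearn(w, X_keep, y_keep, lam):
    # Approximate unlearning: one Newton step on the remaining data only.
    step = np.linalg.solve(hessian(w, X_keep, lam), grad(w, X_keep, y_keep, lam))
    return w - step

# Synthetic data (illustrative assumption, not one of the paper's datasets).
rng = np.random.default_rng(0)
n, d, lam = 500, 5, 1.0
X = rng.normal(size=(n, d))
w_true = rng.normal(size=d)
y = (rng.random(n) < sigmoid(X @ w_true)).astype(float)

w_full = train(X, y, lam)

# Online loop: delete in batches, update approximately, and retrain once the
# accumulated gradient residual exceeds a hypothetical budget.
budget = 1e-3
accumulated = 0.0
keep = np.ones(n, dtype=bool)
w = w_full.copy()
for batch in np.array_split(rng.permutation(n)[:50], 5):
    keep[batch] = False
    w = newton_unlearn(w, X[keep], y[keep], lam)
    accumulated += np.linalg.norm(grad(w, X[keep], y[keep], lam))
    if accumulated > budget:
        w = train(X[keep], y[keep], lam)  # fall back to a full retrain
        accumulated = 0.0
```

The gradient of the remaining-data loss is zero at the fully retrained model, so its norm at the approximately updated model gives a cheap signal of how far the update has drifted. A smaller `budget` triggers retraining more often, trading efficiency for effectiveness.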
