Cell-Free Latent Go-Explore

Paper and Code

Aug 31, 2022

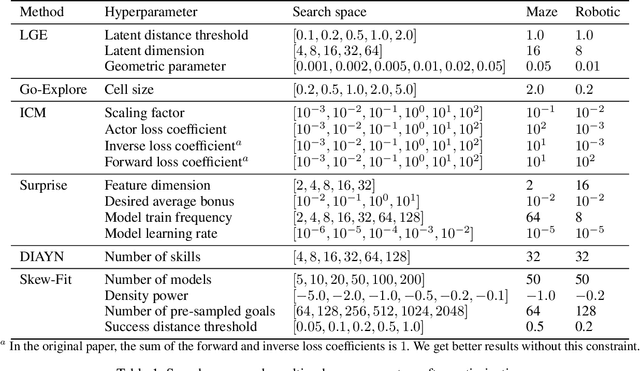

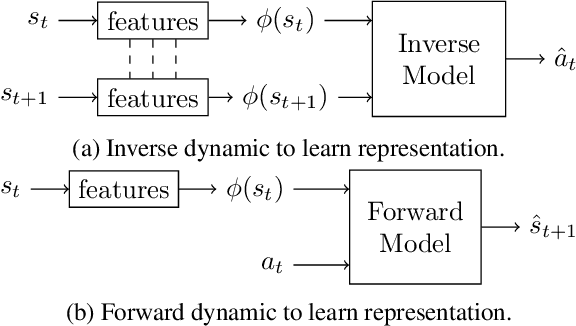

In this paper, we introduce Latent Go-Explore (LGE), a simple and general approach based on the Go-Explore paradigm for exploration in reinforcement learning (RL). Go-Explore was initially introduced with a strong requirement: domain knowledge is needed to partition the state space into cells. However, in most real-world scenarios, deriving domain knowledge from raw observations is complex and tedious. If the cell partitioning is not informative enough, Go-Explore can completely fail to explore the environment. We argue that the Go-Explore approach can be generalized to any environment, without domain knowledge and without cells, by exploiting a learned latent representation. LGE can be flexibly combined with any strategy for learning a latent representation. We show that LGE, although simpler than Go-Explore, is more robust and outperforms all state-of-the-art algorithms in terms of pure exploration on multiple hard-exploration environments. The LGE implementation is available as open source at https://github.com/qgallouedec/lge.
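To make the cell-free idea concrete, the following is a minimal sketch of how a goal could be chosen from an archive of visited states using only latent vectors, with no cell partitioning. It is an illustration under stated assumptions, not the code from the LGE repository: the function names (`encode`, `knn_distance`, `sample_goal`), the fixed linear map standing in for a learned encoder, and the k-nearest-neighbour distance used as a proxy for low density are all hypothetical choices made for this example.

```python
import math
import random


def encode(observation, weights):
    """Hypothetical stand-in for a learned encoder: a fixed linear map."""
    return [sum(w * x for w, x in zip(row, observation)) for row in weights]


def knn_distance(latents, index, k):
    """Distance from latents[index] to its k-th nearest neighbour in the archive."""
    distances = sorted(
        math.dist(latents[index], other)
        for j, other in enumerate(latents)
        if j != index
    )
    # Fall back to the farthest neighbour if the archive has fewer than k others.
    return distances[min(k, len(distances)) - 1]


def sample_goal(latents, k=3, rng=random):
    """Return an archive index, favouring latents in sparsely visited regions."""
    if len(latents) < 2:
        return 0  # Nothing to compare against yet.
    # Assumption: a large k-NN distance signals low local density, so it is
    # used directly as the sampling weight. The small constant avoids an
    # all-zero weight vector when every latent is identical.
    weights = [knn_distance(latents, i, k) + 1e-8 for i in range(len(latents))]
    return rng.choices(range(len(latents)), weights=weights, k=1)[0]


# Usage: four latents clustered near the origin and one isolated latent.
archive = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [0.1, 0.1], [5.0, 5.0]]
goal_index = sample_goal(archive, k=3, rng=random.Random(0))
```

In this sketch the isolated latent receives a much larger weight than the clustered ones, so it is selected as the goal far more often, which mirrors the Go-Explore intuition of returning to rarely visited regions before exploring further.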
