Capturing the objects of vision with neural networks

Paper and Code

Sep 07, 2021

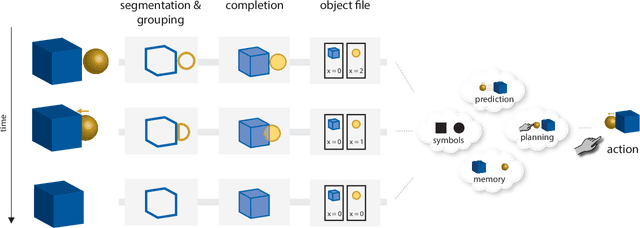

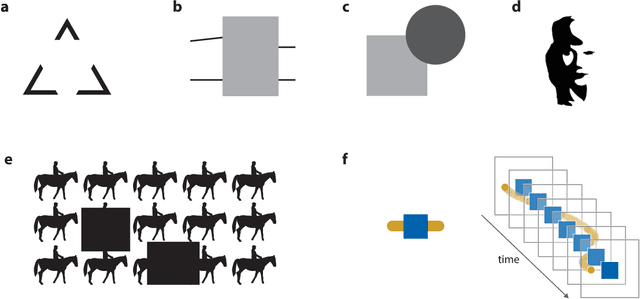

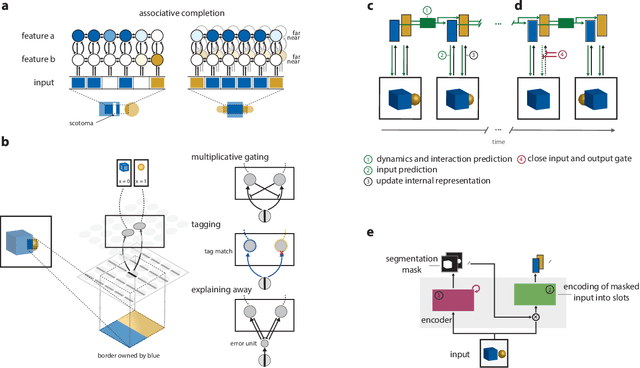

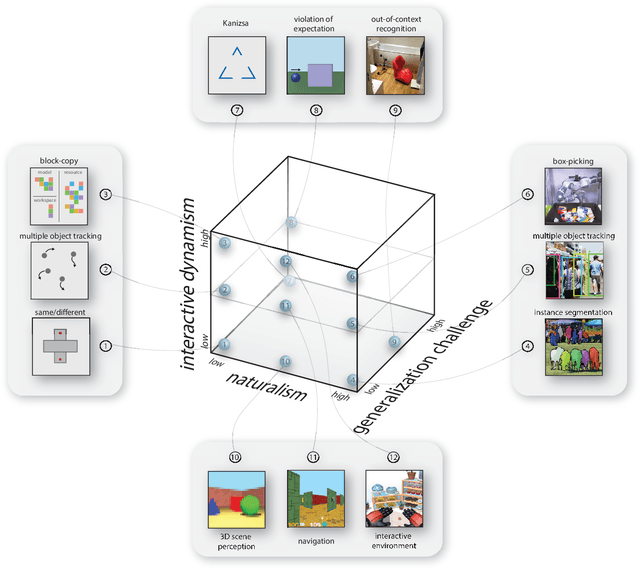

Human visual perception carves a scene at its physical joints, decomposing the world into objects, which are selectively attended, tracked, and predicted as we engage our surroundings. Object representations emancipate perception from the sensory input, enabling us to keep in mind that which is out of sight and to use perceptual content as a basis for action and symbolic cognition. Human behavioral studies have documented how object representations emerge through grouping, amodal completion, proto-objects, and object files. Deep neural network (DNN) models of visual object recognition, by contrast, remain largely tethered to the sensory input, despite achieving human-level performance at labeling objects. Here, we review related work in both fields and examine how these fields can help each other. The cognitive literature provides a starting point for the development of new experimental tasks that reveal mechanisms of human object perception and serve as benchmarks driving development of deep neural network models that will put the object into object recognition.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge