Captioning Images with Novel Objects via Online Vocabulary Expansion


Mar 06, 2020

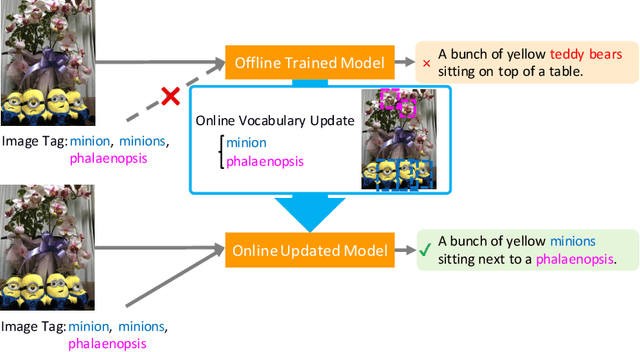

In this study, we introduce a low-cost method for generating descriptions of images that contain novel objects. Constructing a model that can describe images with novel objects is generally costly for two reasons: (1) a large amount of data must be collected for each category, and (2) the entire system must be retrained. Humans who see only a few examples of a novel object can estimate its properties by associating its appearance with known objects. Accordingly, we propose a method that describes images with novel objects without retraining, using word embeddings of the objects estimated from only a small number of image features of those objects. The method can be integrated with general image-captioning models. The experimental results show the effectiveness of our approach.
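The general idea of expanding a vocabulary without retraining can be illustrated with a minimal sketch. It is not the paper's implementation, since the abstract does not specify how the embedding is estimated. The sketch assumes, purely for illustration, that a fixed linear map (`visual_to_word`, hypothetical) projects the mean of a few image features into the word-embedding space, and that the result is appended to the existing embedding table. All names and toy values below are assumptions.

```python
import math


def mean_vector(vectors):
    """Average a list of equal-length feature vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def project(matrix, vector):
    """Apply a linear map, given as a list of rows, to a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def expand_vocabulary(vocab, embeddings, word, image_features, visual_to_word):
    """Return a new vocabulary and embedding table with `word` appended.

    The new embedding is estimated from a handful of image features;
    the existing entries are left untouched, so nothing is retrained.
    """
    estimated = project(visual_to_word, mean_vector(image_features))
    return vocab + [word], embeddings + [estimated]


def nearest_known_word(vocab, embeddings, query):
    """Find the known word whose embedding is most similar to `query`."""
    scores = [cosine(embedding, query) for embedding in embeddings]
    return vocab[scores.index(max(scores))]


# Toy data (hypothetical): 2-d word embeddings and 3-d image features.
vocab = ["dog", "car"]
embeddings = [[1.0, 0.0], [0.0, 1.0]]
visual_to_word = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # assumed, fixed map
novel_features = [[0.9, 0.1, 0.0], [0.7, 0.3, 0.0]]  # two images of the novel object

new_vocab, new_embeddings = expand_vocabulary(
    vocab, embeddings, "zebra", novel_features, visual_to_word
)
closest = nearest_known_word(vocab, embeddings, new_embeddings[-1])
```

In this toy setup the estimated embedding for the novel word lands closest to a known word with a similar appearance, which mirrors the intuition in the abstract of associating a novel object with known ones. A captioning model that scores output words against the embedding table could then use the appended row directly.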
