BRIEF: Backward Reduction of CNNs with Information Flow Analysis


Nov 01, 2018

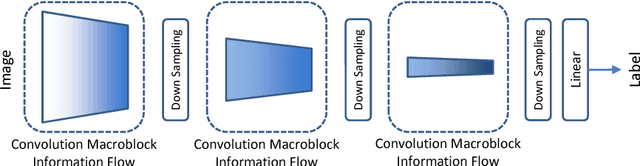

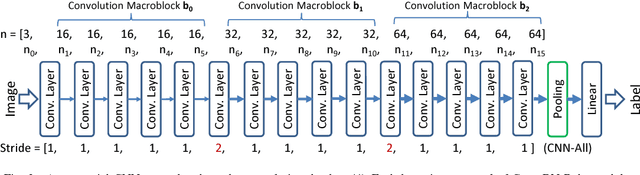

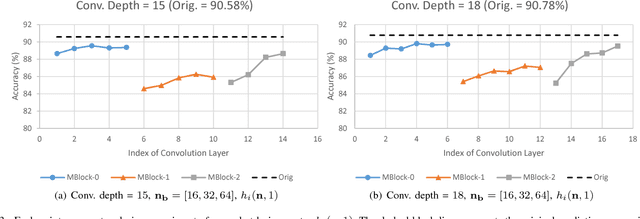

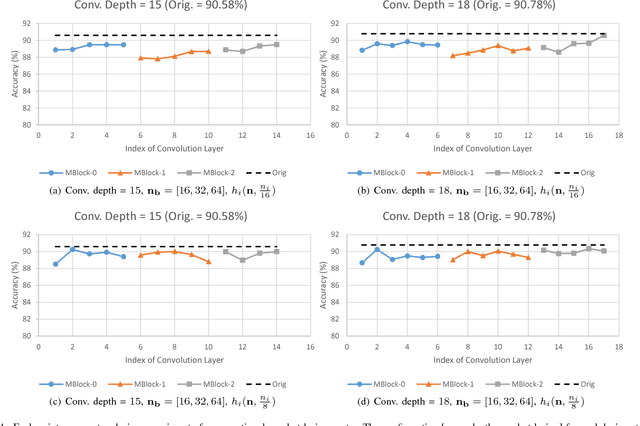

This paper proposes BRIEF, a backward reduction algorithm that explores compact CNN model designs from an information-flow perspective. By considering a network's dynamic behavior, the algorithm can remove a substantial number of non-zero weight parameters (redundant neural channels), which traditional model-compaction techniques cannot achieve. With the aid of our proposed algorithm, we achieve a significant model reduction of 32.3% on ResNet-34 at ImageNet scale, roughly three times the previous result (10.8%). Even for highly optimized models such as SqueezeNet and MobileNet, we achieve an additional 10.81% and 37.56% reduction, respectively, with negligible performance degradation.

* IEEE Artificial Intelligence and Virtual Reality (IEEE AIVR) 2018
