Bone Structures Extraction and Enhancement in Chest Radiographs via CNN Trained on Synthetic Data

Paper and Code

Mar 20, 2020

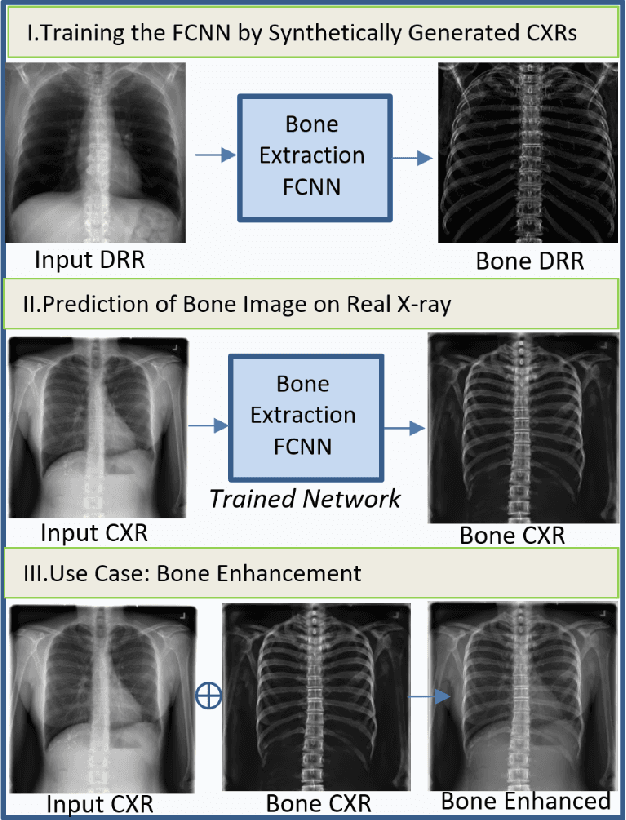

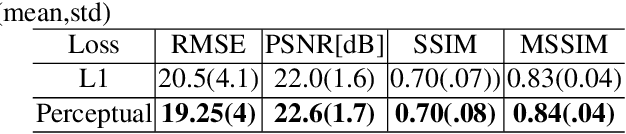

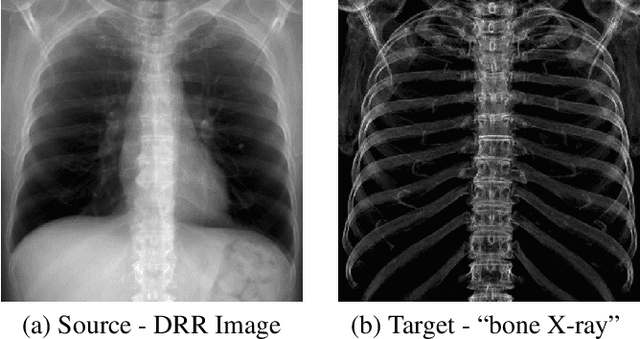

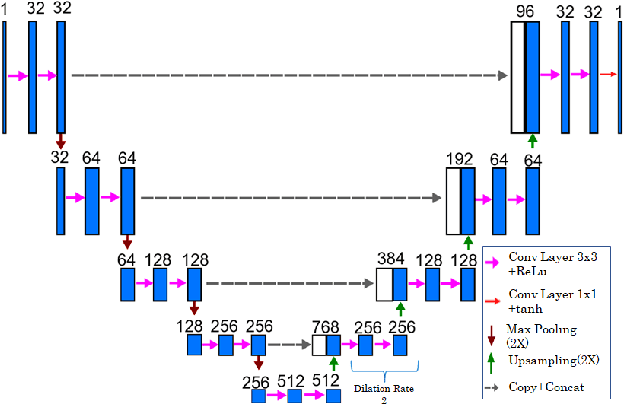

In this paper, we present a deep learning-based image processing technique for extraction of bone structures in chest radiographs using a U-Net FCNN. The U-Net was trained to accomplish the task in a fully supervised setting. To create the training image pairs, we employed simulated X-Ray or Digitally Reconstructed Radiographs (DRR), derived from 664 CT scans belonging to the LIDC-IDRI dataset. Using HU based segmentation of bone structures in the CT domain, a synthetic 2D "Bone x-ray" DRR is produced and used for training the network. For the reconstruction loss, we utilize two loss functions- L1 Loss and perceptual loss. Once the bone structures are extracted, the original image can be enhanced by fusing the original input x-ray and the synthesized "Bone X-ray". We show that our enhancement technique is applicable to real x-ray data, and display our results on the NIH Chest X-Ray-14 dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge