Biasing & Debiasing based Approach Towards Fair Knowledge Transfer for Equitable Skin Analysis


May 16, 2024

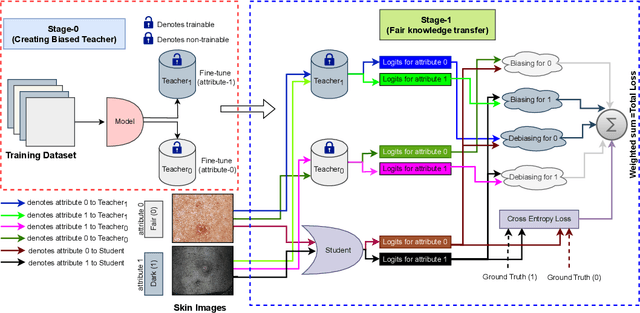

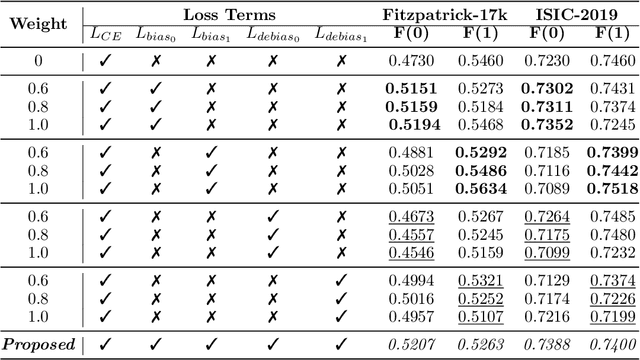

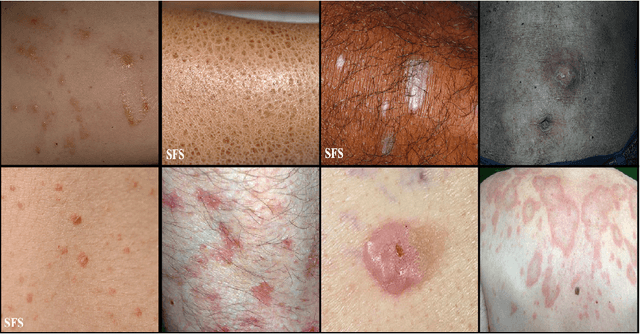

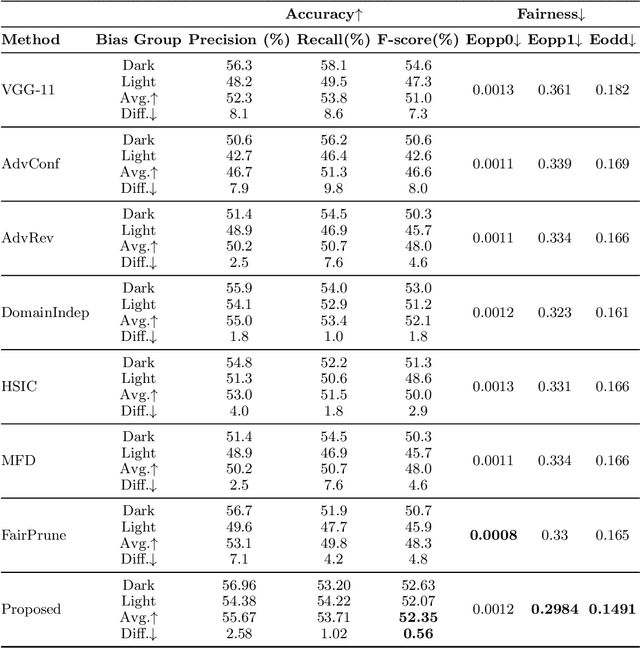

Deep learning models, particularly Convolutional Neural Networks (CNNs), have demonstrated exceptional performance in diagnosing skin diseases, often outperforming dermatologists. However, they have also exhibited biases linked to specific demographic traits, notably skin tone and gender, which raises fairness concerns and limits their widespread deployment. Researchers are actively working to ensure fairness in AI-based solutions, but existing methods incur an accuracy loss when striving for fairness. To solve this issue, we propose an approach based on 'two-biased teachers' (i.e., teachers biased on different sensitive attributes) that transfers fair knowledge into the student network. Our approach mitigates biases present in the student network without harming its predictive accuracy. In fact, in most cases, it improves on the accuracy of the baseline model. To achieve this goal, we developed a weighted loss function comprising biasing and debiasing loss terms. Our approach surpasses available state-of-the-art approaches in fairness while also improving accuracy. It has been evaluated and validated on two dermatology datasets using standard accuracy and fairness evaluation measures. We will make the source code publicly available to foster reproducibility and future research.
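
The abstract does not give the form of the weighted loss, so the following is only a minimal sketch of how a biasing term and a debiasing term might be combined into a single objective. The function name `weighted_loss`, the weight names `w_bias` and `w_debias`, and the plain weighted sum are all assumptions made for illustration; they are not taken from the paper, and how each term is computed is deliberately left out.

```python
def weighted_loss(biasing_loss, debiasing_loss, w_bias, w_debias):
    """Combine two already-computed scalar loss terms into one objective.

    Hypothetical sketch: the abstract only states that the loss is a weighted
    combination of biasing and debiasing terms. The linear form and the
    parameter names used here are assumptions, not the authors' definition.
    """
    if w_bias < 0 or w_debias < 0:
        # Negative weights would flip the direction of a term, so reject them.
        raise ValueError("loss weights must be non-negative")
    return w_bias * biasing_loss + w_debias * debiasing_loss


# Illustrative values only; they do not come from the paper.
total = weighted_loss(biasing_loss=1.0, debiasing_loss=2.0, w_bias=0.25, w_debias=0.75)
print(total)
```

In a sketch like this, the two weights control how strongly each term influences training, which is one way the trade-off between accuracy and fairness described above could be tuned.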
