Bias-Variance Reduced Local SGD for Less Heterogeneous Federated Learning


Feb 05, 2021

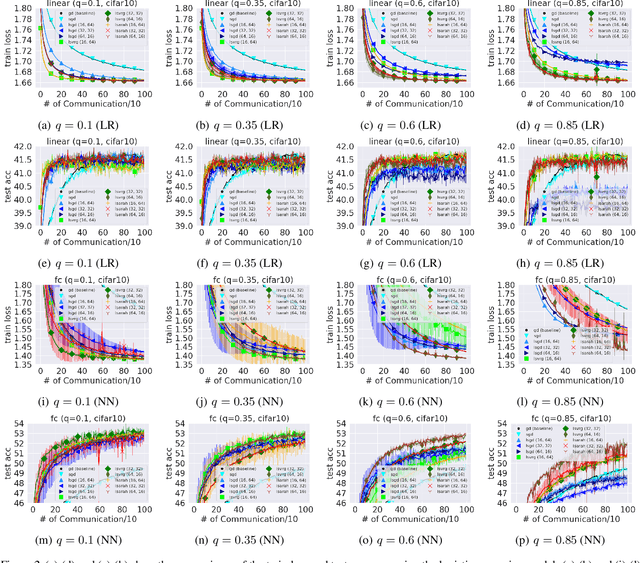

Federated learning is an important scenario in distributed learning, in which the aim is to learn from heterogeneous local datasets efficiently in terms of communication and computational cost. In this paper, we study new local algorithms called Bias-Variance Reduced Local SGD (BVR-L-SGD) for nonconvex federated learning. One of the novelties of this paper is the analysis of our bias- and variance-reduced local gradient estimators, which fully exploits the small second-order heterogeneity of the local objectives and suggests randomly picking one of the local models, rather than averaging them, when the workers are synchronized. Under small heterogeneity of the local objectives, we show that our methods achieve smaller communication complexity than both previous non-local and local methods for general nonconvex objectives. Furthermore, we compare the total execution time, that is, the sum of the total communication time and the total computational time per worker, and show that our methods are superior to existing methods when the heterogeneity is small and a single communication takes longer than a single stochastic gradient computation. Numerical results are provided to verify our theoretical findings and give empirical evidence of the superiority of our algorithms.
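The two ideas named in the abstract, a bias-corrected local gradient and synchronization by randomly picking one local model, can be illustrated on a toy problem. The following is a minimal sketch under strong assumptions and is not the paper's BVR-L-SGD: each worker holds a hypothetical scalar quadratic objective `0.5 * a * (x - b)**2`, gradients are exact rather than stochastic, and the bias correction is a generic SVRG-style control variate. All names (`local_grad`, `run_rounds`, `curvatures`, `centers`) are illustrative.

```python
import random


def local_grad(a, b, x):
    """Gradient of the assumed toy local objective 0.5 * a * (x - b)**2."""
    return a * (x - b)


def run_rounds(curvatures, centers, x0=0.0, rounds=20,
               local_steps=10, lr=0.1, seed=0):
    """Toy local gradient descent with a bias-corrected local gradient.

    Assumption: an SVRG-style control variate stands in for the paper's
    estimator. Each round uses one gradient exchange, then local steps,
    then picks one local model at random instead of averaging.
    """
    rng = random.Random(seed)
    num_workers = len(curvatures)
    x_sync = x0
    for _ in range(rounds):
        # Communication: average the local gradients at the shared point.
        global_grad = sum(
            local_grad(a, b, x_sync) for a, b in zip(curvatures, centers)
        ) / num_workers
        local_models = []
        for a, b in zip(curvatures, centers):
            x = x_sync
            for _ in range(local_steps):
                # Local gradient, shifted so that it matches the global
                # gradient at x_sync (removes the heterogeneity bias there).
                g = local_grad(a, b, x) - local_grad(a, b, x_sync) + global_grad
                x -= lr * g
            local_models.append(x)
        # Synchronization: randomly pick one local model, no averaging.
        x_sync = rng.choice(local_models)
    return x_sync


if __name__ == "__main__":
    # Similar curvatures model small second-order heterogeneity.
    print(run_rounds([1.0, 1.2, 0.8], [-1.0, 0.0, 4.0]))
```

In this toy setting, when all curvatures are equal the corrected local gradient coincides with the global gradient, so every worker follows the same trajectory and the random pick loses nothing. When the curvatures differ only slightly, the local trajectories stay close to one another, which is the regime the abstract describes as small second-order heterogeneity.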
