Beyond Pruning Criteria: The Dominant Role of Fine-Tuning and Adaptive Ratios in Neural Network Robustness


Oct 19, 2024

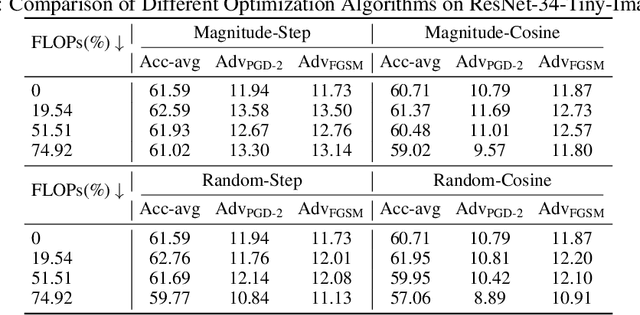

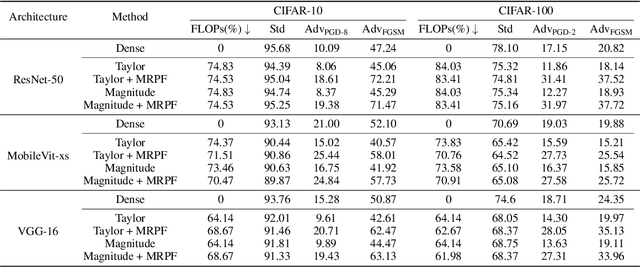

Deep neural networks (DNNs) excel at tasks such as image recognition and natural language processing, but their growing complexity complicates deployment in resource-constrained environments and increases their susceptibility to adversarial attacks. Traditional pruning methods reduce model size, but they often weaken the network's ability to withstand subtle perturbations. This paper challenges the conventional emphasis on weight-importance scoring as the primary determinant of a pruned network's performance. Through extensive experiments on CIFAR and Tiny-ImageNet across several network architectures, we show that effective fine-tuning plays the dominant role in enhancing both accuracy and adversarial robustness, often outweighing the choice of pruning criterion. To preserve robustness under pruning, we further introduce Module Robust Sensitivity, a novel metric that adaptively sets the pruning ratio of each layer according to its sensitivity to adversarial perturbations. Integrating this metric into the pruning process yields a stable algorithm that maintains accuracy and robustness simultaneously. Experimental results indicate that our approach enables the practical deployment of neural networks that are both more robust and more efficient.
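The abstract does not give the formula for Module Robust Sensitivity, so the following is only a minimal sketch of the general idea of sensitivity-adaptive pruning ratios, built on our own assumptions. We assume (1) a layer's sensitivity is the relative increase in adversarial loss when only that layer is pruned at a fixed probe ratio, and (2) pruning ratios are allocated inversely to sensitivity, capped at `max_ratio`, so that the parameter-weighted average matches a global target. The functions `robust_sensitivity` and `allocate_ratios` are hypothetical names, not the authors' implementation.

```python
from typing import List, Sequence


def robust_sensitivity(base_adv_loss: float, probe_adv_losses: Sequence[float]) -> List[float]:
    """Hypothetical per-layer sensitivity (an assumption, not the paper's definition):
    relative increase in adversarial loss when only one layer is pruned at a probe ratio."""
    eps = 1e-8
    # Clamp to a small positive value so that inverse-sensitivity weights stay finite.
    return [max((p - base_adv_loss) / (base_adv_loss + eps), eps) for p in probe_adv_losses]


def allocate_ratios(
    sens: Sequence[float],
    param_counts: Sequence[int],
    target: float,
    max_ratio: float = 0.95,
    iters: int = 100,
) -> List[float]:
    """Assign each layer a ratio proportional to 1/sensitivity, capped at max_ratio,
    with the scale found by bisection so the parameter-weighted mean equals target."""
    if not 0.0 <= target <= max_ratio:
        raise ValueError("target must lie in [0, max_ratio]")
    total = sum(param_counts)

    def pruned_fraction(scale: float) -> float:
        # Fraction of all parameters pruned for a given scale; monotone in scale.
        return sum(n * min(max_ratio, scale / s) for n, s in zip(param_counts, sens)) / total

    # At scale = max_ratio * max(sens), every layer is capped, so the fraction is max_ratio >= target.
    lo, hi = 0.0, max_ratio * max(sens)
    for _ in range(iters):
        mid = (lo + hi) / 2
        if pruned_fraction(mid) < target:
            lo = mid
        else:
            hi = mid
    return [min(max_ratio, hi / s) for s in sens]


if __name__ == "__main__":
    # Toy numbers: the second layer is the most sensitive, so it should be pruned least.
    sens = robust_sensitivity(1.0, [1.1, 1.5, 1.05])
    ratios = allocate_ratios(sens, [1000, 1000, 1000], target=0.5)
    print([round(r, 3) for r in ratios])
```

The bisection keeps the global sparsity budget exact even when some layers hit the cap, which a one-shot proportional rescaling would not. In practice the probe losses would come from evaluating a pruned copy of the model on adversarial examples (for instance, generated with PGD), followed by the fine-tuning step the abstract identifies as dominant.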
