BERT got a Date: Introducing Transformers to Temporal Tagging

Paper and Code

Oct 04, 2021

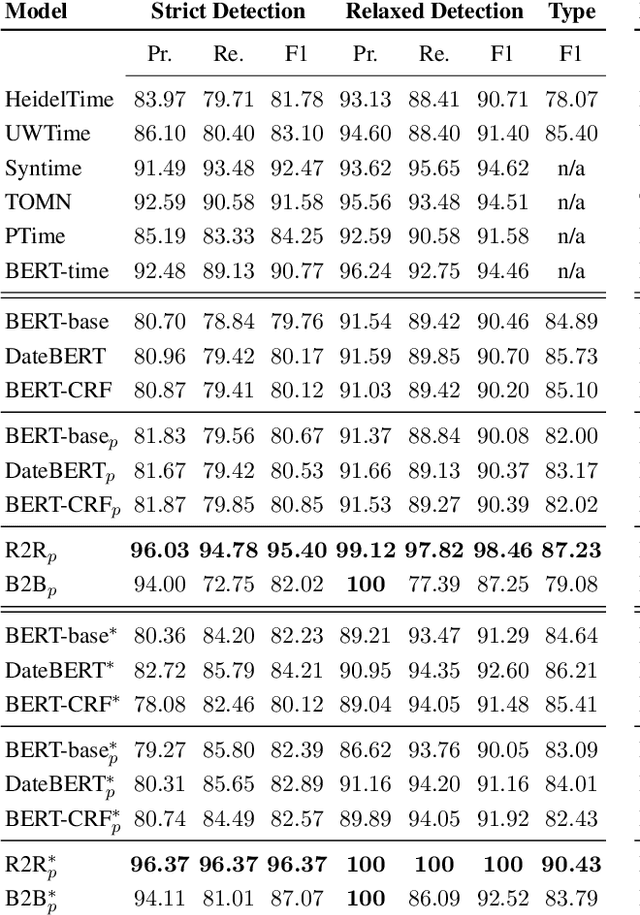

Temporal expressions in text play a significant role in language understanding and correctly identifying them is fundamental to various retrieval and natural language processing systems. Previous works have slowly shifted from rule-based to neural architectures, capable of tagging expressions with higher accuracy. However, neural models can not yet distinguish between different expression types at the same level as their rule-based counterparts. In this work, we aim to identify the most suitable transformer architecture for joint temporal tagging and type classification, as well as, investigating the effect of semi-supervised training on the performance of these systems. Based on our study of token classification variants and encoder-decoder architectures, we present a transformer encoder-decoder model using the RoBERTa language model as our best performing system. By supplementing training resources with weakly labeled data from rule-based systems, our model surpasses previous works in temporal tagging and type classification, especially on rare classes. Our code and pre-trained experiments are available at: https://github.com/satya77/Transformer_Temporal_Tagger

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge