Bayesian Online Meta-Learning with Laplace Approximation

Apr 30, 2020

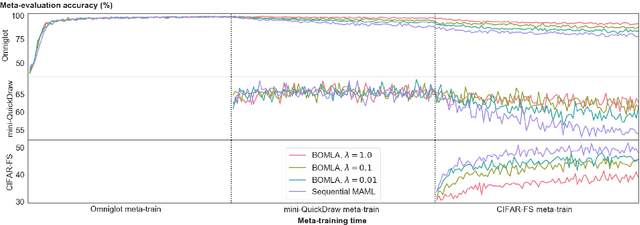

Neural networks are known to suffer from catastrophic forgetting when trained on sequential datasets. While there have been numerous attempts to solve this problem for large-scale supervised classification, little has been done to overcome catastrophic forgetting for few-shot classification problems. We demonstrate that the popular gradient-based few-shot meta-learning algorithm Model-Agnostic Meta-Learning (MAML) indeed suffers from catastrophic forgetting and introduce a Bayesian online meta-learning framework that tackles this problem. Our framework incorporates MAML into a Bayesian online learning algorithm with Laplace approximation. This framework enables few-shot classification on a range of sequentially arriving datasets with a single meta-learned model. The experimental evaluations demonstrate that our framework can effectively prevent forgetting in various few-shot classification settings compared to applying MAML sequentially.
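
As a rough illustration of what "Bayesian online learning with Laplace approximation" refers to, the generic recursion can be written as below. This is a sketch of the standard textbook form, not the paper's exact objective. The symbols are assumptions introduced here: $\theta$ for the (meta-)parameters, $\mathcal{D}_t$ for the $t$-th arriving dataset, $\mu_t$ and $\Lambda_t$ for the mean and precision of the Gaussian posterior approximation, and $H_t$ for the Hessian term. In the meta-learning setting, the likelihood $p(\mathcal{D}_t \mid \theta)$ would presumably be evaluated after MAML's inner-loop adaptation.

```latex
% Exact Bayesian online update: the previous posterior acts as the new prior.
p(\theta \mid \mathcal{D}_{1:t}) \;\propto\; p(\mathcal{D}_t \mid \theta)\, p(\theta \mid \mathcal{D}_{1:t-1})

% Laplace approximation: replace each posterior with a Gaussian at its mode.
p(\theta \mid \mathcal{D}_{1:t}) \;\approx\; \mathcal{N}\!\left(\theta;\ \mu_t,\ \Lambda_t^{-1}\right)

% Mode: maximise the new log-likelihood plus a quadratic penalty from the previous Gaussian.
\mu_t = \arg\max_{\theta}\ \Big[ \log p(\mathcal{D}_t \mid \theta)
        - \tfrac{1}{2} (\theta - \mu_{t-1})^{\top} \Lambda_{t-1} (\theta - \mu_{t-1}) \Big]

% Precision: accumulate the curvature of the negative log-likelihood at the new mode.
\Lambda_t = \Lambda_{t-1} + H_t,
\qquad
H_t = -\nabla_{\theta}^{2} \log p(\mathcal{D}_t \mid \theta)\,\Big|_{\theta = \mu_t}
```

The quadratic penalty is what discourages forgetting: parameters that earlier datasets constrained tightly (large entries in $\Lambda_{t-1}$) are expensive to move when fitting a new dataset.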
