Bayesian Kernel Regression for Functional Data


Mar 17, 2025

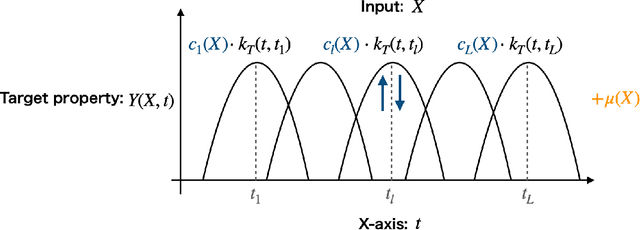

In supervised learning, the output variable to be predicted is often represented as a function, such as a spectrum or probability distribution. Despite its importance, functional output regression remains relatively unexplored. In this study, we propose a novel functional output regression model based on kernel methods. Unlike conventional approaches that independently train regressors with scalar outputs for each measurement point of the output function, our method leverages the covariance structure within the function values, akin to multitask learning, leading to enhanced learning efficiency and improved prediction accuracy. Compared with existing nonlinear function-on-scalar models in statistical functional data analysis, our model effectively handles high-dimensional nonlinearity while maintaining a simple model structure. Furthermore, the fully kernel-based formulation allows the model to be expressed within the framework of a reproducing kernel Hilbert space (RKHS), providing an analytic form for parameter estimation and a solid foundation for further theoretical analysis. The proposed model delivers a functional output predictive distribution derived analytically from a Bayesian perspective, enabling the quantification of uncertainty in the predicted function. We demonstrate the model's enhanced prediction performance through experiments on artificial datasets and density-of-states prediction tasks in materials science.
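To make the idea of sharing covariance structure across the measurement points of the output function concrete, here is a minimal sketch. It is not the model proposed in the paper: it is a standard Gaussian process regression with a separable (Kronecker) kernel, one RBF kernel over the inputs and one over the output grid, chosen only because it also yields an analytic predictive mean and covariance for the whole output function. The function names (`rbf`, `fit_predict`), the length scales, the noise level, and the toy data are all assumptions made for illustration.

```python
import numpy as np


def rbf(a, b, length_scale):
    """Squared-exponential kernel between point sets a (p, d) and b (q, d)."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)


def fit_predict(X, Y, t, X_new, ls_x=1.0, ls_t=0.2, noise=1e-2):
    """Analytic GP posterior over output functions sampled on the grid t.

    X: (n, d) inputs, Y: (n, m) function values on grid t (m,),
    X_new: (q, d) query inputs. Hyperparameters are illustrative guesses.
    Returns posterior means (q, m) and latent-function covariances (q, m, m).
    """
    n, m = Y.shape
    Kx = rbf(X, X, ls_x)                    # similarity between inputs
    Kt = rbf(t[:, None], t[:, None], ls_t)  # covariance along the output grid
    # Separable prior: cov(f(x_i, t_j), f(x_k, t_l)) = Kx[i, k] * Kt[j, l].
    # The row-major flattening of Y matches the index order of np.kron(Kx, Kt).
    K = np.kron(Kx, Kt) + noise * np.eye(n * m)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, Y.ravel()))

    kx_new = rbf(X_new, X, ls_x)            # (q, n)
    means, covs = [], []
    for row in kx_new:
        k_star = np.kron(row[None, :], Kt)  # (m, n * m) cross-covariance
        v = np.linalg.solve(L, k_star.T)    # (n * m, m)
        means.append(k_star @ alpha)
        # Prior covariance at a query point is rbf(x*, x*) * Kt = Kt.
        covs.append(Kt - v.T @ v)
    return np.array(means), np.array(covs)


# Toy data (assumed): each input x shifts the phase of a sine curve over t.
X = np.linspace(-1.0, 1.0, 8)[:, None]
t = np.linspace(0.0, 1.0, 20)
Y = np.sin(2.0 * np.pi * t[None, :] + X)

X_new = np.array([[0.1], [0.5]])
mean, cov = fit_predict(X, Y, t, X_new)
std = np.sqrt(np.clip(np.diagonal(cov, axis1=1, axis2=2), 0.0, None))
```

Here `mean[i]` is the predicted function on the grid for `X_new[i]`, and `std[i]` gives a pointwise uncertainty band. The dense Kronecker matrix scales as (n·m)², so this form is only practical for small problems.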
