Bandwidth-efficient distributed neural network architectures with application to body sensor networks

Paper and Code

Oct 14, 2022

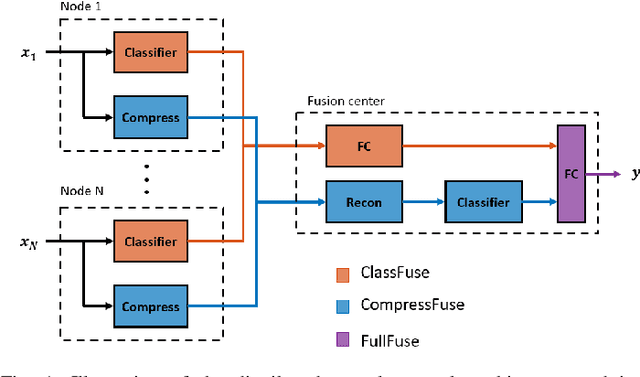

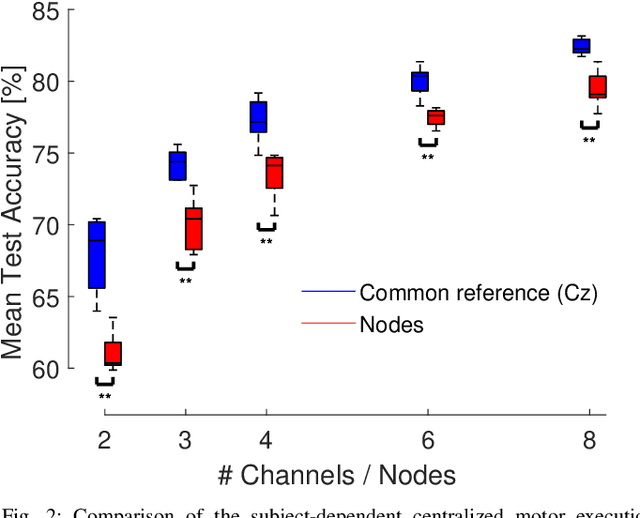

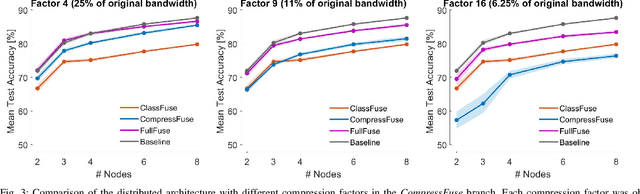

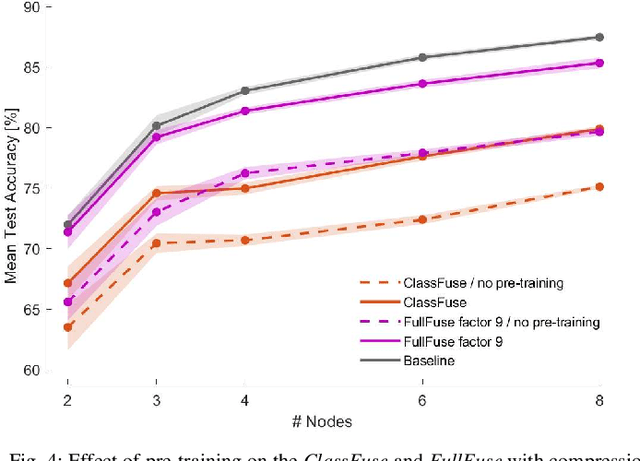

In this paper, we describe a conceptual methodology for designing distributed neural network architectures that perform efficient inference within sensor networks under communication bandwidth constraints. The sensor channels are distributed across multiple sensor devices, which have to exchange data over bandwidth-limited communication channels to solve, e.g., a classification task. Our design methodology starts from a user-defined centralized neural network and transforms it into a distributed architecture in which the channels are distributed over different nodes. The distributed network consists of two parallel branches whose outputs are fused at the fusion center. The first branch collects classification results from local, node-specific classifiers, while the second branch compresses each node's signal and then reconstructs the multi-channel time series for classification at the fusion center. We further improve bandwidth gains by dynamically activating the compression path only when the local classifications do not suffice. We validate this method on a motor execution task in an emulated EEG sensor network and analyze the resulting bandwidth-accuracy trade-offs. Our experiments show that the proposed framework reduces bandwidth by up to a factor of 20 with minimal loss (up to 2%) in classification accuracy compared to the centralized baseline on the demonstrated motor execution task. The proposed method offers a way to smoothly transform a centralized architecture into a distributed, bandwidth-efficient network amenable to low-power sensor networks. While the application focus of this paper is on wearable brain-computer interfaces, the proposed methodology can also be applied in other sensor-network-like applications.
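The dynamic activation of the compression path can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration, not the authors' implementation: the fusion rule (averaging per-node class probabilities), the confidence criterion (maximum fused probability against a threshold), and the names `fuse_local`, `gated_inference`, `confidence_threshold`, and `central_classifier` are all hypothetical. The abstract only states that the compression branch is activated when the local classifications do not suffice.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one node's class logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_local(node_probs: Sequence[Sequence[float]]) -> List[float]:
    """Average per-node class probabilities (assumed fusion rule)."""
    n_nodes = len(node_probs)
    n_classes = len(node_probs[0])
    return [sum(p[c] for p in node_probs) / n_nodes for c in range(n_classes)]


def gated_inference(
    node_logits: Sequence[Sequence[float]],
    confidence_threshold: float,
    central_classifier: Callable[[], int],
) -> Tuple[int, bool]:
    """Return (predicted_class, compression_path_used).

    The cheap branch fuses the local classifiers' outputs. Only when the
    fused confidence falls below the (hypothetical) threshold is the
    compression branch requested, represented here by a callback that
    stands in for "compress, transmit, reconstruct, classify".
    """
    fused = fuse_local([softmax(logits) for logits in node_logits])
    confidence = max(fused)
    if confidence >= confidence_threshold:
        return fused.index(confidence), False
    return central_classifier(), True


# Two nodes that agree strongly: the local branch suffices.
confident = gated_inference([[4.0, 0.0], [3.0, 0.0]], 0.9, lambda: 1)

# Two nodes that are unsure and disagree: the compression path is activated.
ambiguous = gated_inference([[0.1, 0.0], [0.0, 0.2]], 0.9, lambda: 1)
```

In this sketch, raising `confidence_threshold` activates the compression path more often, which would spend more bandwidth in exchange for relying less on the local classifiers; this is one simple way to picture the bandwidth-accuracy trade-off the abstract refers to.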
