AVR: Attention based Salient Visual Relationship Detection


Mar 16, 2020

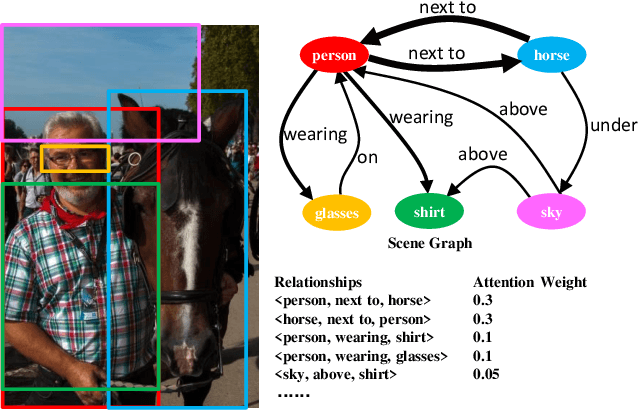

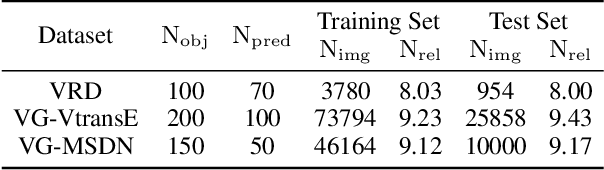

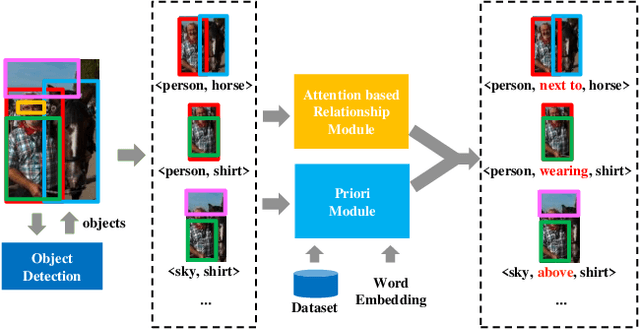

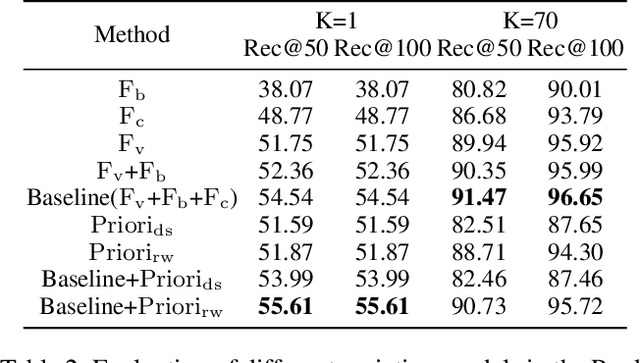

Visual relationship detection aims to locate objects in images and recognize the relationships between them. Traditional methods treat all observed relationships in an image equally, which leads to relatively poor detection performance on complex images containing many visual objects and varied relationships. To address this problem, we propose an attention-based model, AVR, that detects salient visual relationships using both the local and the global context of the relationships. Specifically, AVR recognizes relationships and measures the attention on them in the local context of an input image by fusing the visual features, semantic information, and spatial information of the relationships. AVR then uses this attention to assign larger saliency weights to important relationships for effective information filtering. Furthermore, AVR incorporates prior knowledge from the global context of image datasets to improve the precision of relationship prediction; this context is modeled as a heterogeneous graph, and the prior probability of relationships is measured with a random walk algorithm. Comprehensive experiments on several real-world image datasets demonstrate the effectiveness of AVR, and the results show that AVR significantly outperforms state-of-the-art visual relationship detection methods, by up to $87.5\%$ in terms of recall.
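The global-context idea can be illustrated with a minimal sketch. The abstract does not specify how the heterogeneous graph is built or which random walk variant is used, so everything below is an assumption made for illustration: the graph links object-category nodes to predicate nodes with co-occurrence counts as edge weights, the walk is a random walk with restart, and the names `build_graph`, `random_walk_with_restart`, and `predicate_prior`, along with the `restart` and `iters` parameters, are hypothetical rather than taken from AVR.

```python
from collections import defaultdict


def build_graph(triples):
    """Build an undirected weighted graph from (subject, predicate, object) triples.

    Assumption: two node types, ("obj", name) and ("pred", name); an edge weight
    counts how often an object category co-occurs with a predicate.
    """
    weights = defaultdict(lambda: defaultdict(float))
    for subj, pred, obj in triples:
        pred_node = ("pred", pred)
        for entity in (("obj", subj), ("obj", obj)):
            weights[entity][pred_node] += 1.0
            weights[pred_node][entity] += 1.0
    return weights


def random_walk_with_restart(weights, seeds, restart=0.3, iters=50):
    """Power iteration for a random walk that jumps back to the seeds with
    probability `restart`. Seeds are assumed to be nodes of the graph."""
    nodes = list(weights)
    start = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    score = dict(start)
    for _ in range(iters):
        nxt = {n: restart * start[n] for n in nodes}
        for n in nodes:
            total = sum(weights[n].values())
            for m, w in weights[n].items():
                # Move along each edge in proportion to its weight.
                nxt[m] += (1.0 - restart) * score[n] * w / total
        score = nxt
    return score


def predicate_prior(weights, subj, obj, restart=0.3, iters=50):
    """Normalize the walk scores over predicate nodes into a prior distribution."""
    seeds = {("obj", subj), ("obj", obj)}
    score = random_walk_with_restart(weights, seeds, restart, iters)
    preds = {n[1]: s for n, s in score.items() if n[0] == "pred"}
    z = sum(preds.values())
    return {p: s / z for p, s in preds.items()}


# Toy annotations, invented purely for this example.
triples = (
    [("person", "ride", "horse")] * 3
    + [("person", "next to", "horse")]
    + [("person", "wear", "hat")] * 2
)
graph = build_graph(triples)
prior = predicate_prior(graph, "person", "horse")
```

In this toy setting, `prior` ranks "ride" above "next to" for the pair (person, horse) because the pair co-occurs with "ride" more often. A detector could, for instance, combine such a prior with its per-image predicate scores, though how AVR performs that integration is not described in the abstract.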
