Average Margin Regularization for Classifiers

Paper and Code

Oct 14, 2018

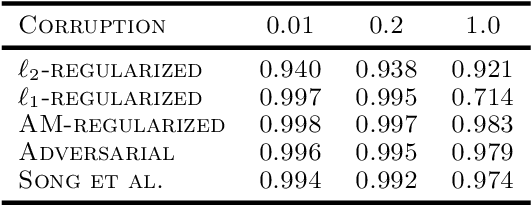

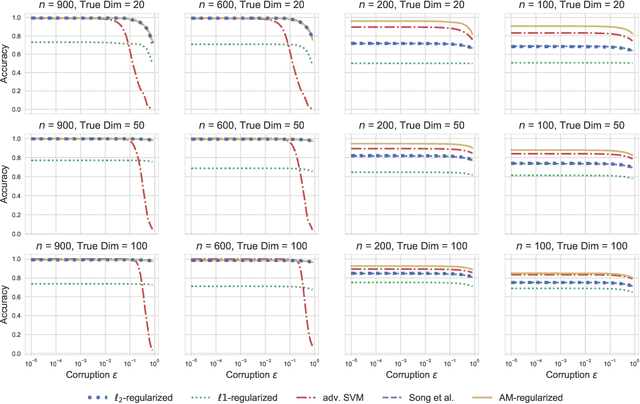

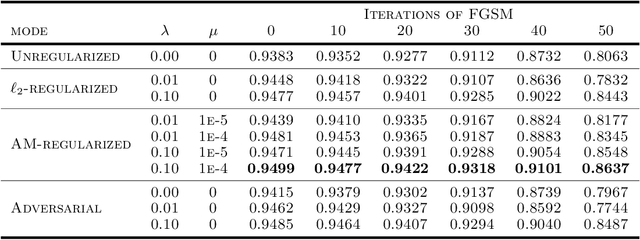

Adversarial robustness has become an important research topic given empirical demonstrations on the lack of robustness of deep neural networks. Unfortunately, recent theoretical results suggest that adversarial training induces a strict tradeoff between classification accuracy and adversarial robustness. In this paper, we propose and then study a new regularization for any margin classifier or deep neural network. We motivate this regularization by a novel generalization bound that shows a tradeoff in classifier accuracy between maximizing its margin and average margin. We thus call our approach an average margin (AM) regularization, and it consists of a linear term added to the objective. We theoretically show that for certain distributions AM regularization can both improve classifier accuracy and robustness to adversarial attacks. We conclude by using both synthetic and real data to empirically show that AM regularization can strictly improve both accuracy and robustness for support vector machine's (SVM's) and deep neural networks, relative to unregularized classifiers and adversarially trained classifiers.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge