Autonomous discovery of the goal space to learn a parameterized skill


May 19, 2018

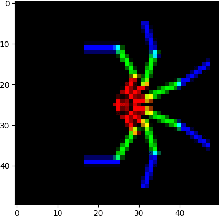

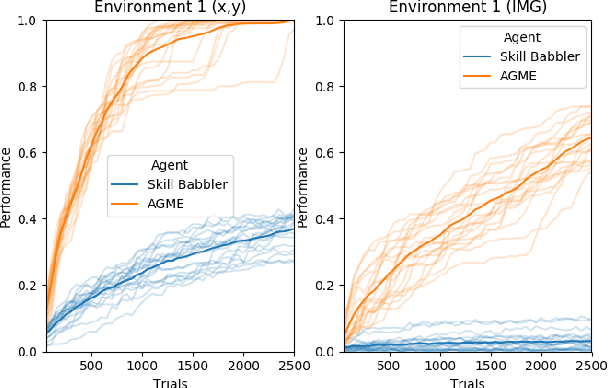

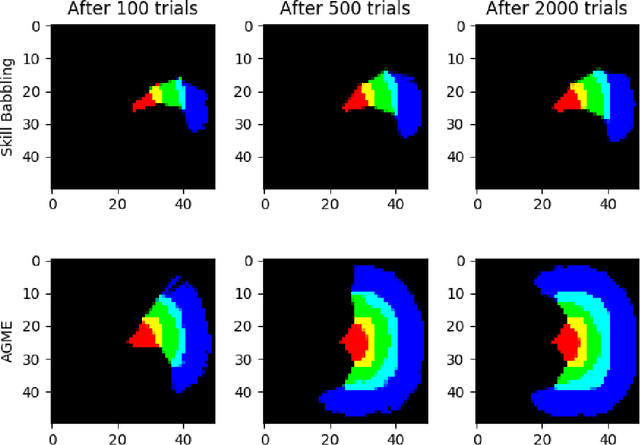

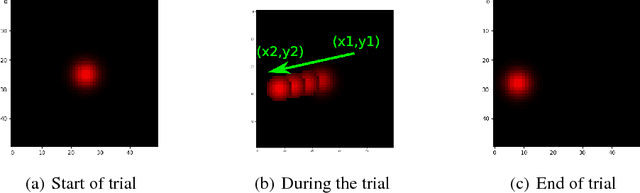

A parameterized skill is a mapping from goals (task parameters) to the policy parameters that accomplish them. Existing works in the literature show how a parameterized skill can be learned given a task space that defines all the achievable goals. In this work, we focus on tasks defined in terms of final states (goals), and we address the challenge in which the agent aims to autonomously acquire a parameterized skill to manipulate an initially unknown environment. In this case, the task space is not known a priori and the agent has to discover it autonomously. The agent may posit its whole sensory space (i.e., the space of all possible sensor readings) as the task space, since the achievable goals will certainly be a subset of this space. However, the achievable goals may occupy only a tiny subspace of the whole sensory space, so directly using the sensor space as the task space exposes the agent to the curse of dimensionality and makes existing autonomous skill acquisition algorithms inefficient. In this work, we present an algorithm that actively discovers the manifold of achievable goals within the sensor space. We validate the algorithm in several simulated scenarios where the agent's actions achieve different types of goals: moving a redundant arm, pushing an object, and changing the color of an object.
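To make the dimensionality argument concrete, the following is a minimal sketch, not the algorithm presented in the paper. It assumes a hypothetical three-link planar arm whose sensor vector is the end-effector position plus `n_extra` channels that the arm cannot influence. All names (`forward_kinematics`, `sensor_reading`, `reachable_fraction`), the link lengths, and the tolerance are illustrative assumptions. The sketch samples goals uniformly from the whole sensor space and estimates how many of them lie near any outcome the arm actually produced.

```python
import numpy as np


def forward_kinematics(joint_angles, link_lengths):
    """Return the end-effector (x, y) of a planar arm for each row of joint angles."""
    cumulative = np.cumsum(joint_angles, axis=1)
    x = np.sum(link_lengths * np.cos(cumulative), axis=1)
    y = np.sum(link_lengths * np.sin(cumulative), axis=1)
    return np.stack([x, y], axis=1)


def sensor_reading(joint_angles, link_lengths, n_extra):
    """Hypothetical sensor vector: end-effector position followed by
    n_extra channels that stay at zero whatever the arm does."""
    xy = forward_kinematics(joint_angles, link_lengths)
    extra = np.zeros((xy.shape[0], n_extra))
    return np.hstack([xy, extra])


def reachable_fraction(n_extra, n_policies=1000, n_goals=500, tol=0.2, seed=0):
    """Estimate the fraction of uniformly sampled sensor-space goals that lie
    within `tol` of at least one outcome produced by a random policy."""
    rng = np.random.default_rng(seed)
    link_lengths = np.array([0.4, 0.3, 0.3])  # assumed lengths, total reach 1.0
    angles = rng.uniform(-np.pi, np.pi, size=(n_policies, 3))
    outcomes = sensor_reading(angles, link_lengths, n_extra)

    # Goals drawn from the whole sensor space, each channel in [-1, 1].
    goals = rng.uniform(-1.0, 1.0, size=(n_goals, 2 + n_extra))

    # Distance from every goal to its nearest observed outcome.
    diffs = goals[:, None, :] - outcomes[None, :, :]
    nearest = np.linalg.norm(diffs, axis=2).min(axis=1)
    return float(np.mean(nearest < tol))


for n_extra in (0, 2, 6):
    frac = reachable_fraction(n_extra)
    print(f"sensor dims = {2 + n_extra}: reachable fraction ~ {frac:.3f}")
```

Under these assumptions, the estimated fraction drops sharply as uncontrollable channels are added, which illustrates why sampling goals from the raw sensor space wastes most exploration effort on goals that can never be reached.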
