Automatic Tuning of Tensorflow's CPU Backend using Gradient-Free Optimization Algorithms

Paper and Code

Sep 13, 2021

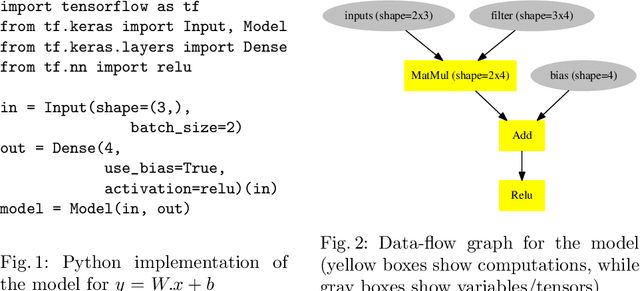

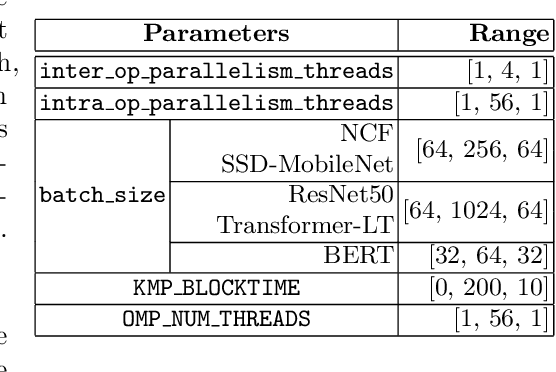

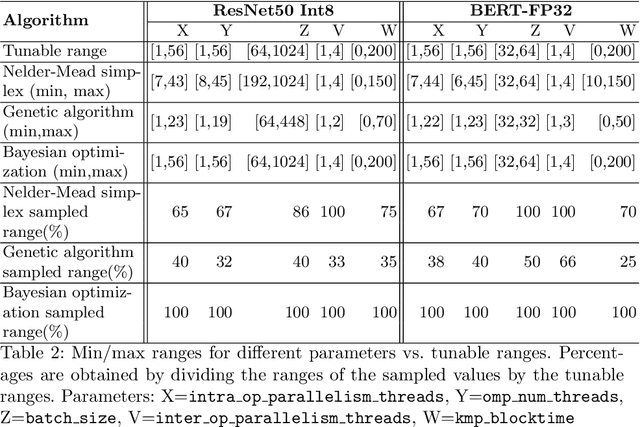

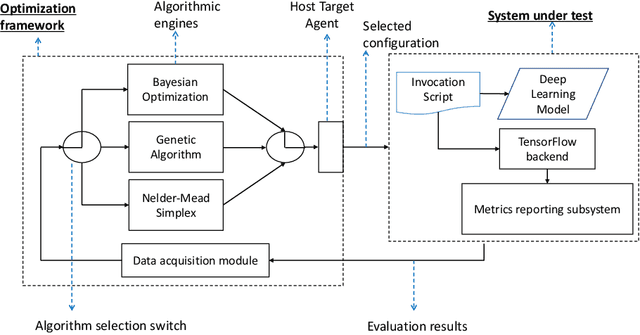

Modern deep learning (DL) applications are built using DL libraries and frameworks such as TensorFlow and PyTorch. These frameworks have complex parameters, and tuning them to obtain good training and inference performance is challenging for typical users, such as DL developers and data scientists. Manual tuning requires deep knowledge of both the user-controllable parameters of DL frameworks and the underlying hardware. It is a slow and tedious process, and it typically delivers sub-optimal solutions. In this paper, we treat the problem of tuning the parameters of DL frameworks to improve training and inference performance as a black-box optimization problem. We then investigate the applicability and effectiveness of Bayesian optimization (BO), a genetic algorithm (GA), and the Nelder-Mead simplex (NMS) method for tuning the parameters of TensorFlow's CPU backend. While prior work has already investigated the use of the Nelder-Mead simplex for a similar problem, it does not provide insights into the applicability of other, more popular algorithms. To that end, we provide a systematic comparative analysis of all three algorithms in tuning TensorFlow's CPU backend on a variety of DL models. Our findings reveal that Bayesian optimization performs best on the majority of models. There are, however, cases where it does not deliver the best results.
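To make the black-box framing concrete, the following is a minimal sketch of a gradient-free search, here a small elitist genetic algorithm, over an integer parameter space. It is an illustration under stated assumptions, not the paper's implementation: the abstract does not list the tuned parameters, so the names in `SEARCH_SPACE`, their ranges, and the `synthetic_cost` function are all hypothetical stand-ins. In a real setup the cost function would presumably run the model with the given configuration and return a measured time.

```python
import random

# Hypothetical integer search space: (name, low, high), bounds inclusive.
# The parameter names and ranges are assumptions made for illustration only.
SEARCH_SPACE = [
    ("intra_op_threads", 1, 56),
    ("inter_op_threads", 1, 4),
    ("batch_size", 32, 512),
]


def synthetic_cost(cfg):
    """Stand-in for a measured run time (lower is better).

    A real objective would launch a training or inference run with `cfg`
    and return its measured time; this quadratic bowl is an assumption.
    """
    intra, inter, batch = cfg
    return (intra - 28) ** 2 + 50 * (inter - 2) ** 2 + ((batch - 256) / 16) ** 2


def random_config(rng):
    """Sample one configuration uniformly within the bounds."""
    return tuple(rng.randint(lo, hi) for _, lo, hi in SEARCH_SPACE)


def mutate(cfg, rng, rate=0.3):
    """Perturb each parameter with probability `rate`, clamped to its bounds."""
    out = []
    for value, (_, lo, hi) in zip(cfg, SEARCH_SPACE):
        if rng.random() < rate:
            step = max(1, (hi - lo) // 8)
            value = min(hi, max(lo, value + rng.randint(-step, step)))
        out.append(value)
    return tuple(out)


def crossover(a, b, rng):
    """Uniform crossover: take each parameter from one parent at random."""
    return tuple(rng.choice(pair) for pair in zip(a, b))


def genetic_search(cost, generations=40, pop_size=20, elite=4, seed=0):
    """Elitist GA that only queries `cost`; no gradients are needed."""
    rng = random.Random(seed)
    population = [random_config(rng) for _ in range(pop_size)]
    history = []  # best cost seen at the start of each generation
    for _ in range(generations):
        population.sort(key=cost)
        history.append(cost(population[0]))
        parents = population[:elite]  # the best configurations survive unchanged
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - elite)
        ]
        population = parents + children
    best = min(population, key=cost)
    return best, cost(best), history


best_cfg, best_cost, history = genetic_search(synthetic_cost)
print(dict(zip((name for name, _, _ in SEARCH_SPACE), best_cfg)), best_cost)
```

Because the search touches the objective only through `cost(cfg)`, a Bayesian optimizer or a Nelder-Mead routine could be substituted behind the same interface, which is what makes a side-by-side comparison of the three methods straightforward to set up.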
